Leaderboard

Popular Content

Showing content with the highest reputation since 05/10/2026 in Posts

-

Interesting Breakdown Of Arri Colour Science

Andrew - EOSHD and 3 others reacted to BTM_Pix for a topic

I thought the title was slightly clickbaity but I’m glad I clicked on this one. There are a lot of “Arri look on your xyz camera” videos but I think this is a far more interesting take on it. The question is are you doomed to never match it because it is baked in (not least the IR cut filter) and it can’t be reverse engineered in post? Can anyone who has bought the Arri license for their supported Panasonic camera chime in with their thoughts?4 points -

The GX85 "Super-16" project

Andrew - EOSHD and 3 others reacted to kye for a topic

I like film and retro filmic looks, but shooting Super-16 (or even Super-8) is still an expensive PITA. After some testing of my equipment, I've realised that my GX85 has image quality equalling or surpassing a Super-16 film camera (with some categories surpassing a Super-35 film camera) so in my pursuit of a pocketable, portable, fun, simple, and fast setup that looks like film, this project is born. The criterion is to work out how to get great images from the tiny setup that are enough like film that most people would believe it if you said it was shot on film. My approach is simply to compare the two and find the biggest differences and then work on bringing them closer together, 80-20 rule and all that. The first point of comparison is already known: the crop factor is similar (2.2x vs 2.88x), so as long as I don't go too hard into shallow DOF this should be comparable. Second consideration is camera movement, shake, and how they'll be used. S16 film cameras can be hand-held, but they've got some weight so are relatively steady in use. 8mm cameras were designed to be hand-held and are much lighter, so will move more. The GX85 is far smaller than either, but has IBIS (and OIS with some lenses) so that should make it feel larger, but I'll have to watch out for parallax, which will give away the camera's lack of heft. Third is the DR. Film has a huge DR and I wasn't sure how this would go - harsh clipping of highlights and blacks will be a dead giveaway. Without knowing anything about its rec709 profiles, I shot an exposure test where I took shots one stop apart. Film negatives have a lot of DR, but print film has far less, with stocks like Kodak 2383 only having about 5-6 stops in the linear range of their exposure (between about 10% luma and 90% luma, before the rolloffs kick in).
Bringing in my test shots and matching the contrast within my standard colour pipeline (based around the Film Look Creator tool in Resolve) I realised the GX85 has enough DR to push its highlights well up into the highlight rolloff curve of the FLC, and same with the shadows, so this is fine too. DR, check! Fourth is resolution and texture. The images should be soft and noisy, but how much? After reviewing a number of sources, I realise that there are all kinds of factors, such as the speed of the negative, how it was exposed (0... or -1 and pushed in post, etc), but often the biggest factor in softness was the lenses used, and the biggest factor on the grain is the processing that the streaming service does when you upload it! In this sense, I have a lot of freedom in these aspects, but I'll have to do further tests on uploading to YT. I have seen videos that have really nice grain in 4K, so I know it can be done, but my previous tests showed the YT compression really changes things, so I'll have to do more tests. Then we're into testing with real images and just seeing what we see. My first test was some random shots in the garden, just to have a starting position. The feedback I got (including one friend who practically lives to talk about film!) was that it looked good but needed more saturation. My thoughts were that I exposed too high (I'd forgotten that the LCD is deceiving and the GX85 has a lot of shadow info) and as such the highlights in the first image were clipped in the file and still show in the graded image. After this test I happened to watch a YouTuber go through their grading process and they said they exposed by putting the image in the middle of the histogram, which made sense to me and I realised this is what I should do with the GX85. Second test was just a few images while out and about. It's the GX85 and 14mm F2.5 pancake lens. 
I'd previously forgotten this lens is both a 31mm and also a 62mm (with the 2x zoom) and so is much more flexible than I was remembering, so I made sure to include some 2x shots to see how useful that was with this level of image degradation. I also decided to push the images to get more of the kind of look I'm chasing. The 2x seems completely fine too, having quality far more than this level of softening will show. I also re-graded them in B&W, pushing the contrast much further. I may even want to go harder on these. Much more work to do, but I'm really liking the process so far. In these days of digital perfection, the attraction of film is in the colours and the texture. If you want the colours and not the texture, wanting to keep a much more modern level of sharpness and noise, emulating some of the properties of film is so ubiquitous that I think it's just called "colour grading". The phrase "film emulation" then is for the texture of film and deliberately wanting the imperfections and aesthetics of it. You don't have to go hard like I have with Super-16 film + Super-16 lenses levels of softening, but if you did this is easily possible too and FLC has 35mm presets which soften, but do so far more subtly than this. I'll continue to iterate on the colours and textures, but moving into moving images is probably next, with all the testing of the YT processing and compression that comes with that. But seriously, imagine telling someone in the 80s that you could fit an interchangeable lens camera capable of shooting feature-film level images in your pocket... Feedback welcome.4 points -

Dammit, v7 had a mistake in it, so re-exported and uploaded it.3 points

-

You may or may not have seen this: Adapter with Native AF Speed!? It seems to work only with a g7 or gh9 ii and no others. It's available for about 100 lenses but works with some others, just not as quickly it seems. On further reading: [Olympus E-M1 series and OM System OM-1 series] Metabones transmits the same PDAF/IBIS data to the camera in the same way as the latest Panasonics, and gets a significant boost in AF speed 3 points

-

There are actually good answers to the 10x question for each FF mount. Sigma 20-200mm is an answer for E mount and L mount. Sony has their own 24-240mm too. Nikon have a 10x near miss with their 24-200mm and an over the top 14.2x 28-400mm. Canon has their 24-240mm too and if you don’t mind mimicking trombone playing while zooming then their old 35-350mm EF is a great bargain. I actually have one of the latter and the range is great.3 points
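The zoom ratios quoted in that post are just the long end divided by the short end. As a rough check, here is a minimal Python sketch; the focal lengths are copied from the post, while the helper name `zoom_ratio` and the dictionary layout are only illustrative.

```python
# Focal ranges (wide_mm, tele_mm) as quoted in the post above.
LENSES = {
    "Sigma 20-200mm": (20, 200),
    "Sony 24-240mm": (24, 240),
    "Nikon 24-200mm": (24, 200),
    "Nikon 28-400mm": (28, 400),
    "Canon 24-240mm": (24, 240),
    "Canon EF 35-350mm": (35, 350),
}


def zoom_ratio(wide_mm: float, tele_mm: float) -> float:
    """Zoom ratio is the longest focal length divided by the shortest."""
    return tele_mm / wide_mm


for name, (wide, tele) in LENSES.items():
    print(f"{name}: {zoom_ratio(wide, tele):.1f}x")
```

On these numbers the Nikon 24-200mm comes out at roughly 8.3x (the "near miss") and the 28-400mm at roughly 14.3x, which the post quotes as 14.2x.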

-

Interesting Breakdown Of Arri Colour Science

kye and 2 others reacted to fuzzynormal for a topic

Yes, you're exactly right. Musing about this is interesting to us, obviously! Here's my rambling take. The Japanese engineers have long been pursuing a different path of imaging evolution, as they've been in "electronics" mode for generations. Their culture kept them away from deep considerations of what film does vs. what digital does. The Japanese simply have a different sensibility about image IQ. This reality in and of itself is a fascinating dive, going all the way back to traditional feudal culture, WWII, national pride, and their "High Increasing Stage" era of the mid 20th century. Their imaging tendencies emerged out of their unique cultural context. In other words, once their engineering IQ evolution preferences were set, there was not much room for it to go off on tangents. ARRI took a different road and put people on the development team that understood the physics and chemistry of film and wanted that look. ARRI engineering cuts and adds different spectrums for very good reasons. Thus, as you say when you mention plugins, emulation of film behavior is the thing, the whole additive vs. subtractive thing, for instance. ARRI does their adjustments in camera. So cinema follows ARRI to chase the IQ they know best, and ARRI becomes the cinema standard. The phrase "color science" is amusing to me. Because, yes, the engineering is science, but ARRI is in fact making their cameras behave with purposeful (and insightful) aberrations. They aren't going for accuracy, they're striving for colors that evoke a certain perception of emotion by being "pretty" which could be considered an artistic pursuit in their engineering craft. "ColorArt" doesn't have the same ring to it though. It's all pretty wild.
I mean, I'm on forums where there are darkroom alchemists chasing esoteric processes to heighten certain color dyes in film, and minimize others by changing their development chemistry -- which is weird as heck and an extremely hard way to affect an image (save that when choosing the stock or when doing the enlargement print imo) but hobbyists aren't rational, they're just playing. There's a whole sub culture of analog photographers that deliberately buy decades old expired film just to see what happens with the dye degradation. All in all, there are an infinite number of ways to achieve color rendition. But, yeah, I'm with you. Give me a decent camera and I'll affect the image with a plugin and get it in the ball park. I'm not positioned financially or emotionally to be chasing the refined fringes of things. I enjoy mulling it over in places like this, but none of this sophisticated color science could be a part of my career. I'm not nearly talented enough to indulge it, nor smart enough for that.3 points -

The GX85 "Super-16" project

maxJ4380 and 2 others reacted to Clark Nikolai for a topic

I use it both for my own films as well as I get hired to do music videos and events. I just finished a feature length experimental film shot entirely with it called Shapes, Colours, Patterns. (There's a trailer for it on my Tumblr. https://clarknikolai.tumblr.com ) I'm very happy with it, and of course the image from that camera is gorgeous. Something I've discovered with the Digital Bolex's footage, is that it looks the best projected rather than shown on an LCD screen. I'm now working on a new project. It's a narrative, collectively written, performed and crewed by myself and three other artists. It's set in the present day in east Vancouver where three artists are working on their art projects. The characters are based on the people involved and their real lives (but fictionalized so we have more freedom.) We're using French New Wave and Availablism methods. Quick half-day shoots. It's self funded, using what we have around us, the equipment we already own, locations we already have, etc. (I think so far all we've spent on it was some coffees.) I plan to enter it in to film festivals when it's done. Here's a picture with the camera mounted backwards on the shoulder rig. This is so the camera operator can walk forward while the talent is behind them and they don't need a spotter. It's tricky to learn how to move but it's going okay. It works fine with a wide lens but not easy when zoomed in (as you'd expect.) We have to flip the image in the monitor or it's disorienting.3 points -

This guy makes any camera shine.

Katrikura and 2 others reacted to fuzzynormal for a topic

The secret sauce is skill. Speaking as someone who used to make my living doing travel videography decades ago, this Brandon guy has really honed the judgement it takes to get the shots. There's so much going on out there in the environment and he's able to omit it, control it, and/or shape it into something impressive. It's really quite a thing to do. He could make any camera manufactured in the last 15 years look similar to this. In fact, he has. This guy is a cinematographer that really knows how to chase the light, compose a shot, and also create advantageous serendipity. Which might sound like a paradox, but it really isn't. But, yes, images like this sell cameras. Okay, buy the camera if you'd like and start the path to making an edit like this. You can't buy his boots-on-the-ground experience though. He's casual about it all during his "how-to" segment, but it really is the biggest factor here. 3 points -

LUMIX L10 - announced

John Matthews and 2 others reacted to kye for a topic

Well, they just launched the Canon R6V, so I hope Panasonic enjoyed their 20 hours of PR!3 points -

So there are indeed some details in the video specs that will be of note to some.

Internal Recording

H.264/H.265/MOV/MPEG-4 AVC 4:2:0 8/10-Bit
- 5674 x 2988 at 23.98/24.00/25/29.97/47.95/48.00/50/59.94 fps (200 to 300 Mb/s)
- 5184 x 3888 up to 23.98/24.00/25/29.97 fps (200 Mb/s)
- 4352 x 3264 up to 47.95/50/59.94 fps (300 Mb/s)
- 4096 x 2160 at 23.98/24.00/25/29.97/47.95/50/59.94/100/120 fps (100 to 300 Mb/s)
- 3840 x 2160 at 23.98/24.00/25/29.97/47.95/50/59.94/100/120 fps (70 to 300 Mb/s)
- 1920 x 1080 at 23.98/24.00/25/29.97/47.95/50/59.94/100/120/200/240 fps (16 to 200 Mb/s)

H.264 ALL-Intra/MPEG-4 AVC 4:2:2 10-Bit
- 4096 x 2160 at 23.98/24.00/25/29.97/47.95/50/59.94 fps (400 to 600 Mb/s)
- 3840 x 2160 at 23.98/24.00/25/29.97/47.95/50/59.94 fps (400 to 600 Mb/s)
- 1920 x 1080 at 23.98/24.00/25/29.97/47.95/50/59.94/100/120 fps (200 to 400 Mb/s)

Interesting to note that Panasonic have sidestepped the Micro HDMI bleating by just fucking it off altogether. Although it was playback only on the original anyway. 3 points

-

New cinema camera...?

eatstoomuchjam and one other reacted to maxJ4380 for a topic

Ok it's official, i have no willpower at all... as you can see not $700 but that was expected. I like the little embargo sticker; talking to a salesman, they were only allowed to start selling these from yesterday. Hn gave me a gift card of $100 so the grip comes down to $54, easy decision. I did take it out of the box to charge it up on the way home as i had a usb to c cable in the car. I do wonder if i'm going to need a better memory card, what i have now is probably a bit ordinary. Big day tomorrow it would seem. We'll find out tomorrow, i'll let the suspense build :) 2 points -

no 12 bit codec (whether it's PR444 or raw) makes it kind of DOA for me. that tended to be kinefinity's main selling point imo. conceptually its cool, but I just got a BMCC6K FF for 1500 euros second hand as a B-CAM. same sensor, same open gate capability, gives me an option to use an EVF as well, and it lets me do more in the grade.2 points

-

The GX85 "Super-16" project

John Matthews and one other reacted to kye for a topic

Stepping away from film emulation for a moment, the thought occurred to me that we have softened the image enough for the 2x and 4x in-camera digital zooms to be tested. The GX85 sensor is 4592px wide, which with its 1.1x 4K crop factor, means that the horizontal resolution for its 1x is about 4174px, with the 2x crop it's about 2087px, and the 4x is about 1043px. Normally the 2x is usable on a 1080p timeline and the 4x is not, but with the film emulation on it, the only way to find out is to test it in the real world.

Here is the GX85 with 14-140mm lens on it at F5.6.

Optically zoomed to about 25mm:

At 14mm and zoomed 2x in-camera:

To me this looks completely fine. So far so good.

Optically zoomed to about 50mm:

At 14mm and zoomed 4x in-camera:

This is the big one. I see some differences here, but not enough that if I saw this in an edit it would pull me out of it, which is really what I'd want - to know if it's usable.

For some context, I shot this test with three lenses, the 14mm F2.5 pancake lens (which is the lens I'll be using), the 12-35mm F2.8 and the 14-140mm shown above. While the light was changing during the test, what is interesting is that the 14mm F2.5 has much less contrast. The reason I point this out is that when comparing the 14mm F2.5 with in-camera zoom to the other lenses I think the lens rendering itself is the most significant difference.

2x comparison:

and now for the main prize, 4x:

This is an excellent result as far as I am concerned!

Caveats:
- This is with the v5 film emulation grade, which as @eatstoomuchjam pointed out might be on the softer side of the range for what 16mm film rendered
- However, this grade DID NOT include the lens emulation stack, so the lens barrel distortion, vignetting, and corner softening haven't been applied, so that could go some way to evening out the look
- I haven't done any sharpening to compensate for any softness. In the past I've discovered that a digital zoom of about 1.6x can be equalised by some careful sharpening in post, so sharpening isn't to be underestimated, especially in the context of an edit where (hopefully) the viewer is looking at the content and not the image
- Super-16mm film was often shot on several primes, where some would have been sharper than others, or on zooms, where the zoom would have been sharper in certain parts of its range than others, so natural variation in this stuff is potentially making it a more accurate emulation rather than detracting from it

I suspect that the 4x is on the edge of being too soft, which makes sense as it's a 1K sensor readout going through a lot of processing and rescaling, but this is a great result.

So.... the GX85 and 14mm F2.5 lens is a Super-16mm setup with a "turret" of T2.5 lenses that are equivalent to 31mm, 62mm and 124mm, and it's (just) pocketable and fits in the palm of your hand! Happy to share the power grade if anyone wants to play with it. 2 points -
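For anyone who wants to check the digital zoom arithmetic in the post above, here is a minimal Python sketch. The sensor width, 4K crop, and 14mm focal length are the figures quoted in the post, and the 2.2x crop factor is the one quoted earlier in the thread; the function names are only illustrative, and the model assumes the in-camera zoom is a plain centre crop.

```python
# Figures quoted in the thread; treated here as assumptions, not measured values.
SENSOR_WIDTH_PX = 4592   # GX85 sensor width in pixels
CROP_4K = 1.1            # extra crop the camera applies in 4K mode
FOCAL_MM = 14            # the 14mm F2.5 pancake lens
FF_CROP_FACTOR = 2.2     # full-frame crop factor in 4K mode (2.0 x 1.1)


def readout_width_px(zoom: float) -> float:
    """Horizontal sensor pixels feeding the image at a given digital zoom."""
    return SENSOR_WIDTH_PX / CROP_4K / zoom


def equivalent_focal_mm(zoom: float) -> float:
    """Full-frame equivalent focal length at a given digital zoom."""
    return FOCAL_MM * FF_CROP_FACTOR * zoom


for zoom in (1, 2, 4):
    print(
        f"{zoom}x zoom: about {readout_width_px(zoom):.0f}px wide, "
        f"about {equivalent_focal_mm(zoom):.0f}mm equivalent"
    )
```

The results agree with the post's numbers to within a pixel or a millimetre of rounding, and they show why the 4x setting is the risky one: it is working from roughly half the 1920px width of a 1080p timeline.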

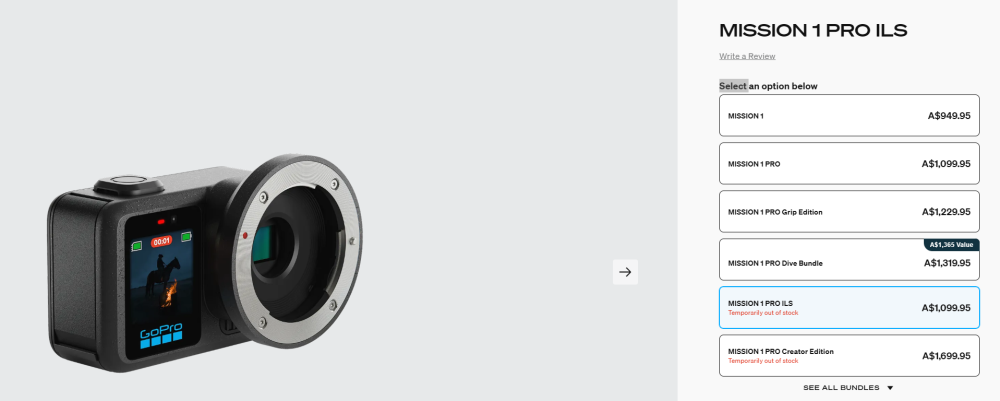

It was better when you could buy stuff under $1000 and pay no additional taxes. Gerry Harvey complained to the government he was losing too many sales. That's when the 10% GST got applied to everything and the government had a win and Gerry Harvey could suddenly afford a few more race horses as well. For a while those overseas ebay buys were a bargain as the middleman got cut out of the deal. However i suspect the manufacturers or sellers have upped the base price regardless of middlemen or not and the savings aren't as spectacular as they once were. Ah ok, i read that as a reasonable (price), the opposite of what you meant lol, although not sure what gopros have normally sold for over there. I think i paid $500-600 for the 9 when it came out. Confident i looked around for the best "local" deal. Playing devil's advocate momentarily, and to be honest i have no interest in the other models: a new chip, decent capacity battery it seems, and ability to mount mft lenses, and that 1080 at 960fps even at 10 seconds is impressive. I think i would be happy to pay $700 even though it's a jump over earlier gopros, but it won't be $700 here, I'm expecting $1000-$1300. Er, i just googled mission 1 in aus. Seems like they're here, except the ils gopro, and it's $1100 which might come as a bit of a shock to some of you 🙂 2 points

-

The return of the Digital Bolex

Andrew - EOSHD and one other reacted to Clark Nikolai for a topic

Just FYI, if anyone on here is looking for one. This one is 5000 Euro. https://cinematography.com/index.php?/forums/topic/104495-for-sale-digital-bolex-d16/ 2 points -

LUMIX L10 - announced

John Matthews and one other reacted to MrSMW for a topic

https://www.43rumors.com/just-announced-new-panasonic-lumix-l10/ I wasn't that interested until earlier today when I went to set my S9 up for the imminent season only to discover the audio on it has stopped working. Or it is at least intermittent enough that I can't use it until it's fixed, and there is zero chance of that happening before my season starts. I am also between 2 countries, so which one to even have it fixed in is a bit of an issue. So I ordered a used S5ii from @Andrew - EOSHD's favourite used camera emporium to tide me over. But then, less than 2 hours later, my YouTube feed gets flooded by the Lumix Bros who have been on another jolly and...actually, as above, wasn't particularly interested as I had my S9 as my compact C cam and social media unit...except, this would work even better, so probably going to put a preorder in as they should be available in June. The only thing I find odd is that every man and their dog has a video or press release and the only ones who don't seem to have mentioned it yet are Panasonic Lumix themselves. Same funny old Lumix marketing department 🤔 2 points -

Please recommend me some Manual Focus EF lenses!

Aussie Ash and one other reacted to stephen for a topic

Samyangs at f1.4 are not cheap plus they are big and heavy. As far as I remember you don't like big and heavy. Maybe older Sigma lenses from the HSM generation as Anaconda_ suggested. Sigma 24mm f1.8, 30mm f1.4, 50mm f1.4 HSM. They are all under 200 E/$. Not small though. If you want smaller and cheaper lenses then you have to accept they start at f1.8, f2 and f2.8. Then you have lots of options and not only with Canon. You can also adapt vintage lenses to EF by adding a second adapter. With a speed booster they become f1.4 to f2, both quite usable in low light on m43 in my personal experience. I was in this place more than 5 years ago with the BMPCC 4K. Went through speed boosters, calculating focal lengths, spending lots of time researching and trying to build decent lens sets both vintage and modern. My frustration grew with time as I realized this won't be cheap nor ideal for my goals. Went FF and never looked back. My decision was driven specifically by lenses. I realized the hard way a well-known truth: We buy systems not cameras and lenses are more important than cameras. If I want to use vintage lenses, cheap lenses, have access to the largest pool of lenses - FF is the right choice. I may change one FF camera system for another, my vintage lens sets stay, lenses have the same focal length and character. Same is true for Canon EF lenses. A FF system is no longer more expensive than m43, especially when we include the lenses. Example: Panasonic S5 vs Panasonic GX85 + speed booster. Paradoxically a FF system (body + lenses) may even come cheaper than m43 + speed booster due to the large pool of lenses. 2 points -

New cinema camera...?

maxJ4380 and one other reacted to eatstoomuchjam for a topic

I worded my response badly! I meant that the $700 US price tag was "already there" in terms of 'exorbitant, ridiculous, and "this is just an action camera - WTF"' which Kye had just said in anticipation of the AU pricing. I should have chosen phrasing that made it more clear that I wasn't simply repeating US pricing that is already known. 😅2 points -

Please recommend me some Manual Focus EF lenses!

zerocool22 and one other reacted to Anaconda_ for a topic

2 points -

https://drive.google.com/drive/folders/1ayD92_55eZom1FTxYGxE6KA_fnjkNwHn 2 points

-

Interesting that WB is placed before De-bayer. Photographers call it "white balance scaling" and prefer it not to be done by the manufacturer, since it's digitally boosting channels, which is an irreversible process. After all, it's not like everything they're doing is science. Some decisions are just an engineer thinking it's the best compromise. 2 points

-

PC build for editing | davinci resolve

ac6000cw and one other reacted to Samantha_Hong for a topic

I’d also make sure not to skimp on storage speed - having a fast NVMe drive for your active projects and cache files can make a surprisingly big difference to overall responsiveness, especially when working with larger footage formats.2 points -

Interesting Breakdown Of Arri Colour Science

kye and one other reacted to Simon Young for a topic

It’s not even close.2 points -

Interesting Breakdown Of Arri Colour Science

Ilkka Nissila and one other reacted to ND64 for a topic

Some of Nikon's portrait F mount lenses do the same. They deliberately keep red light from focusing on the same plane as blue/green, much like the less-corrected glass of the old days. So red is a bit out of focus, by a very, very tiny amount, though that amount changes with focus distance. They usually optimize for portrait distances. They abandoned that approach with Z mount lenses. 2 points -

Any used camera suggestions for under $400 for YouTube?

newfoundmass and one other reacted to fuzzynormal for a topic

I bought a used Em10iii over 5 years ago for $300 and haven't stopped using it since. Nice 4K video and you can put good vintage lenses on it for next to nuthin'. Yes, concentrate on lighting and getting a good mic (I use a Tascam DR10L) but do know there are a lot of good affordable cameras out there too. I also have 2 old GH4's in the cabinet. They're cheap as well.2 points -

Any used camera suggestions for under $400 for YouTube?

newfoundmass and one other reacted to srgkonev for a topic

Thanks guys! Really appreciate the answers! Yeah, I'll probably focus on getting mic and lighting setups first. 2 points -

For the talking-head stuff, almost any camera will be good enough if given enough light, so I'd suggest you concentrate on getting 1) enough light so your camera is at its native ISO, and 2) lighting that is flattering and creates depth and contrast in the image. There are lots of videos on YT that show this, and the before/afters show what is possible. You don't need expensive lights either, there is tonnes of info on home DIY hacks using lamps and cheap shower screens as diffusers, etc. The standard approach is 3 Point Lighting, like this: This video is a good primer and talks about how to use (or avoid) existing light sources like natural light and ceiling lights etc. Other videos that might be useful: This video is longer but starts with a complete setup, so acoustics etc too. Cameras get all the attention, but in the real world are some of the least important parts of the whole setup. You're lucky in that you're building something indoors for one specific use in an environment you control and (hopefully) doesn't have to be portable and easy/quick to setup and pack away. With a bit of effort you should be able to get a great setup that works really well and doesn't cost much at all.2 points

-

The GX85 "Super-16" project

kye and one other reacted to Clark Nikolai for a topic

Thank you. I'm glad people are liking it. It was a lot of work and took two years to make. Most of the time by myself, out in the city with a tripod and camera. I met a lot of people doing it since the camera looks unusual. (It's common in Vancouver to see someone filming as it's a big film production town and has six film schools but people out shooting usually have more modern squarish looking cameras.) The themes and aesthetic came out of the photography I had been doing for several years already. I had been framing buildings to make geometric shapes. This was basically adding motion to that series. The music was from a friend who I had got to know when he acted in a short I did a few years earlier. https://testcardmusic.bandcamp.com It hasn't had a festival screen it yet but it did get an award in Sevilla, Spain. https://www.instagram.com/seviff.spain/p/DUTcVcGDLq7/?img_index=16 2 points -

Fast lenses and film emulation can resurrect old cameras (ft. GH2 night footage!)

Andrew - EOSHD and one other reacted to kye for a topic

Old cameras have a number of challenges, including:
- weak codecs, often 8-bit low bitrate files
- terrible low-light
- dated colour science and no log profile (rec709 profiles only)
- poor DR
- lack of IBIS or EIS
- etc

At the time these were pretty significant challenges. Now they aren't the challenges they used to be, because fast lenses and film emulation assist with all these limitations. Let's take these one at a time.

Weak codecs

Weak codecs, including 8-bit low-bitrate files, can be soft, and can be overwhelmed by motion. By shooting with faster lenses you render more of the frame out-of-focus and therefore the limited bit-rate only has to focus on a smaller percentage of the frame. Thanks to cheap Chinese optics companies, we are now awash in F1.4, F1.2, and even F0.95 primes. The soft image is now no longer a liability, because compared to our modern 4K sensibilities, even 35mm film is noticeably soft. This means that by adding film emulation you'll be softening those edges and smoothing over any subtle compression artefacts. Film often has a more compressed colour palette, pushing hues closer together in many instances, lessening the visibility of artefacts. It doesn't work magic, but every bit helps.

Terrible low light

Cheap F1.4, F1.2 or even F0.95 primes sure make a big difference after the sun goes down. That "fast" F2.8 vintage lens you were shooting on back then is 2 to 3 stops slower than these things now. That can really bring a lot of situations back from being unusable to being at, or close to, native ISO.

Dated colour science and no log profile

Rec709 colour profiles are basically a creative filter the camera has applied, and they often weren't that good. Film emulation takes that image and applies an incredibly large transformation over it, which goes a long way to hiding any imperfections the colour profile might have had.
It's like if you put on a pair of rose-tinted-glasses, you can still see that things have different colours, but any subtle differences aren't visible because the image has had a strong look put over the top. Also, film emulation plugins often come with controls for exposure and WB etc, which can help to grade the 709 footage, which was a major pain back before we had colour management pipelines.

Poor Dynamic Range

You know what else has pretty poor DR? Print film! Kodak 2383 has about 5-6 stops in the linear region, and then everything else in the image is squished into the highlight or shadow rolloffs. Yes, you can see into those rolloffs a bit, but if your camera has 8 stops then you've got at least a stop to put into each rolloff. People think film has huge DR, and it did at the time compared to consumer digital cameras, but it was the negative film that had the huge DR, not the print film. It's very common now for people to shoot on film, scan it, and then do everything else digitally, so they keep the full DR of the negative, rather than taking half of it and pushing it into the rolloffs.

This is a still from Minority Report from 2002:

It's not exactly a dynamic range demo - the streams of light INSIDE THE ROOM are blown out and every item of clothing the main character is wearing is crushed blacks.

Lack of IBIS or EIS

So there's a little shake in the files... well, film had this thing called Gate Weave, which was where each frame didn't perfectly align in the camera and so when played back there was movement of the whole image. Once we started doing digital intermediates people started stabilising the images digitally and that went away.
When I went to the cinema and saw Goodfellas projected on celluloid they played a bunch of old ads and movie previews also on celluloid, and some were jumping around all over the place and some were rock solid (which means the projector the theatre was using wasn't the source of it) and much to my surprise, Goodfellas itself had quite a bit of it. By just using modern tools you can now stabilise things pretty easily, but this will create artefacts if you do it too strongly (especially if the camera had bad RS), but applying film emulation gives you much more leeway. This is because you can stabilise the image, then apply some Gate Weave, and once the viewer notices your images look like film they'll potentially just accept the shake in the image as being part of the film look. By adding Gate Weave and getting some grace from the viewer you can potentially increase the strength of the stabilisation you're applying too, with there being more wiggle room, and also because the softening of the image will mean that any distortions in the image will be slightly less visible. I was inspired to write this partly from my GX85 Super-16 camera project, but also partly by this video of the GH2 shooting at night. You can still see the ISO noise and macro-blocking creep in as blue hour ends, but he was also using the 9mm F1.7 and 35-100mm F2.8, the F1.7 is reasonably bright, but the F2.8 is pretty slow compared to things like the TTartisan 50mm F1.2 or the 7Artisans 35mm F1.2 primes that are $109 and $97 on B&H. These won't offer OIS, so your options for these on non-IBIS cameras are to spend more (Canon and Sony both offer 35mm and 50mm F1.8 primes with OIS) or to use a tripod or larger rig of some kind. Far from perfect, but much more useable than you'd think. These cameras have actually gotten better over time as the rest of the ecosystem is better able to support them. 
The only reason we don't think so is that our expectations have inflated faster than their potential.2 points -
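For anyone who wants the DR arithmetic in the post above spelled out, here is a rough sketch in Python. It assumes the stops left over after filling the print stock's linear region are split evenly between the shadow and highlight rolloffs, which is a simplification for illustration and not something measured. The function name is hypothetical.

```python
def stops_per_rolloff(camera_dr_stops, print_linear_stops):
    """Stops available for each rolloff, assuming an even shadow/highlight split."""
    spare = camera_dr_stops - print_linear_stops
    if spare < 0:
        raise ValueError("camera DR is smaller than the print stock's linear range")
    # Assumption: leftover stops are shared equally between the two rolloffs.
    return spare / 2


# Figures from the post: an 8-stop camera against 5-6 linear stops of print film.
for linear_stops in (5, 6):
    spare_each = stops_per_rolloff(8, linear_stops)
    print(f"{linear_stops} linear stops -> {spare_each} stops per rolloff")
```

With the post's numbers this gives between 1 and 1.5 stops per rolloff, which lines up with the "at least a stop" estimate.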

The GX85 "Super-16" project

kye and one other reacted to Clark Nikolai for a topic

Here's a pic from a shoot I did last December. I don't know the brand of the shoulder rig (as I got it used on Craigslist), the EVF is the (sadly discontinued) Kinotehnik LCDVFE. The camera attaches to the rig with a Niceyrig quick-release plate (that has feet). The lens is a vintage Angenieux 17-68mm zoom with a screw on wide angle adapter, on top is a Niceyrig top handle holding an Audio-Technica stereo mic and a monitor mount. A bit hard to see is an attachment that goes below the rails between the shoulder pad and the grips for two wireless mic receivers.2 points -

This guy makes any camera shine.

kye and one other reacted to Clark Nikolai for a topic

So true. Our training and experience give us an eye for composition and framing. Last winter when I was in Mexico, as a tourist, I started shooting with my iPhone. I thought it was boring so I used an app that replicates grainy, dirty Super8 and shot with that. I realized that I also needed to shoot like another person. I needed naïveté in my shooting, so I chose "1960s dad with his Super8 camera". So, I did things like shoot the waves in the ocean with a slow pan to the shore, signs, cars going by, etc. It was refreshing not to have to be so perfect all the time. (Now unfortunately I have to edit it and the footage is not exciting me, but that's a different story.)2 points -

What is the significance of Homer's "Odyssey" ?? in 3 minutes

eatstoomuchjam and one other reacted to Andrew - EOSHD for a topic

Let's change the subject. Terrence Malick is the most overrated boring director ever, adored by hipsters, hated by real filmmakers.2 points -

My understanding was that Brandon literally helped make the genre with (what I like to call) "washing machine travel films" like Hong Kong Strong that are like you rolled and spun a camera through a city and then cut it with only match-cuts - they trigger my motion sickness pretty strongly and I literally can't watch them. However, people loved it and he got a bunch of TV appearances out of it: As previously said in the thread, he can make any camera from the last decade shine, and he has, and it's skill. All true. What no-one else has said though, is that videos like this are film shoots. They're not holidays, or someone filming while traveling (even slow travel). These videos are researched, storyboarded, scheduled, and then shot on location with a cast (him and his GF, but often he recruits locals and will direct them like he's shooting a narrative) and crew (IIRC he's mentioned hiring people to fix, drive, translate, liaise, etc). This is no secret, and his free BTS content shows this openly. I think he sits in a fascinating space that I don't see a lot of professionals operating in. He shoots uncontrolled (and uncontrollable) situations, like markets and crowded public places, does so with talent and a shot list, but does so shooting relatively low-impact. People shooting a travel doc will be shooting with talent in markets and in the streets but will have huge shoulder-rigs and will build up a little crowd of people who are just staring at the shoot and have to be corralled to keep them out of frame. People shooting relatively incognito in a crowd are mostly doing it without talent or a plan or shot-list. Not a lot of people sit between those two scenarios, and even fewer will tell you how to go about doing it. 
I've paid a lot of attention to his BTS segments (which are excellent if you want to make videos like this) but as someone who travels for the enjoyment of it and shoots along the way, I can tell you that there is very little overlap between shooting while you travel and producing and shooting and editing a travel film. I put myself on the email list for when he launched his course, and when it was released it was pretty pricey. Probably good value as he obviously knows what he's doing, but too much for me considering the differences in our methods. Maybe it's an aesthetic thing, but his work looks dated to me now, including the Oppo piece. I understand why he still shoots these things like this, because he's appeared in quite a number of videos like this that are posted on the manufacturer's channel, rather than his own channel, so it's obviously how he keeps the lights on.2 points

-

I originally read the title as “This Guy Makes Any Camera Shite” and thought my cloud account had been hacked. I enjoy watching Brandon Li’s stuff, particularly the self shooting ones. Self shooting as in shooting on your own as though someone else is shooting rather than self shooting in the vlogging context. Tripods basically.2 points

-

Typing that reply certainly did make me warm even more to the LX100 and L10, but considering I have the GX85 and 14mm F2.5 already (so they are a FREE option!) it's pretty hard to beat for a number of reasons. The size with the 14/2.5 is similar, and it doesn't get larger when you turn it on: As I edit in 1080p and am softening the image in-post rather than sharpening it, the 14/2.5 on the GX85 can be a 31mm and a 62mm (with the 2x zoom function) which are both absolutely awesome focal lengths for shooting how I like to shoot. I can easily bring other lenses if I am shooting something worth putting some effort into shooting (ie, it's not just an EDC opportunity). I've shot an absolute ton of tests on the GX85 so I know it inside and out. Like most of us here, I crave the simplicity of having a fixed-lens setup that would do most of what I want, but I also crave the choice and freedom of the options that an interchangeable lens mount provides!2 points

-

Sony a7r VI (Mark 6) - an a1 beater for $2000 less

Andrew - EOSHD and one other reacted to BTM_Pix for a topic

2 points -

I may have dreamt this but either in Flickr itself or an external site that used its data, you were able to search by EXIF so you could look at the extremes of the lens range and in this case it would be to display all LX100 images at 24mm f1.8 and 70mm f2.8 which was a more informative way to do it. People can fuck up the composition and the processing but even they struggle to overcome the physics. Welcome to episode two of the series! Fixed. The EIS is an additional crop as far as I can recall.2 points

-

Need to vent... MPB are a f-ing nightmare

Andrew - EOSHD and one other reacted to BTM_Pix for a topic

Unless of course they got me mixed up with Gerald Undone.2 points -

8-bit REC709 is more flexible in post than you think

amoricanseth reacted to kye for a topic

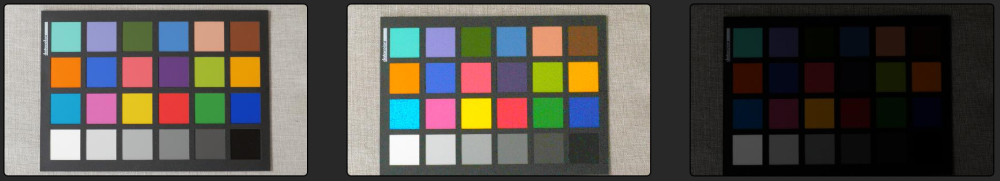

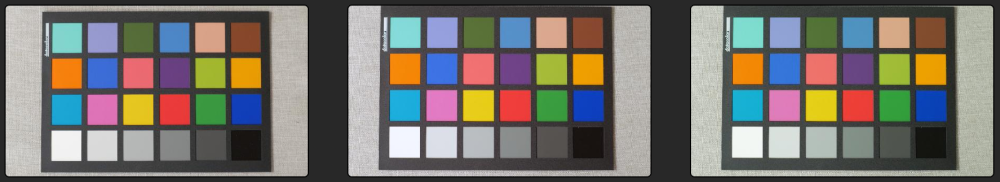

8-bit rec709 profiles are much more flexible in post than you might think. I'm not saying they're as good as an Alexa or whatever, but they're a million miles better than people give them credit for. Here's a latitude and WB torture test of the GX85, in the standard profile (8-bit rec709) customised to have reduced contrast but normal saturation.

For each of the below, the left image is the properly exposed reference image, the middle one is the graded image, and the one on the right is the image SOOC.

Exposure latitude test - +3 stops:

Exposure latitude test - +2 stops:

Exposure latitude test - +1 stop:

Exposure latitude test - -1 stop:

Exposure latitude test - -2 stops:

Exposure latitude test - -3 stops:

Exposure latitude test - -4 stops:

Exposure latitude test - warm:

Exposure latitude test - cool:

Exposure latitude test - magenta:

Exposure latitude test - green (as far as it would go!):

Notes on the testing method:

- GX85 shot in manual mode on a cloudy day
- Exposure varied by changing lens aperture
- WB varied by changing colour temp and tint
- Tools used in Resolve were mostly Lift/Gamma/Gain, saturation, and some had a bit of Shadows/Mids/Highlights

Notes on the results:

- If it's clipped then it's clipped, there's no getting around that
- If you've shot a whole sequence in the wrong WB then shoot a test chart replicating the error, spend some time on the correction, then apply to all the shots... a bit of work but worth it to rescue a day's shooting
- If you've shot on auto-exposure / auto-WB and want to correct the small errors then this is easily possible - it won't have gotten it nearly as wrong as what I have shown above
- Most cameras shooting in rec709 will do funky stuff to colours depending on their luma value as part of the look of the profile, so when you over/under expose and then pull things back the hues and saturation levels will have shifted around in odd ways compared to a normal exposure, but if it's a real shoot then you most likely won't have many dominant hues in the image and you only have to correct the ones that are in the frame and distracting, so the above is far more work than normal shooting would be

The days of needing RAW or even LOG to change exposure or WB in post are gone, and although the rec709 profiles often have lower DR than log profiles, they're much better than you think. If anyone wants me to post full-sized versions with titles so you can flick back and forth then just let me know. Happy shooting!1 point -

LUMIX L10 - announced

Emanuel reacted to newfoundmass for a topic

This looked and sounded really good until they said there was no IBIS. I suppose this makes sense as they emphasized this is targeted for photographers, but I do hope they release a video focused model that includes IBIS because I would love a genuinely pocketable video camera like this.1 point -

C-mount lenses on smartphone

Andrew - EOSHD reacted to Cosimo for a topic

A mix of two pieces of glass: a Schneider Cinelux front and a Moller 32 on the rear. The taking lens was a Soviet Vega 20mm C-mount.1 point -

Need to vent... MPB are a f-ing nightmare

BTM_Pix reacted to Andrew - EOSHD for a topic

It's a pity we can't reply to these emails with something like "Is it just a hobby or a real business?" "Did you mean to send that email?" How do these marketing boffins come up with this tone-deaf stuff - are they psychopaths? yes1 point -

C-mount lenses on smartphone

Cosimo reacted to Andrew - EOSHD for a topic

What was this custom anamorphic, and what was the ground glass adapter? Liking the look1 point -


@kye Sorry, I did not post those porno links. My account was somehow stolen, sorry again for the inconvenience. Thanks 🙏1 point

-

Need to vent... MPB are a f-ing nightmare

eatstoomuchjam reacted to BTM_Pix for a topic

I hope MPB have looked at this thread at some point because I’ve just had an email from them entitled “Was it just a phase?”. I thought it was going to be something punny about phase detect AF and trying to alert me to their range of manual focus lenses or some such but no, it’s suggesting that photography must’ve just been a phase because I haven’t bought anything from THEM for a while. Had they entitled the email “Is it because we have gone really quite expensive and frequently lose your mate’s cameras?” then I’d have agreed with them that this is the reason I haven’t bought anything from them for a while. My “phase” of being a photographer, which began a comfortable three decades before your company even existed, remains intact MPB but I tell you what is now a phase and that’s ever buying anything from you, you cheeky patronising pricks. When someone who has been a frequent customer since you began stops buying, you might want to look inward as to what might have caused their buying to fall off a cliff.1 point -

The video was an improvisation test focused on capturing the human face using my modded phone. It wasn’t a planned shoot, just a quick experiment to see how the device performs in real conditions. Unfortunately, many clips were deleted because I didn’t have a tripod and was holding the phone by hand, which made some recordings unstable or unusable.1 point

-

One Decade

j_one reacted to fuzzynormal for a topic

The GH5 has been my workhorse for almost a decade now. For whatever reason, the need to move on from it has never been necessary, so I've stuck with it. For instance, AF is not an issue. Manual focus is how lenses get used by me. Slow-mo is a thing to do less of, not more of, imo. A full 10 years on, what does a different camera offer; like really offer? An extra stop of exposure? An extra bit of DR? Looking at a GH7 the thought is, "MMM, pretty nice." But then what? A big difference in ... what ... gets captured? Maybe the market has matured TOO much for me?1 point -

Back from a visit to Japan. We spent most of the time in a small town but went to Tokyo for a weekend, so I shot a lot in Tokyo and used the rest of the time to test a range of lenses I took just for that purpose. I tested the 12-35mm F2.8 for Night Cinema and it worked great and I loved the images, but as it got darker I kept cranking up the ISO and in the end it just didn't have the levels for the truly dark backstreets. I also tested the tiny 35mm F1.6 c-mount CCTV lens I got off ebay some time ago. It produced some really nice images in the right scenarios, but the plane of focus was so incredibly distorted that any scene with stuff off-centre in the frame would look really strange. It had more level than the 12-35mm but still fell short of my better options. My themes for the place emerged very quickly.... vending machines, bicycles, and lanterns. Anyone who has been to Japan will be surprised by this exactly zero percent. At this point we went to Tokyo and I treated it like a Night Cinema interval event, basically shooting as much as I could. I shot a whole sequence from the hotel window as the sun set using the Takumar 50mm F1.4 and SB, my go-to setup. I did a number of walks around the local area with the same setup. Each time I went out I liked using the setup more, and each time I reviewed the files I liked the images I got from it more as well. After China I was feeling like it was a bit too vintage / low-fi but I've really warmed to it since. I found myself a bit at odds with Japanese culture, especially in regards to the fervent dislike of badly-behaved foreigners and the locals' dislike for being filmed in public (despite the fact no-one will tell you they don't like it), so I mostly filmed the place and not the people, or at least I didn't tend to film individual people, instead including them small in the frame, or en masse, or out of focus. I think that lent itself to the cultural experience as well. 
The city, and to a large extent the culture, dwarfs the individual, placing the focus on the group. As a tourist I can only glimpse the culture from afar, so taking the perspective of the outsider in the compositions is very much representative of the experience. My "big" outing was a walk from Shibuya to Harajuku on our last night there. As these places are known for youth and fashion and culture (and the counter-culture that fashion normally draws from) I concentrated on the grittier side of these areas. I also leaned into the layers and the overall chaos of the place, taking advantage of the Takumar's ability to focus on a small slice of the chaos, both through the 70mm FOV and also the shallow DOF. Back in the small town I did more "test" walks with the TTartisans 50mm F1.2 (100mm F2.4 equivalent), the Helios 44M + SB combo (82mm F2.8 equivalent) and Takumar + SB combo for comparison (71mm F2.0). As the small town was much less dense I found the extra reach of the TTartisans to be useful, and the DOF was shallow enough to be useful at distance, and the image was much cleaner across the frame compared to the Tak. The Helios 44M was a different beast. I felt like I was fighting with it basically the whole time and came back from the shoot thinking it was a bust and I'd wasted an outing. The FOV often seemed wrong, it lacked the aperture to get enough light to the sensor and I was pushing the ISO a lot, the DOF was also deeper and so I found myself having to get closer to objects to get the separation I wanted, which then meant I was too close and the parallax motion from my hand-held movement was really distracting. The focus on my copy is very stiff and it is a very low gear so to go from distance to closer focus had the ergonomics of opening a jar where something sticky had gotten into the threads. Still, I got back from the shoot and lots of the images looked really nice, which I think is to do with the extra diffusion this has. 
It was also better behaved on the edges of the frame compared to the Tak too. One thing that isn't obvious from the frame grabs is the ghosting from the strong light-sources in frame, and because I shoot hand-held and have IBIS active, they move in unnatural ways. At first I thought they were coming from my vND but if anything they got worse on both the TTartisans and Helios after I took it off. I think due to this I'll have to lean into the imperfections in the grade and edit and go lo-fi, which is why I've applied a film emulation softening equivalent to 20mm film to the Helios footage. I also shot a lot with the iPhone 17 while there, normally during the day for non-cinema purposes, but that's a different topic for another time.1 point
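The "equivalent" figures quoted in the post above can be reproduced with a small Python sketch. The numbers below are assumptions, not taken from the post: a 2.0x Micro Four Thirds crop factor, a 0.71x focal reducer standing in for the "SB", and 58mm F2 as the Helios 44M's native spec. The function and constant names are made up for illustration.

```python
MFT_CROP = 2.0        # assumed Micro Four Thirds crop factor
SPEED_BOOSTER = 0.71  # assumed focal reducer magnification for the "SB"


def full_frame_equivalent(focal_mm, f_number, reducer=1.0, crop=MFT_CROP):
    """Return (equivalent focal length, equivalent f-number) for FOV and DOF."""
    scale = reducer * crop
    return focal_mm * scale, f_number * scale


# (name, native focal length in mm, native f-number, reducer magnification)
lenses = [
    ("TTartisans 50mm F1.2", 50, 1.2, 1.0),
    ("Helios 44M + SB", 58, 2.0, SPEED_BOOSTER),
    ("Takumar 50mm F1.4 + SB", 50, 1.4, SPEED_BOOSTER),
]

for name, focal, f_number, reducer in lenses:
    eq_focal, eq_f = full_frame_equivalent(focal, f_number, reducer)
    print(f"{name}: {eq_focal:.0f}mm F{eq_f:.1f} equivalent")
```

Under those assumptions the results round to the 100mm F2.4, 82mm F2.8 and 71mm F2.0 figures in the post. The equivalent f-number here describes depth of field, not exposure.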
