androidlad

Posts posted by androidlad

-

DJI Mavic 3

In: Cameras

-

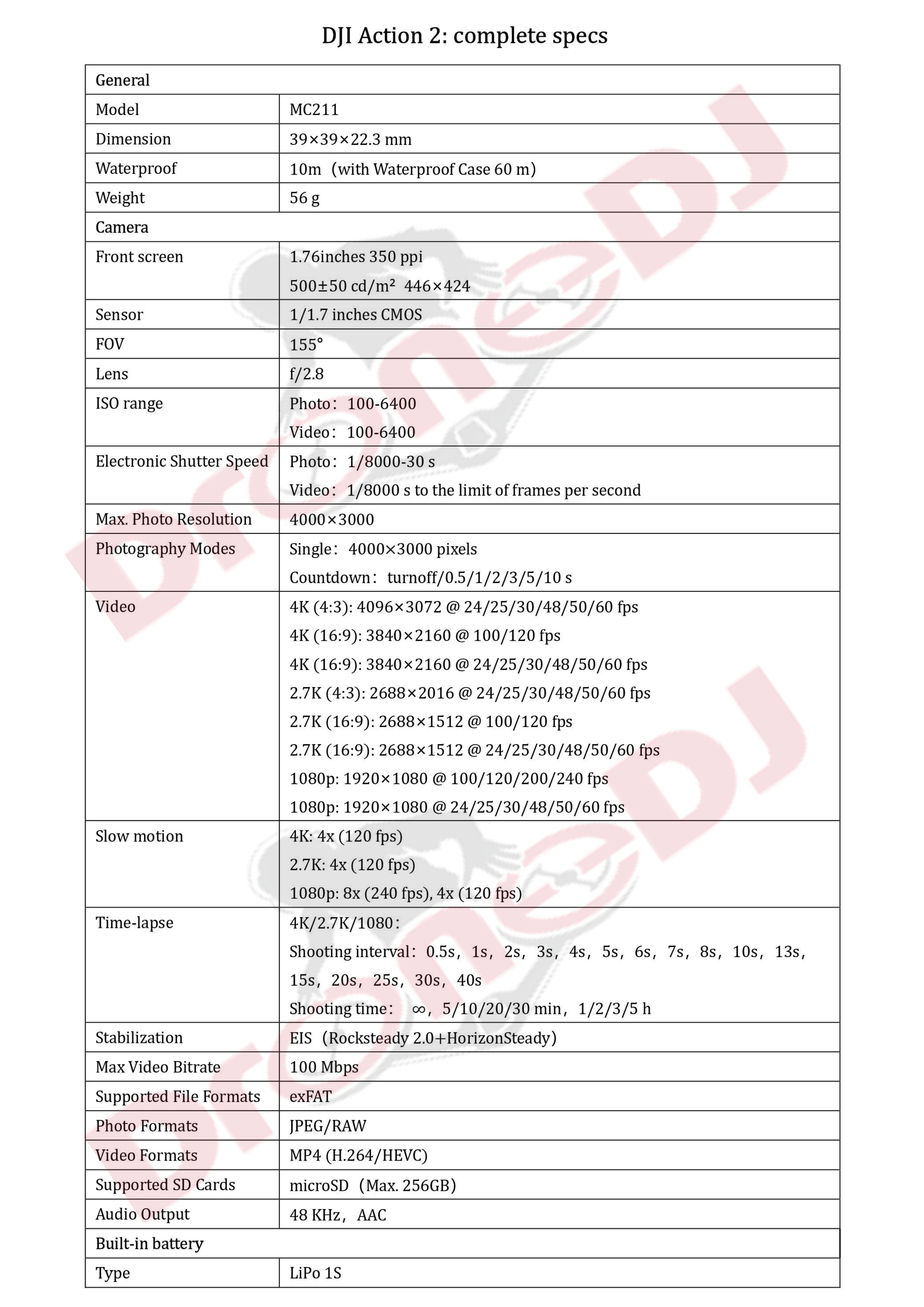

DJI Action 2

In: Cameras

https://dronedj.com/2021/09/24/dji-action-2-camera-specs-leaked/

12MP 1/1.7" sensor, 155 degree FOV, f/2.8, up to 4K 120P, modular design, RockSteady 2 and HorizonSteady.

-

DJI Mavic 3

In: Cameras

https://www.theverge.com/2021/9/23/22690821/dji-mavic-pro-drone-leak-twin-cameras-four-thirds

20MP M4/3 main sensor. 24mm equivalent, f/2.8-f/11.

12MP 1/2" secondary sensor. 160mm equivalent, f/4.4.

Mavic 3 Pro and Mavic 3 Cine (built-in 1TB SSD).

-

https://dronedj.com/2021/09/17/autel-evo-iii-specs-photo-release/

EVO III

Maximum flight time 38-40 minutes and dual-band HD image transmission with up to 10 km flight range. Fisheye lens for omnidirectional obstacle avoidance.

EVO III (basic) with 1-inch CMOS sensor

EVO III Pro with 4/3-inch CMOS sensor

EVO III Zoom with 8K dual camera and 10x fusion zoom

EVO III Supersense with 1.4-inch CMOS sensor

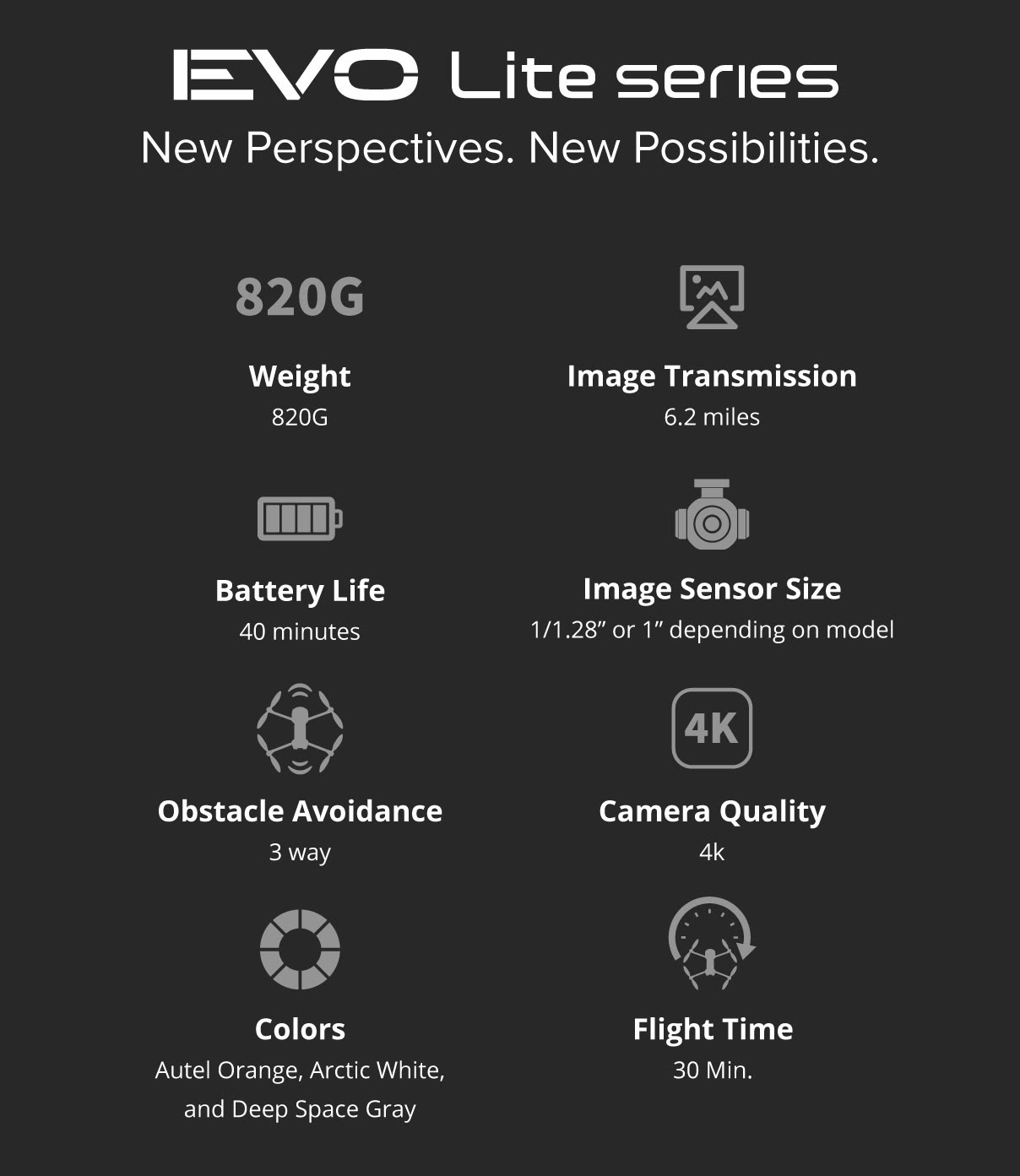

EVO Lite

Maximum flight time 40 minutes with up to 10 km flight range. Forward, backward and downward obstacle avoidance. 4K60P on all models. One-click vertical shooting mode.

EVO Lite with 1/1.28-inch CMOS sensor

EVO Lite Plus with 1-inch CMOS sensor

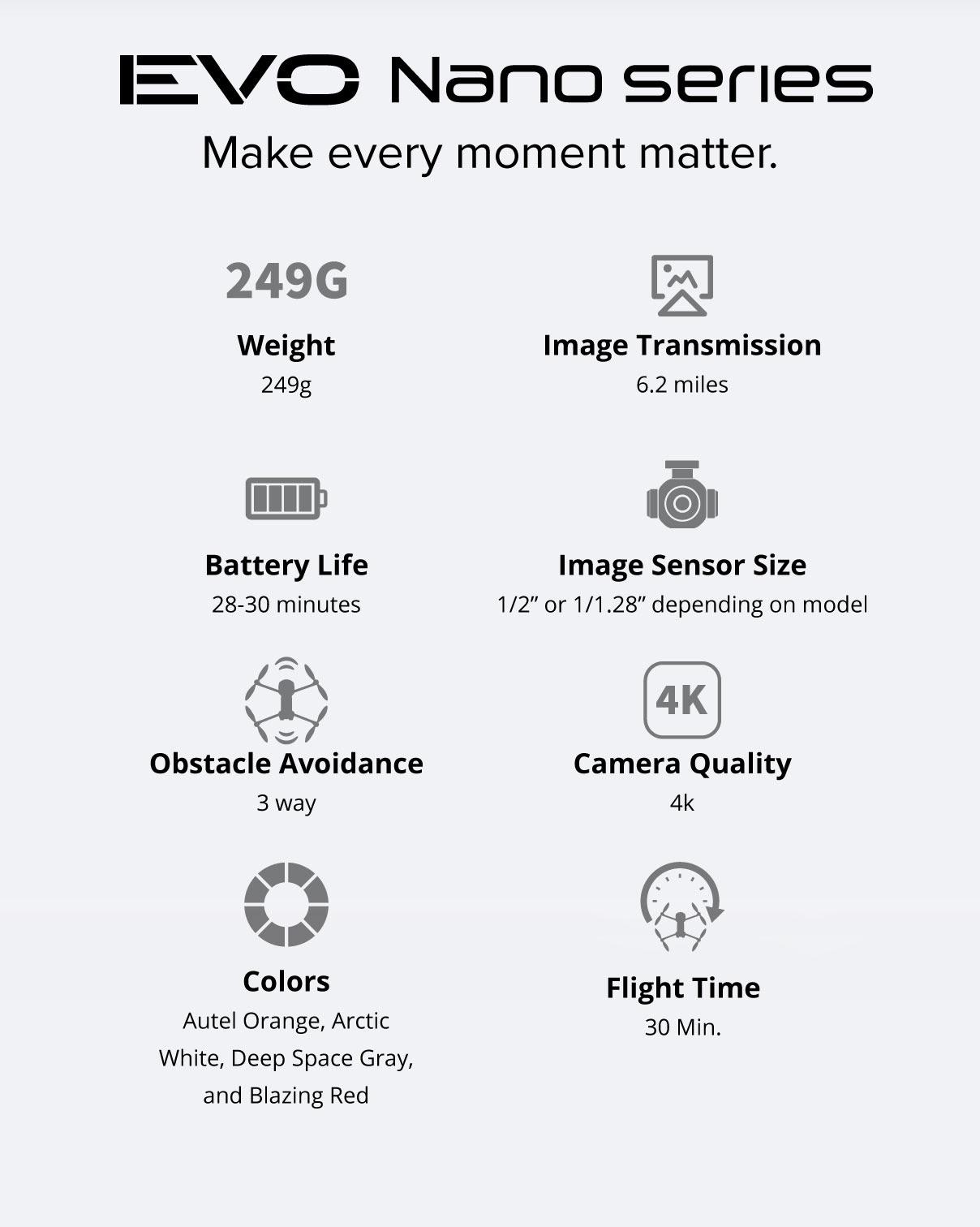

EVO Mini

Under 250g. Maximum flight time 30 minutes with up to 10 km flight range.

EVO Mini without forward, backward and downward obstacle avoidance

EVO Mini Plus with forward, backward and downward obstacle avoidance

-

You forgot to mention ProRes codec support for up to 4K 30P on the Pro model.

Now Apple just need to disable sharpening and noise reduction.

-

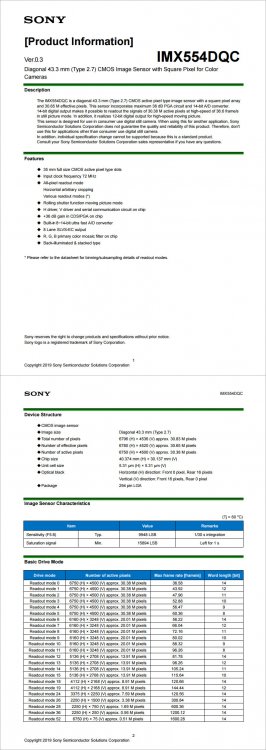

It's important to distinguish rolling shutter in photo mode (assisted by DRAM) from rolling shutter in video mode (direct ADC IO).

In photo mode, the R3 has an electronic shutter flash sync speed of 1/180s at 14bit, indicating a sensor readout speed higher than that. (The A1's e-flash sync is 1/200s and its sensor readout is 1/250s at 14bit.) This aligns with the official claim that the rolling shutter is about 1/4 of the 1DX III's (1/60s at 14bit).
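A quick sanity check of those photo-mode numbers, as a rough sketch only. The first part uses just the figures quoted above. The second part assumes the R3 keeps the same sync-to-readout margin as the A1, which is a guess and not something Canon has stated.

```python
from fractions import Fraction

# Figures quoted in the post (14-bit photo mode).
r3_flash_sync = Fraction(1, 180)   # R3 electronic-shutter flash sync
readout_1dx3 = Fraction(1, 60)     # 1DX III sensor readout
claimed_ratio = Fraction(1, 4)     # "about 1/4 of 1DX III"

# Readout time implied by the official claim: 1/60 * 1/4 = 1/240 s.
r3_readout_claimed = readout_1dx3 * claimed_ratio

# Flash sync needs the whole frame exposed at once, so the readout
# must be at least as fast as the sync speed.
consistent = r3_readout_claimed <= r3_flash_sync

# ASSUMPTION (not from the post): the R3 has the same sync/readout
# margin as the A1 (1/200 s sync vs 1/250 s readout).
a1_margin = Fraction(1, 200) / Fraction(1, 250)   # 5/4
r3_readout_estimate = r3_flash_sync / a1_margin   # 1/225 s

print(f"readout implied by the 1/4 claim: 1/{r3_readout_claimed.denominator} s")
print(f"consistent with 1/180 s flash sync: {consistent}")
print(f"estimate using the A1 margin: 1/{r3_readout_estimate.denominator} s")
```

Both routes land between 1/225s and 1/240s, so the flash sync figure and the "1/4 of 1DX III" claim agree with each other.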

In video mode, the full sensor has a readout speed of 1/50s at 12bit, which enables a 16:9 crop of 6K at 60fps.
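The step from 1/50s full-sensor readout to a 60fps 16:9 crop can be sketched as below. It assumes a native 3:2 sensor, a full-width crop, and readout time that scales linearly with the number of rows read. Those are simplifications for illustration, not published specs.

```python
# Figure quoted in the post (12-bit video mode).
full_readout_s = 1 / 50

# ASSUMPTIONS: native 3:2 sensor, full-width 16:9 crop,
# readout time proportional to the number of rows read.
sensor_aspect = 3 / 2
crop_aspect = 16 / 9

# Fraction of the sensor's rows that a full-width 16:9 crop keeps (27/32).
row_fraction = sensor_aspect / crop_aspect

crop_readout_s = full_readout_s * row_fraction
max_fps = 1 / crop_readout_s

print(f"rows used by the 16:9 crop: {row_fraction:.1%}")
print(f"crop readout time: {crop_readout_s * 1000:.1f} ms")
print(f"frame rate ceiling: {max_fps:.1f} fps")
```

Under these assumptions the ceiling comes out just under 60fps, which fits if the quoted 1/50s is a rounded figure.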

-

Official announcement on 15 September. Pricing 539.99EUR without GoPro Cloud annual subscription.

Other highlights:

Higher refresh rate front display.

Faster touch operation and shutter release.

Higher quality live stream video.

Full compatibility with Hero 9 accessories.

Improved low light image quality.

Better horizon alignment with higher slope limit.

New hydrophobic and anti-reflection lens coating.

-

2 hours ago, KnightsFan said:

GoPro makes a camera that records 4k60 for hours on end while enclosed in a waterproof case with no external heatsinks, at under half the weight of the Ninja V. I'm sure it's quite possible to build a screenless recorder smaller than the Ninja V.

😂

Does GoPro record ProRes HQ or RAW?

H.264 and HEVC codecs can be heavily hardware accelerated using off-the-shelf chips (GoPro branded theirs GP1).

Chips to encode ProRes have to be built from scratch (essentially a CPU), hence the size and thermal requirement.

-

I've seen a Ninja V taken apart and from the size of the chip and heatsink/fan, it really can't be much smaller.

-

DJI Mavic 3

In: Cameras

New collision avoidance system, side sensors removed, front and rear sensors shifted to corners and apparently wide angle

Hasselblad dual camera: top with 7x optical zoom, lower 5.7K 24mm f/2.8, 16mm wide-angle adapter included

Apple ProRes format support

1TB internal memory

More than 40min flight time

5000 or 6000mAh Li-ion battery

Battery mounts from the back

Mini 2 style gimbal now has integrated automatic lock

Will use the same radio as the Air 2S

New smart controller

-

With the resolution/framerate and amount of motion involved with those devices, they are in need of a codec/bitrate overhaul, or you always end up with a heavily compressed, blotchy mess that can't hold up in post.

-

https://winfuture.de/news,124840.html

New sensor for 23MP photo capture

New GP2 processor

5.3K 60P, 4K 120P, 2.7K 240P

HyperSmooth 4.0
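For a rough sense of what those three video modes ask of the processor, here is a pixel-throughput comparison. The frame sizes are assumptions: they are the usual 16:9 dimensions behind the "5.3K", "4K" and "2.7K" labels, not figures from the leak.

```python
# (label, width, height, fps). Frame sizes are ASSUMED nominal 16:9
# dimensions for each label; only the labels and frame rates are from the leak.
modes = [
    ("5.3K 60P", 5312, 2988, 60),
    ("4K 120P", 3840, 2160, 120),
    ("2.7K 240P", 2704, 1520, 240),
]

# Pixels per second that the sensor readout and encoder must sustain.
throughput = {label: w * h * fps for label, w, h, fps in modes}

for label, pixels_per_s in throughput.items():
    print(f"{label}: {pixels_per_s / 1e9:.2f} Gpx/s")
```

With these assumed frame sizes, all three modes land within a few percent of 1 gigapixel per second, which would be consistent with a single throughput ceiling for the new processor.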

-

is at the final stages of development and will be announced soon.

One exciting feature I can share is that the dynamic range is rated at 16 stops (actual ARRI stops, so 2 stops higher than current ALEXA sensor).

-

1 hour ago, Emanuel said:

Thanks : ) Any idea on the "manual exposure" mode? How much is it... "adjustable"? Any control on the shutter speed? Any flicker-free settings available or is the glass half empty after all?

There's no real manual exposure, you have the option to adjust exposure compensation, that's it.

If you want 4K 120fps full manual exposure, Xperia 1 III with Cinema Pro app is the only option.

-

2 minutes ago, Andrew Reid said:

There is nothing on the press release site Samsung Tomorrow about the new sensor and the photo @androidlad uses is of the old sensor... I did a google reverse image search on it.

The photo is of the original S5KVB2 announced in 2014, you don't even have to reverse image search it, the URL is right there.

Samsung haven't announced this refresh yet.

-

X-H2 to debut X-Trans V - a dual aspect 3.2um pixel size stacked sensor.

7332x4888, up to 96fps readout at 12bit (3:2)

7680x4320, up to 110fps readout at 12bit (16:9)
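To see what "dual aspect" means in physical terms, the sketch below turns the quoted pixel counts and 3.2um pitch into sensor dimensions and a raw readout rate. The data rate is a simple width x height x fps x bit depth product, ignoring blanking, overhead and any compression, so treat it as a ballpark.

```python
PIXEL_PITCH_UM = 3.2   # pixel size quoted in the post
BIT_DEPTH = 12         # readout bit depth quoted in the post

# aspect label -> (width px, height px, max readout fps), all from the post
modes = {
    "3:2": (7332, 4888, 96),
    "16:9": (7680, 4320, 110),
}

def describe(width, height, fps):
    """Return (width mm, height mm, megapixels, raw Gbit/s) for one mode."""
    width_mm = width * PIXEL_PITCH_UM / 1000
    height_mm = height * PIXEL_PITCH_UM / 1000
    megapixels = width * height / 1e6
    # Simplification: raw pixel data only, no blanking or protocol overhead.
    gbit_per_s = width * height * fps * BIT_DEPTH / 1e9
    return width_mm, height_mm, megapixels, gbit_per_s

for name, (w, h, fps) in modes.items():
    w_mm, h_mm, mp, rate = describe(w, h, fps)
    print(f"{name}: {w_mm:.2f} x {h_mm:.2f} mm, {mp:.1f} MP, {rate:.1f} Gbit/s")
```

The 16:9 mode comes out wider than the 3:2 mode (about 24.6mm against 23.5mm), so neither readout area is a plain crop of the other.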

-

6 hours ago, PDerrins2020 said:

Thanks for your reply Androidlad, the a7s3 is nice, looking for something a bit smaller with no screen or EVF and with a better environmental rating, any suggestions?

have a great weekend

FX3

New Autel Drones - EVO III, EVO Lite & EVO Mini

In: Cameras

Lite and Nano officially announced

https://dronedj.com/2021/09/28/autel-evo-nano-plus-price-specs-availability/

https://dronedj.com/2021/09/28/autels-mid-range-drone-series-evo-lite-starts-at-1149/

Nano starting price $649

Lite starting price $1149