androidlad

Posts: 1,213


Posts posted by androidlad

-

-

-

28 minutes ago, Caleb Genheimer said:

Any mention of open gate video?

Not yet, but it's capable of outputting 8256 x 5504 3:2 open gate at up to 50fps.

-

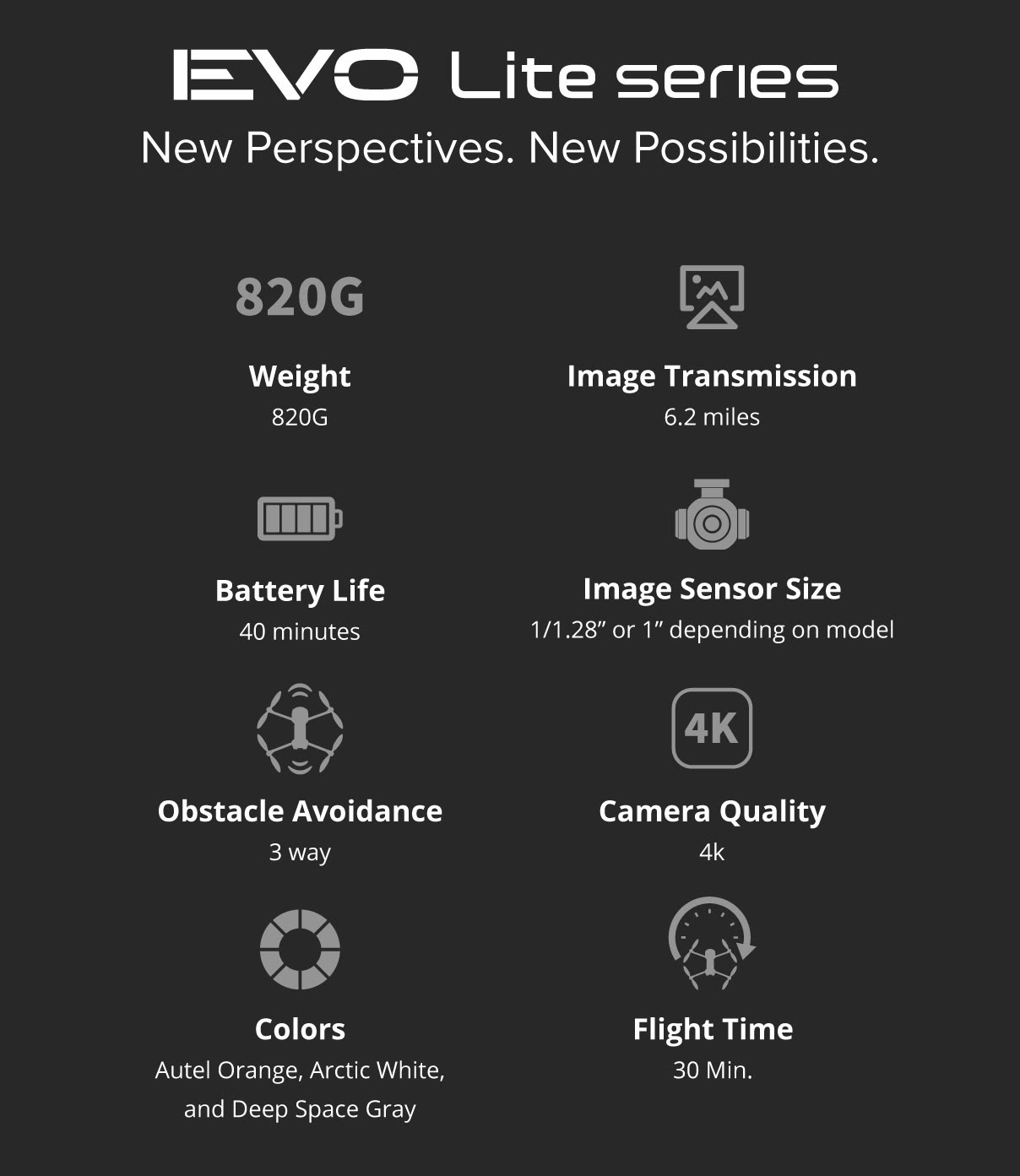

DJI Mavic 3

In: Cameras

DJI Mavic 3 Prices

Chinese prices were leaked via OsitaLV today

¥13,888 for Mavic 3

¥17,688 for fly-more combo

¥32,888 for Mavic 3 Cine

At 6.41 Yuan to the dollar, the rough US equivalents are:

$2299USD for Mavic 3

$2799USD for fly-more combo

$5199USD for Mavic 3 Cine
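For anyone who wants to redo the conversion, here is a minimal sketch using the leaked yuan prices and the 6.41 rate quoted above. The function name `cny_to_usd` is just an illustrative helper. A straight division comes out somewhat lower than the figures listed above, which look rounded to retail-style price points (that reading is an assumption, not something the leak states).

```python
# Exchange rate quoted in the post (yuan per US dollar).
CNY_PER_USD = 6.41

# Leaked Chinese prices, in yuan.
leaked_cny = {
    "Mavic 3": 13888,
    "Fly-more combo": 17688,
    "Mavic 3 Cine": 32888,
}


def cny_to_usd(cny, rate=CNY_PER_USD):
    """Straight currency conversion, rounded to the nearest dollar."""
    return round(cny / rate)


for name, cny in leaked_cny.items():
    print(f"{name}: ¥{cny:,} is roughly ${cny_to_usd(cny):,} before tax or regional pricing")
```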


-

DJI Action 2

In: Cameras

Some reviews have revealed a serious flaw - overheating.

In a 25°C ambient environment, even 1080 30P overheats after 30 minutes, and 4K 60P overheats after just 5 minutes.

-

-

10 hours ago, IronFilm said:

I'd assume/hope you could have a 4K/24 subsampled with 7.8ms? That would be incredible! Better than ARRI in this regard.

Alexa LF is actually 7.4ms so it's technically slightly better than Z9.

Pixel-binned 4K 24 to 60P may be added in a future firmware update, along with other frame rates such as 24.00P and 48P.

-

-

4 minutes ago, Eric Calabros said:

Of course there is. Your camera is blind to the events happening "between the frames".

There isn't, because there is an infinite number of frames in a second.

"Frame" and units of measurement for time are all human constructs. Time is a continuum.

We can use a high shutter speed to freeze motion at a particular point in time (the higher the speed, the sharper fast motion looks), and a high frame rate to capture a particular sequence of motion.

-

3 minutes ago, Eric Calabros said:

And you only cover 3% of a second with that combination of fps and shutter speed.

There's no such thing as "percentage of a second".

You freeze one to 120 "moments" in a second.

-

1 hour ago, Eric Calabros said:

120 fps is probably a 2x2 binned raw converted to HEIF. Nikon has done that before; their binned raws in D850 were soft, had some artifacts (only visible when zoomed in), but were clean in high ISO. Enough quality for social media.

What most people forget about capturing the right moment with spray and pray is the shutter speed. If you need 1/1000s to freeze a moving subject, even at 120fps you only get 120 slices of one millisecond each. So you only cover 12% of a second!

The 120fps feature is a DX crop with further subsampling.

120fps is not the shutter speed; you can shoot 120fps with a 1/4000s shutter speed and every frame will be pin sharp.
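For reference, the 12% and 3% figures quoted in this exchange both come from multiplying the frame rate by the exposure time of each frame. A minimal sketch of that arithmetic (the function name `exposure_coverage` is made up for illustration, and it assumes every frame uses the same shutter speed):

```python
from fractions import Fraction


def exposure_coverage(fps, shutter_s):
    """Fraction of each second during which the shutter is open.

    fps       -- frames captured per second
    shutter_s -- exposure time of one frame, in seconds
    """
    return fps * shutter_s


# 120fps at 1/1000s: 120 exposures of one millisecond each.
print(float(exposure_coverage(120, Fraction(1, 1000))))  # 0.12

# 120fps at 1/4000s: shorter exposures, so less of the second is exposed.
print(float(exposure_coverage(120, Fraction(1, 4000))))  # 0.03
```

Whether that number is a useful way to think about catching the moment is exactly what the posts above disagree on; the sketch only shows where the percentages come from.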

-


DJI Mavic 3

In: Cameras

All video specs leaked in full:

https://winfuture.de/news,126006.html

20MP 4/3" 24mm f/2.8 main camera

12MP 1/2" 160mm f/4.4 telephoto camera

5280x3956 stills resolution

5120x2880 up to 50P

4K up to 120P

1080 up to 200P

4K up to 30P on telephoto camera

HEVC codec bitrate up to 200Mbps

ProRes HQ support on the Cine model on main camera


-

55 minutes ago, Amazeballs said:

Now if someone could hack Sony firmware and make a sort of Magic Lantern... that would unlock very interesting possibilities, including 48MP stills on A7S3

Binning design would actually explain why A74 has better lowlight than A7S3

Remember this is Quad Bayer; the equivalent Bayer resolution of a 48MP Quad Bayer sensor is about 38MP.

The real implication is single exposure HDR.

-

-

-

8K 60P is internal but will come as a firmware update after shipping.

-

Resolution 8208x5472, pixel size 4.386μm, high dynamic range mode readout time 2ms, high speed mode readout time 1ms, same readout speed for both video and stills, double stacked back illuminated CMOS sensor


-

-


DJI Mavic 3

In: Cameras

Official announcement date November 5

-

Sony A7 IV

In: Cameras

-

8K60P, 4K120P RAW


-

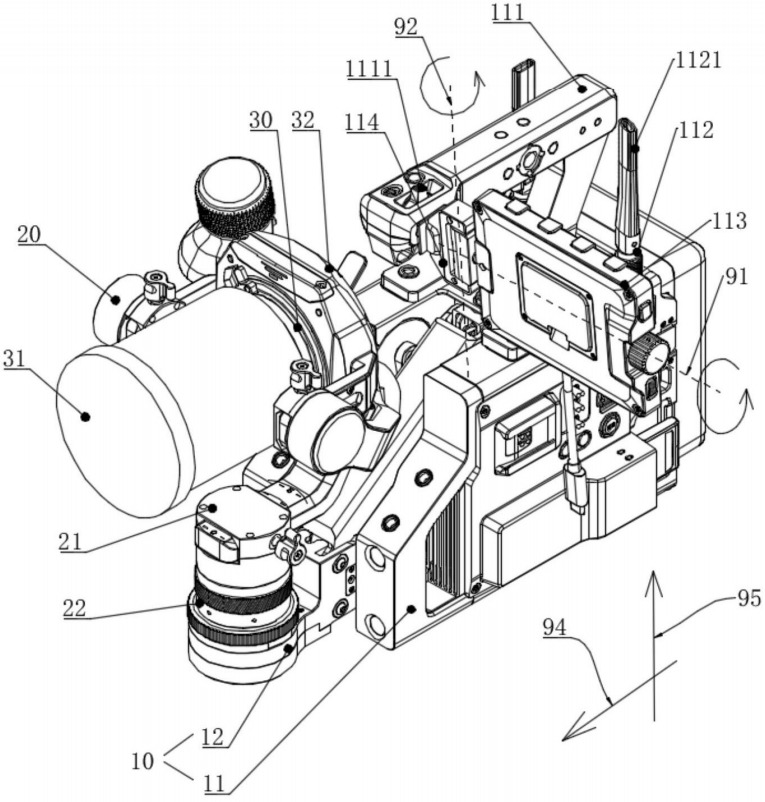

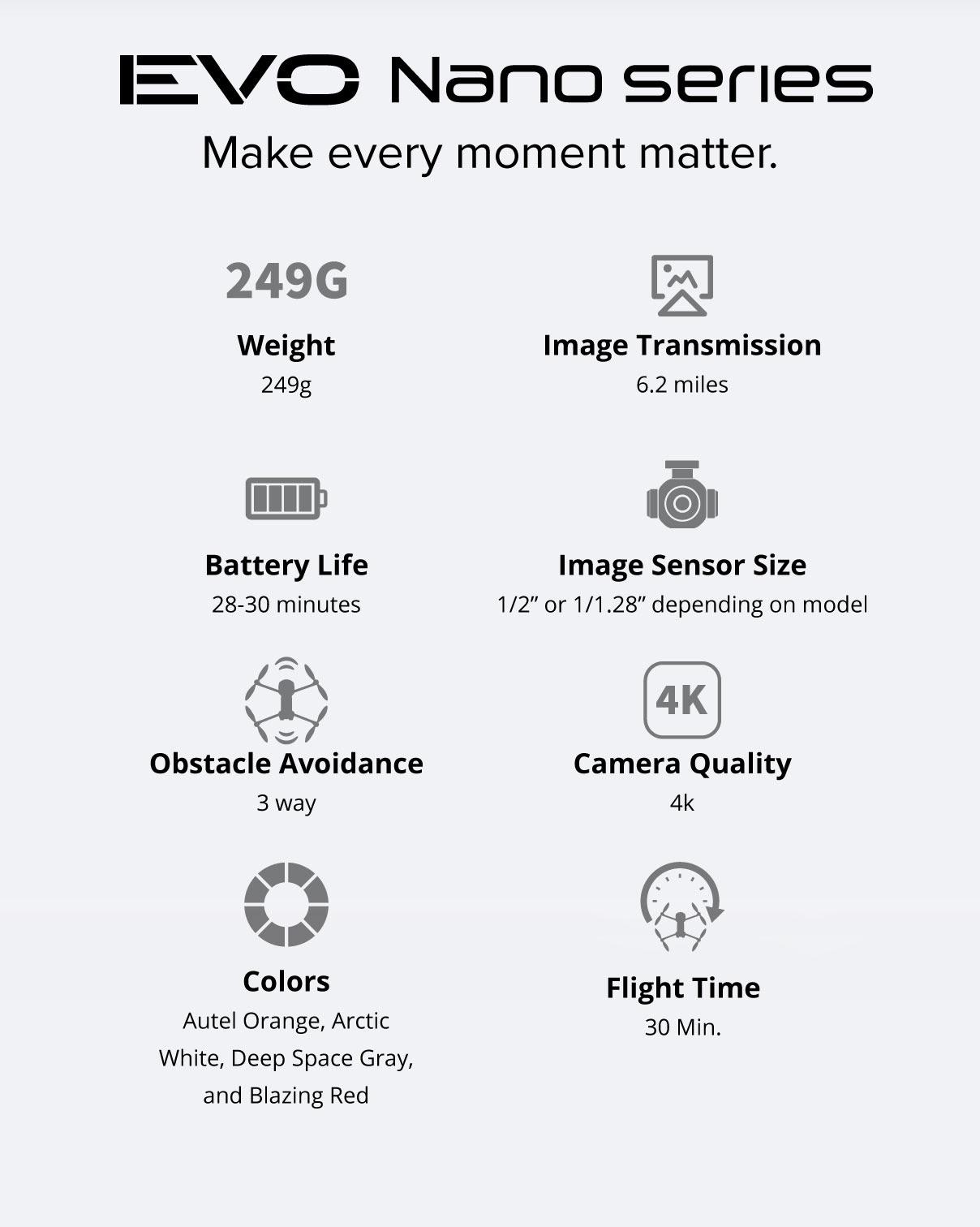

Lite and Nano officially announced

https://dronedj.com/2021/09/28/autel-evo-nano-plus-price-specs-availability/

https://dronedj.com/2021/09/28/autels-mid-range-drone-series-evo-lite-starts-at-1149/

Nano starting price $649

Lite starting price $1149

-

Nikon Z9 / Firmware 2.0 Official Topic

In: Cameras


It's not the same sensor as A1. The Z9 sensor was made to Nikon's spec but with Sony Semicon's DBI technology.