Posts posted by kye

-

14 hours ago, Tim Sewell said:

I was so focussed on getting everything 'right' that I didn't pay enough attention to getting it good or interesting.

So I'm going to reshoot and one of the things I'm going to do is lean in to the limitations I have - first among which is that I'm doing this almost completely alone. I am both crew and talent! The biggest limitation caused by that is that camera movement while I'm on screen is not going to happen, which means that to create interest I need to make my angles and composition more interesting.

Firstly, it's awesome that you're actually shooting something! And the fact that you're on-screen is next level above that even, so in my book you're already successful 🙂

I'm not sure what you're shooting, and therefore what making it good / interesting really means in your context, but here are a few random thoughts:

- I normally screw things up the first (few/many) times I do something, so just chalk it up to a practice shoot and keep going

- If you're able to get a technically competent capture then you can really change the look in post, so I'd suggest just covering the fundamentals

- The success or failure of a film depends 97% on what is in the frame (the remaining 3% being 2% sound and 1% image), so that's where your attention should be going

- Is there a way you can separate the various tasks in your mind while you're shooting? For example, maybe you put in a full battery, an empty memory card, and then completely forget the camera exists and just roll as you do 20 takes of the scene from that angle, focusing on your performance and emotional aspects while you're doing this?

- If there are small errors in continuity or performance there is always the option to just include them and make it a more stylised final film. For example, if things cut funny or jump around a bit etc, and you lean into it in the edit, then the impression might be that the character might not quite be in control of all their faculties, or might be drunk or on drugs, etc. Obviously I have no idea what the context is, so this might not fit your vision, but it might give you options where previously you didn't see a way forward or just couldn't get excited about the material

- If you haven't storyboarded or done detailed planning, one thing you could do is to pre-shoot the whole thing without lighting or performances etc, and then just edit it together in the NLE, and treat that as a moving storyboard. This would let you anticipate any issues with any camera angles and get a feel for the pacing, and if you decide to cut an angle or part of the film then you can skip filming it altogether.

Best of luck and keep us updated - we are definitely interested in hearing how you get on!

-

8 hours ago, eatstoomuchjam said:

Another factor for overheating can be the processor in the camera. The Z Cam E2 series are known for running very well even in fairly extreme conditions (though other components such as memory cards, SSD's, monitors, etc overheat). This is partly because of proper cooling/lack of IBIS, but also because a lot of image functions in the camera are done with ASIC vs the FPGA that some of the overheating cameras use. It has the drawback that Z Cam can't implement some changes (at least not without releasing a whole new controller board), but has big advantages for cooling and power use (can run for hours on an NP-F550).

I guess they added a cooling fan to the new F6 Pro - so maybe they changed processors for it or they decided to cool the memory card, etc.

Interesting comments about the ASIC vs FPGA, and the trade-offs of not being able to add new features via updates. I guess everything comes with benefits and drawbacks.

4 hours ago, EduPortas said:

You're totally right, friend. That makes all the diff in the world for such a small device.

The sad thing is that the more that people lower their expectations about stuff like this, the more the manufacturers will take advantage of it. In economics the phrase is "what the market will bear".

Dictionary definition: https://idioms.thefreedictionary.com/charge+what+the+market+will+bare

Quote: You should charge as much money for a product or service as customers are willing to pay.

Quote from HBR: https://hbr.org/2012/09/how-to-find-out-what-customers-will-pay

Quote: The right answer to that question is a company should charge “what the market will bear” — in other words, the highest price that customers will pay.

The HBR article goes on to talk about various strategies for setting pricing, but one element is common - it's about the customers' perceptions and their willingness to pay.

The more we normalise products overheating, having crippled functionality, endlessly increasing resolution but not fixing the existing pain points in their product lines, the more they will do it.

-

9 hours ago, SRV1981 said:

If you’re struggling to comprehend DR I’m hopeless! 😂

so it seems I’ll rely on some other metrics as I’ll be baking images in with LUT in camera and not pushing in post. That may open up options for me as to what body I can use. Portability and compact is a prime start for me.

I suggest you start with the finished edit and work backwards. Your end goal is to create a certain type of content with a certain type of look. This will best be achieved using a certain type of shooting and a certain type of equipment that makes this easier and faster. Then look at options for lenses across the crop-factors, then choose your format/sensor-size, then the camera body.

-

53 minutes ago, sanveer said:

There may be a small series of glitches with the dynamic range test chart. It cannot make out the difference between overbaked images and actual dynamic range very clearly. All smartphone images are way too processed. And the excessive noise reduction and over-sharpening seems to make the image very limited for post work. Apple has clearly figured out to fake results on the test chart. Much like some smartphone companies having higher results on the SoC testing apps.

The difference between total visible stops at SNR 1 and usable ones at SNR 2 seems to suggest good headroom, especially when the codec is high bitrate and with good bit depth (at least 10-bit 4-2-2?). When SNR 1 and SNR 2 are similar it's difficult to see whether the image is way too baked in to recover any more than the visible image shows.

I guess to reply directly to your comments, yes, the DR testing algorithms seem to be quite gullible and "hackable", and I'd agree that Apple has likely exploited this specifically for headlines.

None of the measurements in the charts really map directly to how usable I think the image would be in real projects. I haven't read the technical documents by Imatest, but if I was going to look into it more I think that would be a good idea, so you'd know what is actually being measured.

-

51 minutes ago, sanveer said:

There may be a small series of glitches with the dynamic range test chart. It cannot make out the difference between overbaked images and actual dynamic range very clearly. All smartphone images are way too processed. And the excessive noise reduction and over-sharpening seems to make the image very limited for post work. Apple has clearly figured out to fake results on the test chart. Much like some smartphone companies having higher results on the SoC testing apps.

The difference between total visible stops at SNR 1 and usable ones at SNR 2 seems to suggest good headroom, especially when the codec is high bitrate and with good bit depth (at least 10-bit 4-2-2?). When SNR 1 and SNR 2 are similar it's difficult to see whether the image is way too baked in to recover any more than the visible image shows.

The more I read about DR, the more I realise I don't understand it.

I mean, the idea is pretty simple - how much brighter is the brightest change it can detect compared to the darkest change it can detect, but that has a lot of assumptions in it when you want to apply it to the real-world.
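To put a number on that simple idea, here is a minimal sketch: DR in stops is the base-2 log of the ratio between the clipping level and the darkest level that still clears some signal-to-noise threshold. The signal levels below are made-up illustrative values, and this is not how any particular test lab computes its figures. It does show why anything that lowers the measured noise floor (heavy noise reduction included) raises the headline number.

```python
import math

def dynamic_range_stops(clip_level, noise_floor, snr_threshold=1.0):
    """Stops between clipping and the darkest level where signal/noise >= snr_threshold."""
    darkest_usable = noise_floor * snr_threshold
    return math.log2(clip_level / darkest_usable)

# Hypothetical sensor: clips at 16000 units with a noise floor of 2 units.
dr_snr1 = dynamic_range_stops(16000, 2, snr_threshold=1.0)
dr_snr2 = dynamic_range_stops(16000, 2, snr_threshold=2.0)

# Same sensor, but noise reduction halves the measured noise floor.
dr_after_nr = dynamic_range_stops(16000, 1, snr_threshold=1.0)

print(f"SNR=1: {dr_snr1:.2f} stops, SNR=2: {dr_snr2:.2f} stops")
print(f"SNR=1 after halving the noise floor: {dr_after_nr:.2f} stops")
```

In this toy model, doubling the SNR threshold costs exactly one stop, and halving the noise floor gains exactly one stop, regardless of whether the extra stop is actually usable in a grade.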

I have essentially given up on DR figures. Firstly it's because my camera choice has moved away from being based on image quality and into the quality of the final edit I can make with it, but even if I was comparing the stats I'd be looking at latitude.

Specifically, I'd be looking at how many stops under still look broadly usable, and I'd also be looking at the tail of the histogram and comparing the lowest stops:

- how many are separate to the noise floor (where the noise in that stop doesn't touch the noise floor)

- how many are visible above the noise floor (but the noise in that stop touches the noise floor)

- how many are visible within the noise floor (the noise from the stop is visible above the noise floor)

- how high up is the noise floor

In the real world, you will be adding a shadow rolloff, so any noise will be pretty dramatically compressed, so it really comes down to the overall level of the noise floor (which will tell you how much quantisation the file will have SOOC and how much you can push it around) and how much noise there is in the lowest regions, which will give a feel for what the shadows look like. You can always apply a subtle NR to bring it under control, and if it's chroma noise then it doesn't matter much because you're likely going to desaturate the shadows and highlights anyway.

The only time you will really see those lowest stops is if you're pulling up some dark objects in the image to be visible, but this is a rare case and with more than 12 or 13 stops you're most likely still pushing down the last couple of stops into a shadow rolloff anyway, so it's just down to the tint of the overall image. Think about the latitude tests and how most cameras are fine 3-stops down, some are good further than that - how often are you going to be pulling something out of the shadows by three whole stops?? That's pretty radical! Most likely you're just grading so that a very contrasty scene can be balanced so that the higher DR fits within the 709 output, but you'd be matching highlight and shadow rolloffs in order to match the shots to the grade on the other shots, so your last few stops would still be in the shadows and can be heavily processed if required.

-

3 hours ago, EduPortas said:

Hmmm. Not really comparable since the BMMCC has no LCD or EVF.

It's a cube that functions as a heatsink and makes huge ergonomic sacrifices vs any decent MILC or DSLR.

The entire answer is that it has a fan, despite being tiny. It doesn't need to act as a heatsink because it has a fan, and if the BMMCC can have a fan, then any small camera can have a fan.

Do you think that adding a screen and EVF and a handle to the BMMCC would take it from shooting for 24 hours straight in 120F / 48C to overheating in air-conditioning in under an hour? Of course not, because.. it has a fan!

It was also considerably cheaper than many/all of the cameras being discussed here.

But it's not even just about having a fan.. even without one, there are cameras with much better thermal controls around.

I routinely shoot in very hot conditions (35-40+C / 95-105+F) and have overheated my iPhone, but have never overheated my XC10, my GH5, my OG BMPCC, my BMMCC, or my GX85. All this "it's tiny so it overheats in air-conditioning" just sounds like Stockholm Syndrome to me.

-

2 hours ago, SRV1981 said:

Sarcasm?

11.2 stops is pretty close to the bottom of the list of DR specs that I keep.

Of course, DR specs are a minor aspect of film-making, and not even really indicative of the actual usable dynamic range of the camera (which is better represented by the latitude testing).

For example the iPhone 15 tests as having 13.4 stops of DR, but the latitude test shows that it only has 5 stops of latitude, whereas the a6700 has 8 stops, yet only tests as 11.4 stops of DR.

If you're going to do a dive into the specifications, you really need to understand what they mean when you're actually using the camera for making images. The reason you want high DR is so that you can use those extreme ends of the exposure range - if you can't use them then there's no point in having them and so a big number on a spec sheet is just a meaningless number on a piece of paper.

-

1 hour ago, zerocool22 said:

Sounds great, I wonder how much the AF has improved. (Especially in slowmo or full hd resolution)

I guess once it's released we'll see a new round of strange AF testing from the YT camera gaggle!

-

39 minutes ago, EduPortas said:

Pretty sure the size of the camera is the big culprit here. Sony's tend to be smaller devices and lots of them overheat. This is well established ten years plus after the first A7 came out.

My Z50 gets hotter than a young female American tourist in Cancun during Spring Break while recording just 10-15 minutes of 4K in an indoor setting. Again, it's a smaller device.

So if you want more reliability you're going to have to chose a bigger camera. DSLRs rarely ever overheat. They are bigger units, in general. Most of them have recording limits, yes, but overheating was not a problem just a few years ago. Bigger MILCs like Pannys don't experience this problem.

The BMMCC that recorded for 24 hours straight in 120F / 48C was smaller than almost any other MILC.

There are no excuses.

-

New firmware update just announced: https://www.panasonic.com/global/consumer/lumix/firmware_update.html

Quote: S5II Firmware Version 3.0 / S5IIX Firmware Version 2.0

1. Enhancement of Production Workflows

New Native Camera to Cloud Integration with Adobe’s Frame.io

Compatibility with Frame.io Camera to Cloud is now supported, enabling images and videos to be automatically uploaded, backed up, shared, and worked on jointly via the cloud. Recorded content is sent to the Frame.io platform through an internet connection via Wi-Fi or USB tethering, enabling seamless sharing of captured photos (JPEG/RAW) and Proxy videos. This empowers creators to receive remote real-time feedback during capture and enables collaborative editing among production teams using their preferred creative software. Frame.io Camera to Cloud streamlines the workflow from shooting to editing, enhancing overall efficiency in the creative process.

Proxy Video Recording

This new feature records a low bit-rate proxy file when recording video. Simultaneously recording a proxy file that is linked with the original video recording enabling a faster delivery from production to post.

2. Improved Basic Performance

Real-time Auto-focus Recognition (Animal Eye, Car, Motorcycle Recognition)

The improved real-time auto-focus system enhances the highly accurate Phase Hybrid auto focus of the S5II and S5IIX, efficiently recognizing people amongst multiple subjects. It also features an animal eye recognition function, to focus on and follow animal eyes, as well as a car and motorcycle recognition function, which is ideally suited for shooting motorsports.

Enhanced E.I.S. Performance

In addition to Standard, High mode is newly added to E-Stabilization (Video) function, which electronically corrects large shakes when shooting on the move. A perspective distortion correction has also been added to correct distortion that tends to occur during video shooting when using a wide-angle lens. Combined with Active I.S. Technology, it is now possible to achieve even more stable footage when shooting on the move.

3. Expanding Creative Options

SH Pre-burst Shooting

The newly introduced SH pre-burst shooting function records bursts before shooting begins. When set to the SH PRE mode, the camera begins burst shooting from the moment the user half presses the shutter button, allowing retroactive burst shooting up to the moment the shutter button is pressed down fully.
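For anyone wondering how "retroactive" shooting can work at all, the usual approach to this kind of feature is a ring buffer: frames are continuously captured into a fixed-size buffer while the button is half pressed, and a full press simply keeps whatever is in the buffer. The sketch below is an assumption about the general technique, not Panasonic's implementation; the class name, buffer size, and frame numbers are all hypothetical.

```python
from collections import deque

class PreBurstBuffer:
    """Keeps only the most recent frames captured during a half press."""

    def __init__(self, max_frames):
        # A deque with maxlen silently discards the oldest item when full.
        self._frames = deque(maxlen=max_frames)

    def on_frame(self, frame):
        """Called for every frame captured while the shutter is half pressed."""
        self._frames.append(frame)

    def on_full_press(self):
        """Full press: return the buffered frames, oldest first."""
        return list(self._frames)

# Hypothetical example: a 5-frame buffer, 12 frames arrive before the full press.
buffer = PreBurstBuffer(max_frames=5)
for frame_number in range(1, 13):
    buffer.on_frame(frame_number)

saved = buffer.on_full_press()
print(saved)
```

Only the last five frames before the full press survive, which is why the feature can capture a moment that happened before you reacted to it.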

-

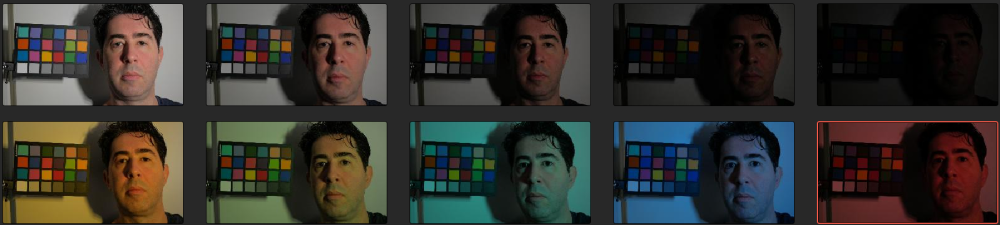

Shot a bunch of test shots, this time with skintones. I shot the usual shots gradually under-exposing, but instead of changing the WB in-camera manually to create the off-WB shots I bought a pack of flash gels and held them in front of the single LED light.

Also, as @John Matthews enquired about skintones, I have just done a first attempt at grading for the skintones rather than the chart. I looked at the chart of course, but considering that people are what the audience is looking at in the image, skintones are vastly more important. So I didn't try to get the greyscale on the test chart perfect, or correct the highlights or shadows perfectly, etc.

The first image is the reference. Those following that are each one-stop darker than the previous one. Then come the gels. The gels are so strong they're essentially a stress test, not how anyone would / should / will be shooting, but it's nice to see where the limits are.

The first set is using only the basic grading controls and no colour management:

The results aren't perfect, but if you're shooting this horrifically then you don't deserve good images anyway! What is interesting is that some images fell straight into line and I felt like I was fighting with the controls on others, but this had no correlation with how large a correction was required. I suspect it's my inexperience and getting lucky on some shots and unlucky on others - sometimes I'd create a new version and reset and have another go and get significantly better (or worse) results than the previous one. No single technique or approach seemed reliable between images.

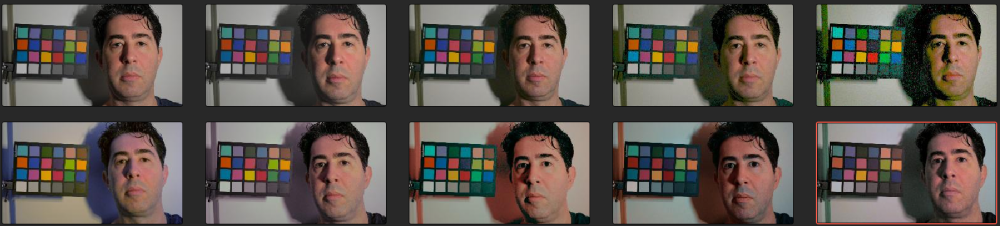

The next set had colour management involved and grading was done in a LOG space:

I also felt this set was very hit and miss in grading.

This next set was a different set of tools again, and I added the Kodak 2383 Print Film Emulation LUT at the end. I did this because any serious colour grading work done in post is likely to be through a 'look' of some kind and the heavier the look is the more it obscures small differences in the source images being fed into it.

Next set was a different set of tools, but keeping the 2383 LUT in the grade:

...and the final set was adding a film-like grade in addition to the Kodak 2383 LUT. I mean, no self-respecting colourist would be using a PFE LUT as the only element of their final look... right?

All in all, I am pretty impressed with how much latitude is in the files, and although lots of the above results aren't the nicest images ever made, if you're shooting anywhere close to correctly then the artefacts after correction are going to be incredibly minor, and if you actually shot something that was 2 stops under and was lit by a single candle using a daylight WB then you'd be pretty freaking happy with the results because you would have been thinking that your film was completely ruined.

This is all even more true if your shots were all shot badly but using the same wrong settings, as the artefacts from the corrections you apply to neutralise the images will be consistent between shots and so will have a common look.

I'm also quite surprised at how the skintones seem to survive much greater abuse than the colour chart - lots of the images are missing one whole side of the colour wheel and yet the skintones just look like you decided on the bleach-bypass look for your film and you actually know what you're doing. The fact that half the colour chart is missing likely won't matter that much because the patches on the colour chart are very strongly saturated and real-life scenes mostly don't contain anything even close to those colours.

I'm still working on this (and in fact am programming my own set of plugins) so there will be more on this to come, especially as now I have a good set of test images.

I think I should find a series of real-world shots that only require a small adjustment so I can demo the small changes required on real projects.

-

To complement Gerald's tech-but-not-filmmaker review, here's a filmmaker-but-not-tech review.

TL;DR: he's also not so impressed, once you consider the price.

-

4 hours ago, SRV1981 said:

Interesting. Thanks for this view! Is it possible that at the same ISO, we’d see this color or DR difference? Meaning the creators are using ISO as the equalizer rather than exposure x/y axis?

Tony Northrup did a video, probably over a decade ago now, comparing ISO between cameras and found that in the same exact situation different cameras give different exposures at the same ISO, up to almost a stop. IIRC his test was meticulous using the same lens etc and he was shooting RAW, so there was no in-camera processing etc.

His conclusion?

It doesn't matter... because of all the reasons people have posted above.

-

47 minutes ago, zlfan said:

I really like ml raw. it bests all of my so called pro video/cinema cameras. if you don't care about xlr, nd, double recording, sdi out, etc. if you don't care about rolling shutter.

The pro cameras are pro because they have XLR, ND, double recording, SDI out, etc.

It's like saying "I really like my Toyota hatchback. it bests all of my so called super-cars and hyper-cars. if you don't care about acceleration, top speed, braking, cornering, cool factor, etc."

🙂

-

26 minutes ago, zlfan said:

I set my epic-x mysterium-x to 5k 60p 2.40 5:1. I used 5k 24p 2.0 3:1. I don't see significant improvement over r1mx 4.5k 24p 2.40 7.5:1.

Yeah. Considering those cameras both have quite high-end images, it's not surprising there wasn't a significant improvement.

Perhaps focus on something other than the SOOC image?

What films do you make?

-

Also, and I cannot emphasise this enough....

The image SOOC is like looking at the negative film SOOC. It's not fit for direct display, it's not meant to be for direct display, and no-one really cares about the differences in the SOOC image unless they appear in the final image.

Think of the post-production process as "developing" the image captured by the camera.

-

7 minutes ago, SRV1981 said:

Cool points but those screenshots and videos are comparing the images with same settings at the same time. There’s distinguishable differences and I find it interesting.

Sure.

But we've known for a long time that different cameras have differences even with the same settings.

If one is brighter and the other is darker, but are basically identical if you adjust the settings to match the image, then does it really matter? Like, if ISO200 on one is the same as ISO250 on the other, how does this impact you making a better film?
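To put that ISO200 vs ISO250 example in perspective, the gap between two ISO values in stops is just the base-2 log of their ratio. A quick sketch (the function name is mine, purely for illustration):

```python
import math

def stops_between(iso_a, iso_b):
    """Exposure difference in stops when going from iso_a to iso_b."""
    return math.log2(iso_b / iso_a)

diff = stops_between(200, 250)
print(f"ISO 200 -> ISO 250 is {diff:.2f} stops")
```

That's about a third of a stop, which is one click of the exposure dial on most cameras and well within what you'd adjust in the grade anyway.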

-

Most camera comparisons are pretty worthless because you almost never compare two cameras in the real world, and even if you do:

- They'll be from different angles, so will have different content

- They'll be colour graded, and matching cameras that shoot LOG is pretty easy

- Things like DR don't matter unless the scene has huge amounts of it, but this is rare these days

- Videos get compressed to within an inch of their lives by the streaming services, so anything subtle will get crunched

- You don't need to get that close in colour in order for people to not notice different camera angles not matching perfectly

- Even if camera angles don't match, it only bothers people if your film is completely crap

- A much more significant impact on your final film will be the features of the camera, as they make a difference on set in terms of efficiency of shooting and your ability to spend any time savings on lighting, directing, etc

Trying to improve your film by concentrating on the minute differences in cameras is like trying to improve your paintings by concentrating on minute differences in your paint brushes.

-

5 hours ago, PannySVHS said:

I buy used all the time. Greatest deal were two LX10, a bit bumped up but perfectly usuable, for 120 Euro, three years ago, from a youtuber with 150.000 subs. One battery only though.:) Another great deal was an og pocket two years ago or so, with the 20mm 1.7 MK II for 200 or 250 Euro. Last summer I got a Lumix G2 and a G3, together for less than 30 Euro. This year no purchase yet, as planned to stay away from gas this year and as stated in another thread.:)

Those are all absolutely KILLER deals... well done!!

-

We all know that cameras produce (sometimes dramatically) different images and image quality, and yet we also know that within a given price range those same cameras typically use the same sensors from Sony and are recording to the same codecs in basically the same bitrates. It's maddening!

So, WTF is going on?

Well, I just came upon this talk below, which goes through and demonstrates some of the inner workings in greater detail than I had previously seen:

It's long, but here's my notes...

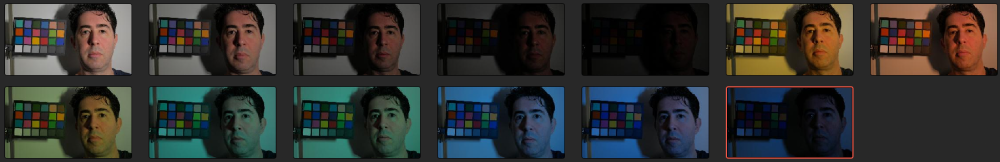

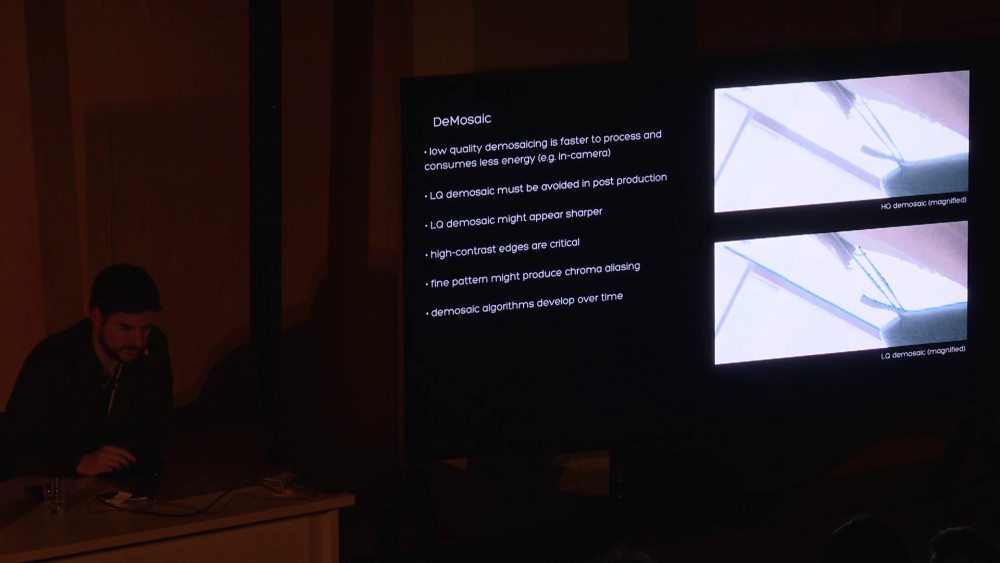

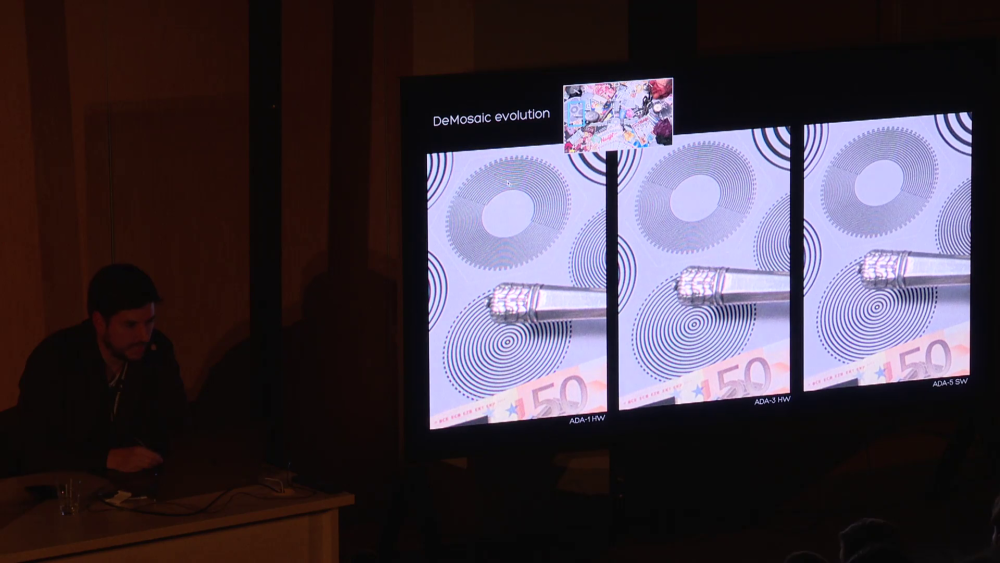

Firstly, how you de-mosaic / de-bayer the image really matters, with some algorithms being higher quality but requiring more processing power:

(Click on the images to zoom in - quality isn't the best but the effects are visible)

If you're not shooting RAW then this will be done in-camera, and if your manufacturer skimped on the processor they put in there, this will be happening to your footage.
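As a toy illustration of the quality-vs-processing trade-off (my own sketch, not anything from the talk, and far simpler than what real cameras do): on a Bayer sensor the green photosites form a checkerboard, so green has to be estimated at every red and blue site. Copying the nearest green sample needs one read per pixel, averaging the four green neighbours needs four reads plus arithmetic. The "scene" here is a hypothetical smooth horizontal ramp, which is a favourable case for the averaging approach; on hard edges both methods produce artefacts.

```python
def scene_green(x, y):
    """True green value of a hypothetical scene: a smooth horizontal ramp."""
    return 10 * x

def is_green_site(x, y):
    """In a Bayer pattern the green photosites form a checkerboard."""
    return (x + y) % 2 == 1

def green_nearest(x, y):
    """Cheap estimate: copy the measured green sample from the left neighbour."""
    return scene_green(x - 1, y)

def green_bilinear(x, y):
    """Costlier estimate: average the four measured green neighbours."""
    neighbours = [(x - 1, y), (x + 1, y), (x, y - 1), (x, y + 1)]
    return sum(scene_green(nx, ny) for nx, ny in neighbours) / 4

def mean_error(interpolate, width=8, height=8):
    """Average absolute error at the interior red/blue sites."""
    errors = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            if not is_green_site(x, y):
                # All four direct neighbours of a red/blue site are green sites,
                # so both estimators only read values the sensor really measured.
                errors.append(abs(interpolate(x, y) - scene_green(x, y)))
    return sum(errors) / len(errors)

nearest_error = mean_error(green_nearest)
bilinear_error = mean_error(green_bilinear)
print(f"nearest: {nearest_error}, bilinear: {bilinear_error}")
```

On this ramp the cheap method is off by a full pixel's worth of gradient everywhere, while the four-neighbour average reconstructs it exactly, at roughly four times the memory reads.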

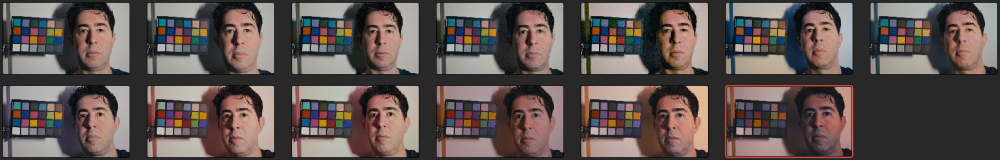

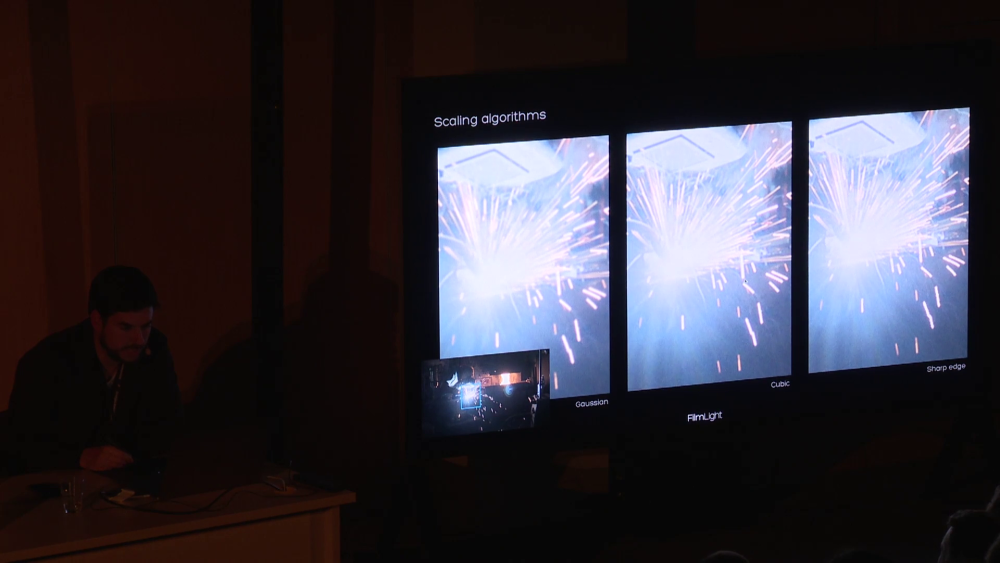

If the camera is scaling the image, then the quality of this matters too:

and if the scaling even gets done in the wrong colour space then it can really screw things up:

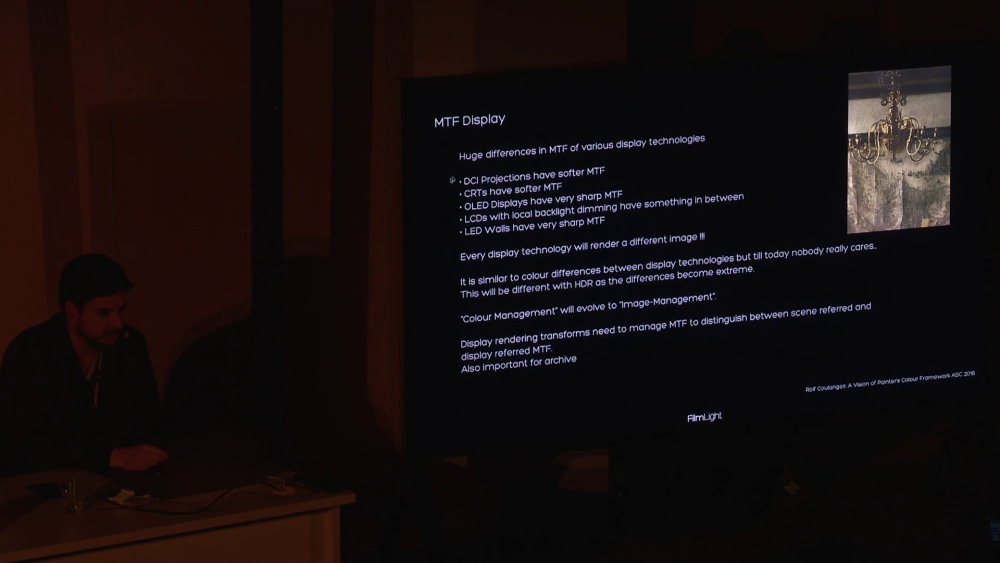
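A small sketch of why the colour space matters when scaling: downscaling is essentially averaging neighbouring pixels, and averaging gamma-encoded values gives a different (darker) result than averaging the actual light and then re-encoding. This assumes an sRGB-style transfer curve purely for illustration; the footage in the talk may well use a different encoding.

```python
def srgb_to_linear(value):
    """Decode an sRGB-encoded value (0..1) to linear light."""
    if value <= 0.04045:
        return value / 12.92
    return ((value + 0.055) / 1.055) ** 2.4

def linear_to_srgb(value):
    """Encode linear light (0..1) with the sRGB transfer curve."""
    if value <= 0.0031308:
        return value * 12.92
    return 1.055 * value ** (1 / 2.4) - 0.055

# Downscale a black pixel and a white pixel into one pixel.
black, white = 0.0, 1.0

# Wrong: average the encoded values directly.
gamma_average = (black + white) / 2

# Right: decode to linear light, average, then re-encode.
linear_average = linear_to_srgb((srgb_to_linear(black) + srgb_to_linear(white)) / 2)

print(f"averaged in encoded space: {gamma_average:.3f}")
print(f"averaged in linear light:  {linear_average:.3f}")
```

The gap is largest on high-contrast fine detail, which is exactly where scaling errors show up as darkened edges and crunchy texture.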

There's more discussions in there, especially around colour science which has been discussed to death, but I thought these might be illuminating as it's not something we get to see that much because it's buried in the camera and typically it's not something we can easily play with in the NLE.

All these add up to a fundamental principle that I have been gradually gravitating towards.

If you're shooting non-RAW then shoot in the highest resolution you can shoot in, and just un-sharpen (blur) the image in post.

Shooting in the highest resolution means that your camera will be doing the least downscaling (or none), and most glitches and bad processing will be at the pixel-level, which means that the higher the resolution the smaller those glitches and errors compared to the size of the image, and un-sharpening de-emphasises these in the footage.
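Both halves of that argument can be sketched with a bit of arithmetic. A one-pixel glitch covers half as much of the frame width at 3840 wide as at 1920 wide, and even a very mild blur spreads its energy across neighbours. The 3-tap box blur below is just a stand-in for whatever un-sharpening you'd use in the NLE, and the pixel values are made up.

```python
def box_blur_1d(row, radius=1):
    """Simple box blur: each output pixel is the mean of its neighbourhood."""
    blurred = []
    for i in range(len(row)):
        lo = max(0, i - radius)
        hi = min(len(row), i + radius + 1)
        window = row[lo:hi]
        blurred.append(sum(window) / len(window))
    return blurred

# A flat mid-grey row with a single-pixel processing glitch in the middle.
BASE = 0.5
row = [BASE] * 9
row[4] = 1.0

blurred = box_blur_1d(row)
glitch_before = max(row) - BASE
glitch_after = max(blurred) - BASE
print(f"glitch amplitude before: {glitch_before:.3f}, after: {glitch_after:.3f}")

# Relative size of a one-pixel glitch at two capture widths.
for width in (1920, 3840):
    print(f"{width} wide: one pixel is {100 / width:.3f}% of the frame width")
```

With a radius of one pixel the glitch drops to a third of its original amplitude, while genuine detail that spans many pixels is barely touched.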

You might notice that all of these shortcomings make the footage sharper, not duller, so the errors have made your footage sharp but in a way that it never was - it's fictional sharpness.

Also, modern displays are themselves becoming sharper, so it's no wonder that footage all now looks like those glitch websites from the late 90s that were trying to be cool but looked like a graphic designer threw up into them...

FilmLight (who make Baselight, which is the Resolve competitor that costs as much as a house) seem to be on a mission to get deeper into the image and bring along the industry on that journey, and I'm really appreciative of their efforts as they're providing more insight into things we can do to get better images and get more value from our limited budgets.

-

14 hours ago, SRV1981 said:

What’s the best way to stress test?

I'd try a range of things, and I'm sure others will have more to add, but I would:

- Check the camera physically to make sure the screen works, buttons, EVF, etc

- I'd update the firmware straight away to the latest

- Put on several lenses and test that they're recognised correctly and the AF and OIS are working

- After putting in a new battery and formatting a memory card in it, I'd pick the best quality normal mode (24/25/30p) and do a long recording on it of something that has a lot of movement in it (a great test is putting three still images on a timeline, each at 1 frame, and then looping the video). If it records without issue for 20+ minutes without anything odd happening that's good, but if you have time I'd test until the card fills up or the battery dies

- I'd also do the same but on the highest frame rate mode

- Check the files are playable in the camera and work on the computer

If it passes all of the above then it's unlikely that it has some lingering issue that isn't also present on new copies as well.

-

About half my cameras were second hand, with a couple of them likely having had a lot more than two previous owners, but I haven't had any issues with them.

If you're concerned about overheating, get a camera with a fan. A fan is the difference between a camera overheating in air-conditioning in under 45 minutes vs a camera recording for 24 hours in a race car at 120F / 48C.

-

5 hours ago, eatstoomuchjam said:

Gotcha - if you're more comfortable with the 35mm equivalent FOV, a 17ish vs a 20ish mm m43 lens makes total sense - and if you value AF, it's not your best option by far. From what I remember, it's a bit noisy and slow. I'm sure the Olympus 17 or the Panasonic 15 is faster. For me, the 14/2.5 and the 20/1.7 were no brains to keep in my kit when I was still using M43 because they were just so tiny. It felt silly not to bring them, especially since they're both really decent lenses.

The size and cost of the 14/2.5 and 20/1.7 sure make them compelling lenses to own and carry around with you, that's for sure. I don't know what the AF is like on the 20mm but all I do is frame up a composition, do a single AF-S via a custom button, then hit record and maintain the focus distance during the clip. I shoot short clips for the edit, so I don't need or want AF-C. From that perspective the 20mm might be just fine. The alternative is manually focusing with peaking, which would likely take longer than most AF.

Speaking of AF speed, I read something the other day - it said that Panasonic's DFD sped up their CDAF, and I realised that I never see a CDAF Panasonic camera doing that thing where the focus racks the whole way to one end and then the whole way to the other end before acquiring focus, it just seems to do a quick jitter and it's done. I never thought about that being DFD but I guess it is.

5 hours ago, eatstoomuchjam said:

For me, the 75/1.8 was always useful for either portraits (though one has to stand a little further away than I like) or for landscapes (as I age, I like telephoto landscapes more and more - just choose the little bit of the scene that I want). The fast aperture let me get pretty sharp stuff even when shooting from a moving car or train without having to crank the ISO on a smaller sensor. At this point, I have a Summicron-M 90/2 ASPH so unless I'd need autofocus, I'd just prefer it for that sort of landscape shot (and on FF, it's a really nice length for portraits, to boot).

I watched a lot of landscape photographer YT (Thomas Heaton etc) and they made a strong case for landscapes being shot with wide lenses and ultra-long lenses. Some of those shots that show just the jagged peak of the mountain or the lone tree or castle on a distant hilltop can be the most stunning.

5 hours ago, eatstoomuchjam said:

Anyway, if you don't mind the aperture limitations of the 14-140 when it's racked to 75mm, you're definitely set there.

Some time ago I realised that DOF depends not only on aperture but also on focal length. So although a variable-aperture zoom gets slower as it gets longer (making the DOF deeper), the longer focal length is also making the DOF shallower, and so I did a bunch of math to calculate the DOF of the same composition. I posted the results in some other thread somewhere here, but the summary is that a lot of variable aperture zooms are almost constant DOF lenses when taking the same composition (ie, if you double the focal length then you'd be twice as far away for the same composition).

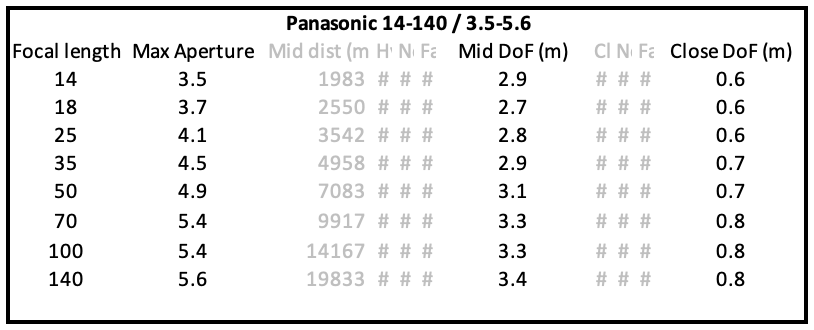

Here's the table of the 14-140mm lens. The "Mid DoF" column is the DoF of a mid portrait shot (chest and up) and the "Close DoF" is just top of shoulders and up:

It's not constant DoF but it's pretty close.
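For anyone who wants to play with the numbers, here's a rough sketch of that kind of calculation using the standard thin-lens DoF formulas. It is not the spreadsheet behind the table. The assumptions are mine: a circle of confusion of 0.015mm for M43, f/3.5 at 14mm and f/5.6 at 140mm for the two ends of the zoom, and a framing where the subject distance is 60x the focal length (so the composition stays the same as you zoom).

```python
def depth_of_field_mm(focal_mm, f_number, subject_mm, coc_mm=0.015):
    """Total depth of field in mm from the standard thin-lens formulas."""
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    if subject_mm >= hyperfocal:
        return float("inf")  # everything to infinity is acceptably sharp
    near = subject_mm * (hyperfocal - focal_mm) / (hyperfocal + subject_mm - 2 * focal_mm)
    far = subject_mm * (hyperfocal - focal_mm) / (hyperfocal - subject_mm)
    return far - near

# Hypothetical framing: subject distance is always 60x the focal length,
# so doubling the focal length means standing twice as far away.
DISTANCE_PER_MM_OF_FOCAL_LENGTH = 60

wide = depth_of_field_mm(14, 3.5, 14 * DISTANCE_PER_MM_OF_FOCAL_LENGTH)
tele = depth_of_field_mm(140, 5.6, 140 * DISTANCE_PER_MM_OF_FOCAL_LENGTH)

print(f"14mm f/3.5:  DoF {wide / 1000:.2f} m")
print(f"140mm f/5.6: DoF {tele / 1000:.2f} m")
print(f"ratio: {tele / wide:.2f}x across a 10x zoom range")
```

Under these assumptions the DoF changes by well under a factor of two while the focal length changes by a factor of ten. Different framing or a different circle of confusion will shift the exact figures.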

I then realised that for my environmental portraits, where I want the subject in focus but the background still recognisable, I didn't want the subject floating in a sea of mush. Also, nailing focus is more important to me than shallower DoF. If the AF isn't going to get it perfect every time and pick the right subject every time, or if there are two people next to each other at slightly different distances from the camera because I'm not standing exactly perpendicular to the line between them, then I'd rather the DoF be a few meters than miss the shot.

The other reason to have a fast lens is the low-light capabilities. FF obviously has the advantage because, all else being equal it gets 4x the amount of light onto the sensor, but this has to be balanced against the DoF which will also be radically shallower for the same T-stop. So if you don't want to shoot with a razor-thin DoF in low-light then you have to stop down. I find that in practice this would level the playing field in many compositions. Not all of them of course, and the seemingly greater investment in sensor technology from Sony in the larger sensors is also a factor, but it makes the topic more complex and far less one-sided than it might first appear.
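The trade-off can be put into numbers with the usual equivalence rules of thumb, assuming a crop factor of 2 for M43 relative to FF: the larger sensor has 4x the area (2 stops more total light at the same f-stop), and matching the DoF of an M43 lens means multiplying its f-number by the crop factor. The f/1.7 value is just an example.

```python
import math

CROP_M43 = 2.0  # assumed crop factor of M43 relative to full frame

def light_advantage_stops(crop):
    """Extra total light on the larger sensor at the same f-stop and framing."""
    return math.log2(crop ** 2)

def equivalent_f_number(f_number, crop):
    """FF f-number giving the same DoF as this f-number on the smaller sensor."""
    return f_number * crop

advantage = light_advantage_stops(CROP_M43)
ff_equivalent = equivalent_f_number(1.7, CROP_M43)

# Stops of light given up by closing the FF lens down from f/1.7 to match DoF.
stops_lost = 2 * math.log2(ff_equivalent / 1.7)

print(f"FF light advantage at the same f-stop: {advantage:.1f} stops")
print(f"M43 at f/1.7 has the DoF of FF at f/{ff_equivalent:.1f}")
print(f"Stopping FF down to match costs {stops_lost:.1f} stops")
```

So if you stop the FF lens down to get the same DoF, the 2-stop light advantage is spent, which is the levelling effect described above. Differences in sensor technology sit outside this simple model.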

5 hours ago, eatstoomuchjam said:

For me, my travel kit contains a few redundant focal length primes - though they're less for the faster aperture now and more for being smaller/lighter/nondescript. Does 1 extra stop on the Fujinon 63/2.8 make any substantial difference than the 32-64/4 racked out? Not really. Is there any appreciable difference in quality on the prime? Not really. Are people more likely to ignore me when it's on there? Yes.

Yeah, it's definitely a case of "get the shot" first, "make the scene better by not making everyone uncomfortable" second, and "have a rig with a great image quality" third.

-

3 hours ago, QuickHitRecord said:

That seems quite bizarre! Which lens is this?

It was this one:

I modified my SJ4000 action camera with it:

When zoomed out the lens doesn't have great close-focus but about 2/3 of the focus range is past infinity, and at the other end of the zoom range it can only focus past infinity by a tiny little bit but is perfectly capable of focusing on the dust on the front element, and then of going even closer and focusing on the dust inside the lens.

Sony A9III with Global Shutter

In: Cameras

Posted

Apparently Soderbergh shot his latest film on the A9 III. In this article he talks about a "Sony DSLR" but there are tweets etc elsewhere that say it was the A9 III. Maybe the global shutter was a deciding factor.

Anyway, good to see that when moving away from cinema cameras the choice isn't completely ridiculous and something sensible was chosen. It's nice when film-makers don't just treat the world of consumer cameras as a novelty but actually as something that has genuine potential and capability.

https://filmmakermagazine.com/124668-interview-steven-soderbergh-presence-sundance-2024/