About kye


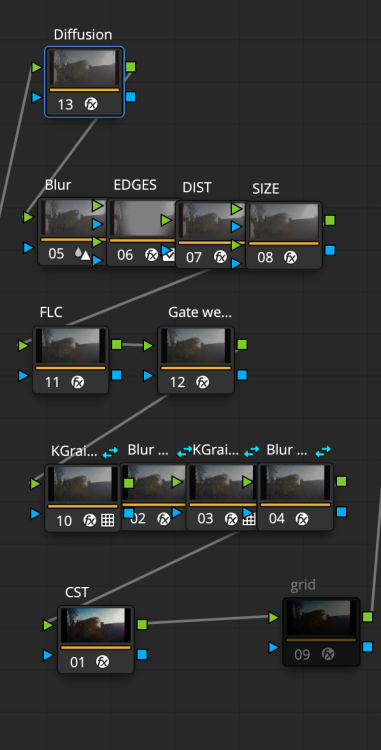

-

Thanks! My film nerd friend also said that this was basically there, and that after YT compression it would be indistinguishable. I'm actually really stunned, as I figured that this project would end with me being happy I got a film look of some kind and everyone else giving up because I was so far away! I'm also sort-of stunned because the FLC and other plugins don't get this close, and all I did was write a random noise generator and then a gaussian blur and we're there.

My plan is to keep going and emulate as much as I can, including the full image pipeline, so filters / lenses / film / colour grading / etc. The way I've structured the grain is that it's at pixel-level on the timeline, so to go from 16mm to 35mm all I have to do is lower the amount of grain and back off the softening, then back off the halation and bloom and gate weave etc, but if it looks good at 16mm it should be fine at 35mm where it's all much more subtle. 8mm is the same, but just turning everything up. Everything is tuneable, so adjusting things isn't that hard once you know which controls are the ones you want.

Top row is diffusion, then 4 nodes for the lens emulation, then FLC and gate weave, then 4 nodes for the grain and softening, then CST to 709 output.

I've started making a list for v8:

- Play with diffusion to simulate netting / diffusion filters
- Add CA to the lens emulation (already done)
- Lessen the amount of blur (I have some great S16 samples now to compare with)
- Add film dirt and damage, like scratches etc. There's an OFX plugin for this, so should be straight-forward and I plan to go subtle with it
- Really analyse my sources, including comparing the 1000Mbps scan my friend gave me to what I see in Resolve, and also comparing the YT samples I've downloaded to the YT version of my emulation (to compare apples-to-apples)
- I'll also start using better footage 🙂

As everything is tuneable, making a number of variants makes sense, potentially at 8mm / 16mm / 35mm, but also other variations too. Other things that are on my mind are things like bleach bypass, split toning (which Resolve doesn't seem to have a good answer for), and maybe some B&W options too. The FLC can do bleach bypass and potentially the B&W looks, but the split-toning might have to be another custom DCTL.

Thanks! Great to get an additional perspective 😄

-

kye reacted to a post in a topic:

The GX85 "Super-16" project


-

kye reacted to a post in a topic:

The GX85 "Super-16" project


-

TrueIndigo reacted to a post in a topic:

The GX85 "Super-16" project


-

John Matthews reacted to a post in a topic:

The GX85 "Super-16" project


-

kye reacted to a post in a topic:

New cinema camera...?


-

There's some truth to that, but I think GoPro are a different breed. Still, it's not a popularity contest.

Seems sensible! What sort of shooting would you do with it? My impression is that something like this is best placed to do things that larger cameras can't do, otherwise it'd be better to use those as they'll have nicer DR, codecs, etc etc. It seems like the advantages this might have would be the size, the crop factor (if that's what you're going for), and maybe the slow-motion which some people have said isn't really available elsewhere.

I have shot a lot on small cameras and enjoyed trying to push things to their limits, including shooting stills on my GoPro 3, which despite not even having a screen still worked really well for street photography (the secret was burst mode).

When you see the camera YouTubers they all have a similar mindset that comes from constantly standing around in public talking to large cameras on tripods (or being filmed by someone else) so size doesn't really matter in that sense and they'll just rig everything up. If, on the other hand, you're going out with a camera for more than a week or two before the NDA lifts, you've got time to learn it and learn how to use it and how it influences you to shoot etc, so you can rig it (or not) in the best way possible for its logical use.

-

Dammit, v7 had a mistake in it, so re-exported and uploaded it.

-

Version 7:

- Brand new custom grain DCTL
- Removed mid-tone softening
- Grain is now after the FLC

I abandoned the grain OFX plugins and wrote my own grain generator DCTL based on a random number generator with some sliders to modify the channel gains and saturation etc, and I tuned it to match some Vision3 samples from a friend. It's mostly blue and a little green with no red.

Putting it before the FLC screwed up the colours and I couldn't work out what to put in to get the right result out, so I just moved the grain to after the FLC. This might mean it isn't right across the luma range, so happy to hear feedback on that.

I also added a resolution chart at the back as I was using it to test some things and decided to keep it in there. It's an 8K source file, so was nice and sharp going into the grade... Fun!
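For anyone wanting to play with the idea outside Resolve, here's a rough Python sketch of that kind of grain generator: random noise per pixel, a gain per channel, and a small blur to soften it. This is not the actual DCTL. The function names, the gains (no red, a little green, mostly blue), the `amount` value and the 1-2-1 blur standing in for a gaussian are all made-up assumptions for illustration.

```python
import random

def make_grain(width, height, gains=(0.0, 0.3, 1.0), amount=0.05, seed=0):
    """Return rows of (r, g, b) noise offsets; each channel is scaled by its gain.

    The default gains are hypothetical: no red, a little green, mostly blue.
    """
    rng = random.Random(seed)
    return [
        [tuple(amount * g * rng.gauss(0.0, 1.0) for g in gains) for _ in range(width)]
        for _ in range(height)
    ]

def soften(plane):
    """Blur a 2D list of floats with a separable 1-2-1 kernel, clamping at edges.

    This is a cheap stand-in for a small gaussian blur.
    """
    h, w = len(plane), len(plane[0])
    horiz = [
        [(row[max(x - 1, 0)] + 2 * row[x] + row[min(x + 1, w - 1)]) / 4 for x in range(w)]
        for row in plane
    ]
    return [
        [(horiz[max(y - 1, 0)][x] + 2 * horiz[y][x] + horiz[min(y + 1, h - 1)][x]) / 4
         for x in range(w)]
        for y in range(h)
    ]

def apply_grain(image, grain):
    """Add grain to an image (rows of (r, g, b) floats in 0..1) and clamp to 0..1."""
    return [
        [tuple(min(1.0, max(0.0, c + n)) for c, n in zip(px, gpx))
         for px, gpx in zip(img_row, grain_row)]
        for img_row, grain_row in zip(image, grain)
    ]

if __name__ == "__main__":
    grey = [[(0.5, 0.5, 0.5)] * 16 for _ in range(16)]
    grain = make_grain(16, 16)
    # Soften just the blue grain plane, as an example of blurring after the noise.
    blue = soften([[px[2] for px in row] for row in grain])
    out = apply_grain(grey, grain)
    print(out[0][0], blue[0][0])
```

Changing the seed per frame gives a fresh pattern on every frame, which matches the assumption that there's no relationship between the grain on consecutive frames.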

-

John Matthews reacted to a post in a topic:

The GX85 "Super-16" project


-

kye reacted to a post in a topic:

The GX85 "Super-16" project


-

kye reacted to a post in a topic:

The GX85 "Super-16" project


-

Did the grain in previous versions also look like this, or have I done something that pushes it in the wrong direction perhaps?

Version 4:

Version 6:

I got feedback that Vision3 tends to only have grain that is in the yellow/blue direction, unlike mine that contains a lot of grain on the red channel (despite there being a slider for this and me turning it down), so I'm going to rebuild the grain engine again to give me some better control over this.

I've heard that grain "crawls" and even seen controls for this in the OFX, but I have no idea what this means. Do you know? I might have to read about this. I would assume (perhaps naively) that there would be no relationship between the grain on each frame, with each essentially being a random distribution of crystals.

My thoughts turn to the digital pipeline perhaps, but I exported using ProRes and then it's compressed by YT, so if we compare that to someone that scanned film and then uploaded that to YT then my pipeline doesn't have anything in it that theirs didn't, so if mine differs then it must be in how I'm generating things.

This video contains lots of high-quality film examples from 8mm / 16mm / 35mm film shot in various scenes - I assume these look fine to you? (linked to a good timestamp)

Interestingly, I just became aware there's an open source plugin under development that does all this stuff for free. It's still in beta phase, and apparently runs very slowly, but I'll be keeping an eye on it. https://spektrafilm.114c.de

-

I was chatting with ChatGPT about the look of cinema and it gave me some things to think about, one of which was experimenting more with diffusion, which I don't think I've really found a good way to emulate in post.

This is v6 of the test shots. Changes made are:

- Slightly less softening
- Less gate weave, just because I don't really like it
- Lowered Midtone Detail as a sort-of diffusion method

Definitely a work in progress, but if anyone has any strong opinions on this then happy to hear them.

-

John Matthews reacted to a post in a topic:

The GX85 "Super-16" project


-

John Matthews reacted to a post in a topic:

The GX85 "Super-16" project


-

eatstoomuchjam reacted to a post in a topic:

The GX85 "Super-16" project


-

Here's a shot where I graded the 4x digital zoom to match the optically zoomed reference. I sharpened, added midtone detail and played with curves to counter the diffusion from the 14mm F2.5 lens.

14mm with 4x (look only)

14mm with 4x (graded to match)

Optically zoomed to 56mm (reference image)

So there we go, if I wanted to get a 4x shot to match, even between lenses, then it's completely possible.

-

Stepping away from film emulation for a moment, the thought occurred to me that we have softened the image enough for the 2x and 4x in-camera digital zooms to be tested. The GX85 sensor is 4592px wide, which with its 1.1x 4K crop factor means that the horizontal resolution for its 1x is about 4174px, with the 2x crop it's about 2087px, and the 4x is about 1043px. Normally the 2x is usable on a 1080p timeline and the 4x is not, but with the film emulation on it, the only way to find out is to test it in the real world.

Here is the GX85 with 14-140mm lens on it at F5.6.

Optically zoomed to about 25mm:

At 14mm and zoomed 2x in-camera:

To me this looks completely fine. So far so good..

Optically zoomed to about 50mm:

At 14mm and zoomed 4x in-camera:

This is the big one. I see some differences here, but not enough that if I saw this in an edit it would pull me out of it, which is really what I'd want - to know if it's usable.

For some context, I shot this test with three lenses, the 14mm F2.5 pancake lens (which is the lens I'll be using), the 12-35mm F2.8 and the 14-140mm shown above. While the light was changing during the test, what is interesting is that the 14mm F2.5 has much less contrast. The reason I point this out is that when comparing the 14mm F2.5 with in-camera zoom to the other lenses I think the lens rendering itself is the most significant difference.

2x comparison:

and now for the main prize, 4x:

This is an excellent result as far as I am concerned!

Caveats:

- This is with the v5 film emulation grade, which as @eatstoomuchjam pointed out might be on the softer side of the range for what 16mm film rendered
- However, this grade DID NOT include the lens emulation stack, so the lens barrel distortion, vignetting, and corner softening haven't been applied, so that could go some way to evening out the look
- I haven't done any sharpening to compensate for any softness. In the past I've discovered that a digital zoom of about 1.6x can be equalised by some careful sharpening in post, so sharpening isn't to be underestimated, especially in the context of an edit where (hopefully) the viewer is looking at the content and not the image
- Super-16mm film was often shot on several primes, where some would have been sharper than others, or on zooms, where the zoom would have been sharper in certain parts of its range than others, so natural variation in this stuff is potentially making it a more accurate emulation rather than detracting from it

I suspect that the 4x is on the edge of being too soft, which makes sense as it's a 1K sensor readout going through a lot of processing and rescaling, but this is a great result.

So.... the GX85 and 14mm F2.5 lens is a Super-16mm setup with a "turret" of T2.5 lenses that are equivalent to 31mm, 62mm and 124mm, and it's (just) pocketable and fits in the palm of your hand!

Happy to share the power grade if anyone wants to play with it.
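If you want to check the numbers in this post, here's a quick Python sketch of the arithmetic. The sensor width, the 1.1x 4K crop and the 14mm lens come from the post. The 2.0x MFT crop factor and the function names are my assumptions.

```python
SENSOR_WIDTH_PX = 4592   # GX85 sensor width quoted in the post
CROP_4K = 1.1            # 4K crop factor quoted in the post
MFT_CROP = 2.0           # assumed full-frame crop factor for MFT
LENS_MM = 14             # the 14mm F2.5 pancake

def readout_width(zoom):
    """Horizontal pixels actually read out for a given in-camera digital zoom."""
    return SENSOR_WIDTH_PX / CROP_4K / zoom

def equivalent_focal(zoom):
    """Full-frame equivalent focal length in mm, including the 4K crop and zoom."""
    return LENS_MM * MFT_CROP * CROP_4K * zoom

for zoom in (1, 2, 4):
    print(f"{zoom}x: {int(readout_width(zoom))}px wide, "
          f"{equivalent_focal(zoom):.1f}mm equivalent")
```

The widths truncate to 4174 / 2087 / 1043px as quoted. The unrounded equivalents come out at 30.8mm, 61.6mm and 123.2mm, so the 31 / 62 / 124mm figures are from rounding the 1x value to 31mm first and then doubling.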

-

kye reacted to a post in a topic:

New cinema camera...?


-

eatstoomuchjam reacted to a post in a topic:

New cinema camera...?


-

kye reacted to a post in a topic:

New cinema camera...?


-

eatstoomuchjam reacted to a post in a topic:

The GX85 "Super-16" project


-

Thanks! The more I get into this, and the more I think about what my own associations might have been, the more I realise how variable things are. Obviously people talk about how there isn't a single "look" for film, or even for an individual stock, as it depended on where it was developed and even who was running the machine that day, but if we zoom out then the look of film for one person might have been Hollywood movies at their local Megaplex and for someone else it might have been indie films at a tiny theatre with a super-worn projector, and for someone else it might have been the 8mm or 16mm their parents shot and projected.

Even the examples on YT are stunningly different. I've linked to these videos before, but it's just amazing to me how different they are...

S16mm on Bolex at night (IIRC on 500T and potentially pushed a stop?)

S16 on Laowa Nanomorphs (Vision3 50D):

I can tell you, the 35mm projection of Goodfellas I saw was absolutely nowhere near how sharp and clean this is!

That's very encouraging to hear. I've shared this in a number of places and mostly got no feedback so I was wondering if it was more a case of "if you have nothing good to say then don't say anything", or potentially "you can't be serious, this guy is so far from the mark it's a lost cause and there's no point in me saying anything!" I didn't think I was doing that badly, but with film emulation some people have rather exacting standards!

Assuming I've reached the goal of it not being obviously fake, I'm thinking about what I would do to grade it further, and the thing that comes to mind is a split-tone and the colour. Unfortunately the split-tone in FLC (and IIRC the separate OFX plugin in Resolve) aren't that great, so once again it looks like I might have to go the custom route. I'm also keen to play with the Subtractive Sat, Richness, and Bleach Bypass sliders too as I think they might be the key to getting a range of interesting looks.

Some people do film emulations and have the saturated areas super dense, whereas others don't emphasise it so much. I remember someone posting their film emulation power grade to the LGG forums and the saturated areas were so dark I thought they were playing a practical joke on the forums, but people gave serious replies and they responded seriously too, so I just shook my head and didn't post any feedback.

-

kye reacted to a post in a topic:

The GX85 "Super-16" project


-

kye reacted to a post in a topic:

Please recommend me some Manual Focus EF lenses!


-

Interesting that the Pro and ILS versions are the same price - that's not something I would have anticipated. Seems to me it's a pretty niche product. To justify it you'd have to really value something that it can do that other cameras can't (like shooting action stuff!), or you'd have to be looking at it as an all-rounder / your only camera and therefore not really want the things it can't do. I'm guessing that will be the case for enough people that the GoPro leadership and management bros will be able to look out at the world and see enough zealots who think it's totally rad dude and get the impression that they're doing the world a favour by just existing.

There are a ton of options actually. The classic recommendation is the Laowa 7.5mm F2, which is rectilinear (not fisheye) and small/light and nice to use etc. It seems like you're not really that up with what is available for the MFT ecosystem - there are new lenses being released all the time. I recommend searching B&H, in the mirrorless catalog, and just select MFT as the mount and then explore what's there. B&H have 29 entries for prime MFT lenses under 9mm, including options at 3.5mm, 4mm, 6mm, 6.5mm, 7.5mm and 8mm. Some of these will be duplicates, but there's still a lot of options there. There's even a 4.5-10mm F2.8 zoom from Laowa in there, which appears to be a new product coming soon.

B&H is the best option for new/current lenses:
https://www.bhphotovideo.com/c/products/Mirrorless-Camera-Lenses/ci/17912?filters=fct_lens-mount_3442%3Amicro-four-thirds

Then there's this page from Alik Griffin which is awesome and lists all MFT lenses ever made:
https://alikgriffin.com/micro-43-lens-buying-guide/

No idea how many lenses are on that list, but it's about 35 pages long, so quite a few. Everyone who went FF thinks that MFT stopped suddenly in their absence!

What would you define as "optimised for MFT"? Is it sharpness? or something else? Are there specific criteria that MFT has that differs from APSC sensors perhaps? If so, does it also differ between bodies or manufacturers? Like, I'm sure the sensor stack on an Olympus camera is different to the Panny ones?

Genuinely curious, as loads of lenses are made for the new FF mirrorless mounts and seem to cover every one of them (E/Z/L/RF/M) but people don't seem to be talking about them not being "optimised for <insert mirrorless mount here>".

-

kye reacted to a post in a topic:

New cinema camera...?


-

Somewhat embarrassingly, I originally bought the EF-MFT SB to replace my M42-MFT SB, but when I put my Takumar 50mm F1.4 on an M42-EF adapter and that onto the EF-MFT SB, the rear element of the Takumar interfered with the EF SB glass when I tried to focus more than a few meters away (nowhere near infinity focus). I probably could have determined that before I bought it if I'd searched enough, which is the embarrassing part. I'm not really sure how to tell which M42 and C/Y options would have this problem too.. is there a way to know?

What Nikon lenses would be F1.4 or faster? and are there issues with the SB?

The native MFT lenses from TTArtisan / 7artisans are good options, but aren't as fast as the two Voigtlander F0.95 lenses I have, and are also bettered by a F1.4 lens on a SB. I did shoot with the TTArtisan 50mm F1.2 in Japan and it seemed pretty good, but at 100mm equivalent FOV it's on the long end for my hand-held stuff.

The Zeiss ZE Planar 50/1.4 looks like a potential option, being cleaner than the Takumar+M42-SB combo. The Mitakon 50mm F0.95 would be a stop faster than the Zeiss, and would be cleaner than the Takumar too, but is more expensive. I should look at the Sigma F1.4 primes too, in case something there stands out, although they're competing with the Zeiss Planar, which I would imagine is tough competition?

I already have a ton of options and combined with my very specific requirements I'm really pushing things to find any options I haven't thought of, but I didn't think it would be so difficult to find super-fast FF EF lenses!

-

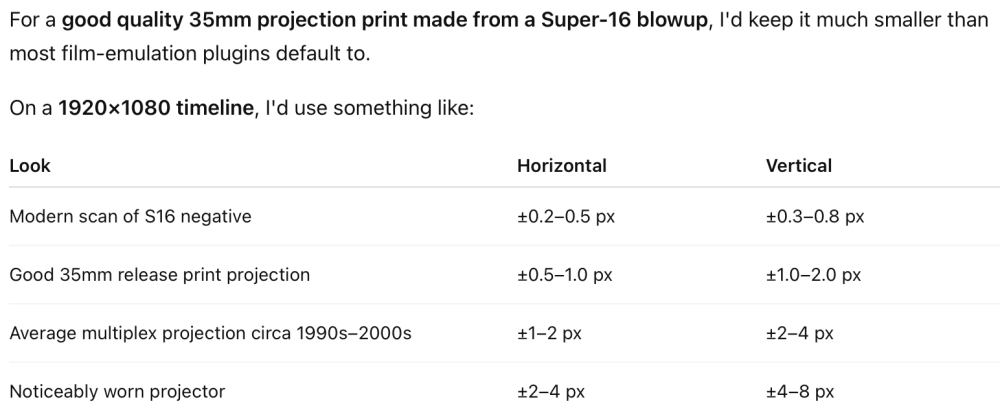

Thanks for the feedback! The gate weave was applied at the timeline level, so is the same settings throughout. It's funny how it appears differently on different shots though, maybe because the coffee equipment is motionless the gate weave stands out more?

The original gate weave within the FLC looked like periodic horizontal movement, which surprised me as I would have thought that the film moving vertically at high speed and then being abruptly stopped for each frame would have resulted in more vertical positioning error than horizontal. Sadly it doesn't have any controls other than Amount and Rate.

I ended up completely rebuilding it using the Camera Shake OFX plugin that has lots of controls. Sadly it seems based on a sine function with a bit of randomness built in, so I had to play with the rate slider so it would interact chaotically with the frame-rate to appear relatively random. It does have separate amounts of vertical and horizontal (and also rotation, which I didn't use).

I asked AI about the different characteristics and during a decent discussion it suggested:

I got it to update the numbers with a 16mm projection print and projector, which made them slightly larger, but later on in the discussions it also suggested having a small gate weave on each frame and occasionally having a larger registration slip of 3-5px, which it said was what people remember about the look. I don't really know how to do that in Resolve, so didn't include it. It also suggested that a worn projector might include some horizontal wandering and that film emulation plugins might emulate that as well, which might be what the FLC was doing.

I ended up adjusting the vertical and horizontal so they were stronger than V4 but not so much that I hated it. Another thing it mentioned was that on larger screens the amount will appear much more than on smaller ones, and I'm editing in semi-cinema conditions, with a FOV of someone sitting in the middle of a normal theatre. If you're looking on a smaller device it might appear less.

When I went to see Goodfellas at a theatre that was projecting from original print distributions, they played a bunch of old ads and a bunch of previews (IIRC a lot of these were 16mm) as well as the main feature on 35mm. The gate weave was really inconsistent, with some items having lots and others having none and being noteworthy for how absolutely rock solid they looked.

Anyway, what else separates it from looking like real film? I suspect we're not there yet, and probably not even close...
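To make the gate weave idea concrete, here's a rough Python sketch of per-frame offsets built from a sine wave plus some randomness, with an occasional larger vertical slip of 3-5px on top. This is not how the Camera Shake OFX or the FLC actually work internally. Every name and number here (rate, amplitudes, jitter, slip chance) is a hypothetical value I picked for illustration.

```python
import math
import random

def gate_weave(num_frames, fps=24.0, rate_hz=7.3, amp_x=0.3, amp_y=0.6,
               jitter=0.2, slip_chance=0.01, slip_px=(3.0, 5.0), seed=0):
    """Return a list of (dx, dy) pixel offsets, one per frame.

    A rate that is not a neat fraction of the frame rate keeps the sampled
    sine from repeating in an obvious pattern. All defaults are made up.
    """
    rng = random.Random(seed)
    offsets = []
    for frame in range(num_frames):
        phase = 2.0 * math.pi * rate_hz * frame / fps
        # Small continuous weave: sine plus gaussian jitter, stronger vertically.
        dx = amp_x * math.sin(phase * 1.31) + rng.gauss(0.0, jitter * amp_x)
        dy = amp_y * math.sin(phase) + rng.gauss(0.0, jitter * amp_y)
        # Occasional larger vertical registration slip on a single frame.
        if rng.random() < slip_chance:
            dy += rng.choice((-1.0, 1.0)) * rng.uniform(*slip_px)
        offsets.append((dx, dy))
    return offsets

if __name__ == "__main__":
    for frame, (dx, dy) in enumerate(gate_weave(10)):
        print(f"frame {frame}: dx={dx:+.2f}px dy={dy:+.2f}px")
```

In principle a table of offsets like this could be keyframed onto a transform, which might be one way to get the occasional slip that the sliders alone don't offer, but I haven't tried that.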

-

Gate Weave increased (and I rebuilt the gate weave engine using the Camera Shake OFX). I'm not sure I like the aesthetic, so might turn it down for my own projects.

@Clark Nikolai @eatstoomuchjam @Framed_By_Dan would especially appreciate your assessments of how close this is and what might be any next steps. I feel like it's good enough for my (untrained) eye, but if there are any more specific things you can identify then I'd be keen to keep going and see how far we can go.

I'm gradually disabling things in the FLC plugin and manually recreating them myself using other plugins or just manual methods.

-

Soooooo...... no more EF manual focus lenses around I should know about?

-

Aussie Ash reacted to a post in a topic:

Interesting Breakdown Of Arri Colour Science


-

As a 47mm F2.2 it's pretty close to a 50mm, so a pretty good FOV if that's the look you like. I've just bought the Panasonic 25mm F1.7, which is 50mm F3.4 on the GH7, and it's my first 50mm FOV lens, so I'm keen to start taking that out and about and "learning" that focal length. It probably seems odd to most that I've never shot with that focal length, but it's never really suited the situations I've been shooting in.

Big and heavy isn't desirable, especially as I have some pretty killer combinations in that range already. I've got the Voigtlander 17.5mm and 42.5mm F0.95 lenses, which are 35mm and 85mm F1.9 equivalents, and with the Sirui 1.25x adapter they're as wide as 28mm and 68mm F1.5. Problem is those combinations are each 1300g / 46oz (2100g / 74oz with GH7) which is usable for hand-held shooting but my arms are pretty much done after a few hours. Of course, I'm also pretty much done with being on my feet for that long too so the setup isn't the limiting factor. These setups also have the advantages and coolness factor of being anamorphic too, so there's that!

I'm slightly tempted by some other much stronger anamorphic adapters too, but in playing with the Sirui I've learned that they're very dependent on the taking lens, and some lenses work and other lenses that seem very similar are an absolute train wreck, so it's basically a blind purchase and these things aren't that cheap.

My current preferred Night Cinema combo is the Takumar 50mm F1.4 and M42-MFT speed booster, and I bought the EF-MFT speed booster to try and get a slightly cleaner image, but unfortunately the 50/1.4 -> M42-EF adapter -> EF-MFT speed booster stack doesn't work as the back element of the Takumar interferes with the EF SB glass. I figured that the EF SB would open up a whole new world, as EF used to be the default standard for about half the world's camera users, plus EF and PL were the de facto standards for cinema. My recollection was that there were tonnes of interesting and super-fast third party EF lenses, but now I go looking there don't seem to be so many to be found.

If budget was no object then there are a lot of cinema lenses that are very interesting, even anamorphic ones, but budget is a consideration so unfortunately things like the USD1400 Blazar Cato 85mm T2.8 2x anamorphic lens will have to just remain a dream!

Apart from this being like me asking "can you recommend a lawnmower?" and you suggesting "just sell your house and move to a NYC apartment", I actually don't think it's true. I've done a lot of "what if" scenarios for what I'm trying to achieve, and while it might have worked for you specifically, when I look at the lenses for a given system I find every system to be very lacking. Sure, you can get fast lenses at 28/35/50/85, but there are all kinds of other gaps that MFT just doesn't have. This is gradually changing, as manufacturers gradually fill out the various lens lineups, but my impression of the current landscape is that most FF systems have the fast primes and holy trinity zooms (28/35/50/85 and 16-35/24-70/70-200) but very limited and patchy coverage of small and light lenses and in-between focal-lengths, etc.

Adapting vintage SLR lenses gives access to character lenses, but only FF ones, not really S35 cinema ones (unless you go to crop mode which is throwing away resolution and now you're half-way to MFT!), and not the S16 ones. You sort of get the standard focal lengths in modern and in vintage, but that's it. MFT has all the small and light and in-between focal-lengths, but the weakness is in the fast primes and fast zooms.

MFT used to have all the advantages of mirrorless essentially being able to adapt any SLR lens, but as time goes on, more and more of the interesting lenses are designed for mirrorless APSC/FF cameras and therefore not usable (or only available in MFT mount so you don't get a SB advantage) or are EF cinema lenses and are huge/heavy/expensive because they compromised on size and cost to ensure they're sharp sharp sharp sharp sharp enough for modern use.

As an example, I have a tracking sheet of all the lenses I own (not every one available) and its FF equivalent. This table includes: 15mm 18mm 26mm 28mm 30mm 31mm 34mm 35mm 40mm 50mm 53mm 56mm 59mm 64mm 70mm 71mm 75mm 78mm 80mm 82mm 83mm 85mm etc. I don't even think it's complete! Most of those are different characters too, being a mix of different manufacturers, vintages, and combinations of being native / with a SB / with an anamorphic adapter / with SB and anamorphic adapter, etc.

So yeah, if you happen to want a fast standard focal length, or a fast trinity zoom (that's enormous), then FF is great, but the rest is pretty lacking.

The other reason I'm not moving to FF is that most of what I shoot is on a native 10x zoom, which FF doesn't really have a good answer for.

The other other reason is that the work for me to sell all my MFT equipment and re-buy in FF would make it so that I should just work those hours at my day job and increase my budget, so selling things isn't really cost effective.

The other other other reason is that the GX85 has all sorts of configurations that FF can't match, so I wouldn't be selling that anyway.
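The equivalence figures near the top of this post can be reproduced with a short Python sketch. The 2.0x MFT crop is an assumption, and dividing both the focal length and the f-number by the 1.25x squeeze is the horizontal-FOV convention the post's numbers seem to follow, not a universal rule. The function names are made up.

```python
def ff_equivalent(focal_mm, f_number, crop=2.0, squeeze=1.0):
    """Full-frame equivalent (focal length, f-number) for a lens.

    crop is the sensor crop factor (2.0 assumed for MFT); squeeze is the
    anamorphic adapter factor, applied to the horizontal field of view.
    """
    return focal_mm * crop / squeeze, f_number * crop / squeeze

def grams_to_oz(grams):
    """Convert grams to avoirdupois ounces (1 oz is about 28.3495 g)."""
    return grams / 28.3495

lenses = [
    ("Panasonic 25mm F1.7", 25.0, 1.7, 1.0),
    ("Voigtlander 17.5mm F0.95", 17.5, 0.95, 1.0),
    ("Voigtlander 42.5mm F0.95", 42.5, 0.95, 1.0),
    ("Voigtlander 17.5mm + Sirui 1.25x", 17.5, 0.95, 1.25),
    ("Voigtlander 42.5mm + Sirui 1.25x", 42.5, 0.95, 1.25),
]

for name, focal, fnum, squeeze in lenses:
    eq_focal, eq_f = ff_equivalent(focal, fnum, squeeze=squeeze)
    print(f"{name}: {eq_focal:.0f}mm F{eq_f:.1f} equivalent")

print(f"1300g is about {grams_to_oz(1300):.0f}oz, 2100g is about {grams_to_oz(2100):.0f}oz")
```

The same function covers speed boosters if you fold the booster's reduction factor into `crop`, though that factor varies by model so it isn't hard-coded here.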