-

Posts

8,146 -

Everything posted by kye

-

Ultimately, the best thing to apply for this look would be one of these:

-

Here's the result of that Shot Match feature, just so you're aware: I think the video you're looking for from Aram K is this one:

-

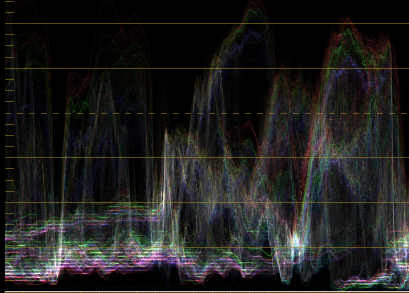

A few thoughts:

- Look at your scopes: see what the waveform monitor is telling you about the levels in the image and their colour balance. Check this out: You can see that in the shadows there is pink below green, which means there's green in there. You can see in the middle and on the right there are much higher levels where red is highest and blue is lowest with green in the middle. That means warm tones at a higher luminance, which in this case is the girl's skin tones.
- Put a global adjustment over your reference image and your grade and turn the saturation right up. It will make your vectorscope much easier to read and will accentuate all the colours so they're easier to see. It's like colour grading with a magnifying glass. Match the colours like that, then turn it off, and it will be a much better match than it looked while you were doing it.
- Apply an outside window and pull gain down to zero to crush the whole image (except for your window), then look at the scopes to see what just that part of the image looks like. Hover the window over the skin tones and see what the vectorscope is telling you: where is the centre of the hue range? How much hue variation is there? How saturated are they? Hover over other parts of the image too.
- Use a glow-style effect to bloom highlights to match the reference before you apply any other adjustments, as they radically affect your shadow levels and overall contrast.
- When playing with shadow levels and black levels, crank the Gain right up so that most of the image is clipping and you've "zoomed into" the shadows. Now 10 IRE might be up at 100 IRE and you can easily compare your reference with your grade.

All these are useful for grading your own footage in reference to itself too.
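To show why cranking the Gain makes shadow differences easier to see, here is a rough sketch. It treats Gain as a plain multiplier clipped at 100 IRE, which is a simplification of what a real grading tool does, and the sample values are made up purely for illustration.

```python
def apply_gain(ire_values, gain):
    """Multiply levels by gain and clip to the 0-100 IRE range."""
    return [min(100.0, max(0.0, v * gain)) for v in ire_values]

# Hypothetical shadow samples (in IRE) from a reference and a grade
reference_shadows = [2.0, 4.0, 10.0]
grade_shadows = [3.0, 5.5, 10.0]

gain = 10.0  # assumed value: pushes 10 IRE up to 100 IRE

ref_zoomed = apply_gain(reference_shadows, gain)
grade_zoomed = apply_gain(grade_shadows, gain)

# Differences that were 1-1.5 IRE are now 10-15 IRE apart on the scope
differences = [g - r for g, r in zip(grade_zoomed, ref_zoomed)]
print(ref_zoomed, grade_zoomed, differences)
```

A 1 IRE mismatch in the blacks is hard to spot on a waveform, but after the gain it is a 10 IRE gap.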

-

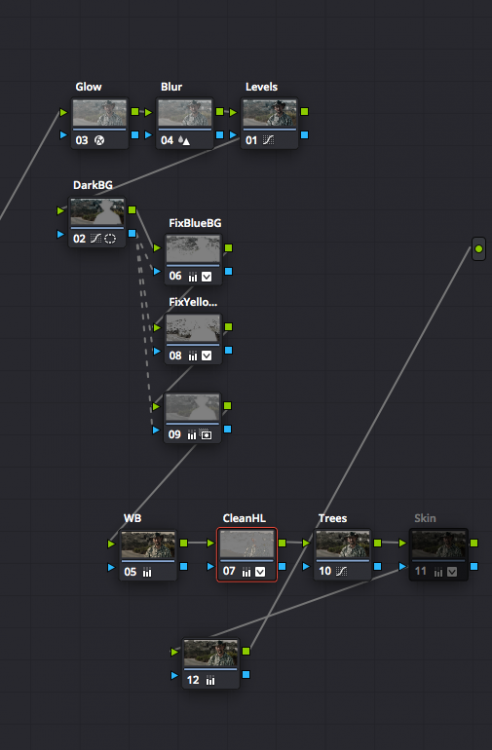

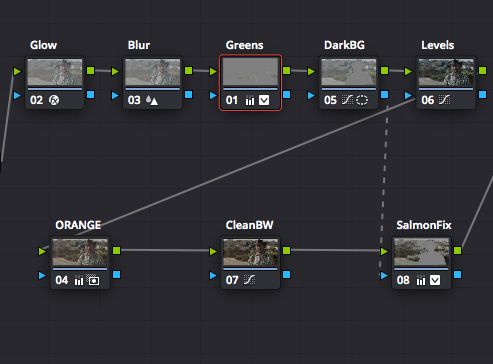

Attempt number one. Reference: Grade: and the node graph which quite obviously shows that this is the wrong way to go about such a thing: Attempt 2 Reference: Grade: Node graph - simpler but still awful: The absolute best way to match scenes is to get any camera and point it at something that looks like your reference.

-

I think it's about viewing angle. When I was getting my 32 inch monitor I looked up the THX and SMPTE specifications for viewing angle and it's quite wide, with the 32 inch display being in between the two specs when at arm's length. If you're looking at a 55 inch TV at 10 feet then you're nowhere near that viewing angle and won't see anything like the detail I'm seeing, or that would be seen in theatres. From THX (https://www.thx.com/faq/): For your viewing distance you'd need a 100 inch TV...
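If you want to check the geometry yourself, here is a quick sketch. It assumes a 16:9 screen viewed square-on. The 40 degree target is my own assumption for illustration, not a figure quoted above; I picked it because it lands close to the 100 inch number.

```python
import math

def screen_width(diagonal, aspect_w=16, aspect_h=9):
    """Width of a screen from its diagonal (same units as the diagonal)."""
    return diagonal * aspect_w / math.hypot(aspect_w, aspect_h)

def viewing_angle_deg(diagonal, distance):
    """Horizontal angle the screen fills when viewed square-on from `distance`."""
    return math.degrees(2 * math.atan(screen_width(diagonal) / (2 * distance)))

def diagonal_for_angle(angle_deg, distance, aspect_w=16, aspect_h=9):
    """Diagonal needed to fill `angle_deg` horizontally at `distance`."""
    width = 2 * distance * math.tan(math.radians(angle_deg) / 2)
    return width * math.hypot(aspect_w, aspect_h) / aspect_w

tv_angle = viewing_angle_deg(55, 120)   # 55 inch TV at 10 feet (120 inches)
needed = diagonal_for_angle(40, 120)    # assumed 40 degree target at 10 feet

print(f"55 inch at 10 ft fills about {tv_angle:.1f} degrees")
print(f"A 40 degree angle at 10 ft needs about {needed:.0f} inches")
```

Swap in your own diagonal and distance (in the same units) to see where your setup sits.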

-

This feature, as I understand it, talks to the lens and then just crops into the sensor to only use the image circle that the lens covers. There are some issues:

- It doesn't work with non-native lenses, or lenses that don't talk to the camera (ie, all my lenses).
- The GH5 has a 5K MFT sensor. To have the same pixel density it would need 7.4K S35 or 10.7K FF. If it didn't have that resolution then there would be fewer pixels in the MFT image circle and I'd effectively get a downgrade.
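For anyone wanting to see where the 7.4K and 10.7K figures come from, here is the arithmetic as a sketch. The sensor widths are assumptions on my part (S35 in particular varies between cameras), and "5K" is taken as 5120 pixels wide.

```python
# Assumed sensor widths in millimetres; S35 varies by manufacturer
MFT_WIDTH_MM = 17.3
S35_WIDTH_MM = 24.9
FF_WIDTH_MM = 36.0

def equivalent_width_px(base_px, base_mm, target_mm):
    """Horizontal pixels a wider sensor needs to keep the same pixel density."""
    return base_px * target_mm / base_mm

gh5_px = 5120  # "5K", assumed to mean 5120 pixels across

s35_px = equivalent_width_px(gh5_px, MFT_WIDTH_MM, S35_WIDTH_MM)
ff_px = equivalent_width_px(gh5_px, MFT_WIDTH_MM, FF_WIDTH_MM)

print(f"S35: {s35_px / 1000:.1f}K, FF: {ff_px / 1000:.1f}K")
```

Anything below those numbers means an MFT crop from the bigger sensor has fewer pixels than the GH5 already gives.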

-

That would rule it out for me. I've already invested in a lens collection that is at my preferred focal lengths, I don't want to have to re-buy my lenses to keep the same FOV as what I have (or in the case of the 7.5mm Laowa I simply cannot replicate the FOV as there is no equivalent lens). Your suggestion is half-way to suggesting a FF GH6, which exists - it's called the S1H.

-

We already have 5K in the GH5. There's only one mode that uses it, but it's there and is used for downscaling in most of the other modes of course. I'm much more interested in a camera that does 4K and 1080p better. I'd take Dual-ISO or eND over any increase in resolution any day of the week. We already have 400Mbps 4K 422 10-bit ALL-I, and there aren't many people who can claim much benefit from a "better" output file than that, especially considering that the 4K killer mode is also already downsampled. EOSHD seems to be one of the most technically savvy and demanding communities of GH5 users, so have a look at how we're delivering. Resolution is not the answer.

-

I have a 32inch UHD display and the differences are obvious between 4K and 1080p. Having said that though, the differences between YT 4K and a 1080p Prores HQ export are slim, with the Prores having the advantage of course. It's all about bitrate, not resolution.

-

I think framerate is an artistic choice and I'm not much of a fan of the 60p look. I used to be pretty insensitive to it, but the more I get into film-making the more I see, and now it looks strange and video-y. My internet is fine with 4K but can't do 4K60, so I notice whenever something is uploaded in 4K60, and the only 4K60 uploads I've seen were shot in 60p too. Maybe others are frame-doubling but I haven't seen any. Here's a video uploaded in 1080 that has both:

-

True, but for each combination of resolution/framerate/DR there is very little you can do - that's what I mean by a brick wall. True, but probably not very useful. If you upload at a higher resolution then that resolution will have a higher bitrate, but the other resolutions don't. ie, if you upload at 1080p and watch at 1080p you get a certain bitrate, if you upload at 4K and watch at 1080p you get the same bitrate as before. You're only catering to the people with higher resolution displays, or those who manually override the resolution settings to stream in a resolution higher than their display. Exporting and uploading at a higher framerate probably hurts the aesthetic more than the extra bitrate helps, so that's not a particularly useful thing. I guess you could upload at double the framerate you shot at and just have every second frame the same in the file, but they'd move because the compression would make them different so it would still be a strange effect. Higher dynamic range is an interesting idea, but I'm not sure how you guarantee the same colour grade between those watching in HDR vs 709, so that's probably a wild-card too. YouTube is free, and the quality is "good enough". I think the strategy is to do our best and just work around it. It's not bad, and the content is what matters, rather than the image, after all 🙂

-

How on earth would that happen??? WTF are people doing with their SD cards.... wow.

-

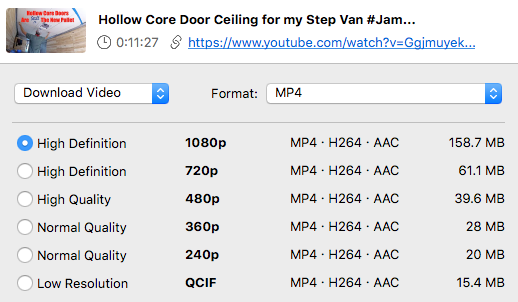

It sounds like this is your workflow:

- Shoot and record in either 4K Prores RAW, 4K Prores 422 or 4K 420
- Edit / grade and export to 4K h264
- Upload to YT

If so, I suggest you consider the bitrates involved. 4K YT is something like 30Mbps. People suggest that you want to upload around 100-200Mbps h264 to YT to get the best results, but that any more than that is just wasting bandwidth. So shooting 4K Prores 422 would mean shooting 471Mbps to export at 100-200Mbps for YT to stream at 30Mbps. In this sense there's a pretty limited return on investment for shooting a higher quality acquisition format.

The other consideration is that 8-bit and LOG formats aren't a good combination, so I'd suggest you either shoot 10-bit 422 and LOG, or shoot 8-bit and a more rec709 profile.

Here's a reference for codecs and bitrates / bit depths / etc: https://blog.frame.io/2017/02/13/50-intermediate-codecs-compared/ and here's the thread where the YT quality and bitrates were tested: https://forum.blackmagicdesign.com/viewtopic.php?f=21&t=85016
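To put those bitrates in perspective, here is a small sketch that turns them into storage per hour and compares each stage to the YT stream. It uses only the rough figures mentioned above and decimal gigabytes, and ignores audio and container overhead.

```python
def gb_per_hour(mbps):
    """Storage for one hour of footage at a given bitrate (decimal GB)."""
    bits_per_hour = mbps * 1_000_000 * 3600
    return bits_per_hour / 8 / 1_000_000_000

# Approximate bitrates in Mbps, taken from the post above
stages = {
    "4K Prores 422 acquisition": 471,
    "h264 upload (upper end)": 200,
    "h264 upload (lower end)": 100,
    "YT 4K stream": 30,
}

yt_mbps = stages["YT 4K stream"]
for name, mbps in stages.items():
    ratio = mbps / yt_mbps
    print(f"{name}: {gb_per_hour(mbps):.1f} GB/hour, {ratio:.1f}x the YT stream")
```

The acquisition format works out at well over ten times the data that the viewer ever receives.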

-

That lens looks familiar.... I think I paid $20 for it, including shipping.

-

Welcome to the forums.. no need to lurk for so long, most of us don't bite 🙂 Reframing in post is one of the (few) valid reasons for "needing" 4K, and grabbing stills for PR isn't a bad application either. I've been editing 4K footage on a 2016 MBP 13inch which is only a dual-core, so not particularly powerful. It does well if you render proxies, but the online/offline workflow is a bit of a PITA. Another thing to consider if you're filming yourself is to get multiple cheaper cameras and have more than one angle recording at once. You already have several lenses, so with a dumb EF-MFT adapter you could easily get a second angle for little extra cost.

-

@Anaconda_ @newfoundmass YT is pretty much a brick wall when it comes to quality. You can give it higher quality files, but the quality bump is almost negligible. I haven't done exhaustive testing because it's been covered pretty well in this thread (look for the posts by Bryan Worsley): https://forum.blackmagicdesign.com/viewtopic.php?f=21&t=85016

-

The plot thickens.... YT delays the audio by an additional amount, and at the lower resolutions delays the audio by more. Turns out that due to YT delay, the video above from John was actually one frame ahead of the beat and TWO frames ahead of the beat. John has this followup, which includes an adjusted comparison, and I think there's much more of a difference now:

-

Sounds like you're at the start of your journey. A few thoughts that hopefully will be of use:

Think very clearly about why you want 4K. If you want better image quality than your Canon Rebel then 1080 would do that. Your Canon Rebel outputs 1080p files, but they are absolutely terrible quality compared to almost any other camera that shoots 1080. You would be amazed.

Long story short, I have been where you are now. I had a Canon 700D, and noticed that the video wasn't "sharp". I thought I needed 4K. I spent thousands of dollars on a Canon 4K cinema camera (the Canon XC10), a 4K display, and a new computer to handle 4K. After shooting with the XC10 for about a year I realised that it didn't have the type of image I was looking for. I also learned that the 4K it shot was actually a lot worse than many other cameras' 1080p footage. This is because the XC10 had 4K and a high bit-rate, but these aren't the only things that matter. I moved to a GH5 and I am now shooting with that and really happy with the images. The GH5 can shoot great quality 4K - it is still right up there with the latest cameras. But here's the thing, I'm now going back to 1080p. The quality of the 1080p image from the GH5 is just as good as the 4K modes in most situations, the file sizes are better, and the workflow in post is much easier to deal with.

There is no "best" camera. Every camera is a compromise, and a very serious one at that. A radical example - my GH5 is better than the $100K Alexa LF they shoot Hollywood blockbusters on, because my GH5 has image stabilisation and the Alexa doesn't. That stabilisation is critical in the work I do and how I shoot. I say this as a prelude to the next point....

Cameras are all very different and the "best" camera is the one that will suit you. The P4K has a great image and the files are nice to edit in post, but file sizes are huge, battery life is abysmal, and it doesn't have stabilisation so you need a rig of some kind. In these ways the Olympus is completely different: the image is good but not as good, files are smaller but are much more difficult to edit in post, battery life is probably fine (I can't recall), and it has stabilisation. The first step in choosing a camera is working out what and how you shoot, then finding the best camera for you. If you're a chef you can buy the best fork in the world but it's still going to be completely useless for eating soup!

I just use a USB card reader from Transcend. I've found that card readers can be absolutely rubbish, but the Transcend USB 3.0 one is cheap and the data rates are huge, much faster than the high-speed cards I have.

4K is a serious, serious headache that you should seriously reconsider before you start down this path. Compared to 1080p, 4K has 4 times the number of pixels, which means everything in your entire setup needs to have 4 times the performance.

A suggestion that you should consider is buying a cheap 1080p camera (such as a GH3) and using it to work out what you really need. It would be a very small investment, and combined with a Metabones SB you could use the lenses you have. It would give you the opportunity to shoot a bunch of projects on it, edit them, learn the workflow, and learn the pros and cons. Once you've really got an understanding you can sell it (probably for as much as it cost you) and then upgrade with much more confidence.

Here's what a cheap but good 1080p camera (the GH3) looks like:

-

I quoted you here from the other thread (to keep things tidy and on-topic) to ask you about using the "slow" setting you mention above. I tried a slow encode in one of my above tests and found it to have an insignificant difference in IQ compared to the normal setting. Have you compared the two settings directly? I do recall the "slow" setting was dramatically slower though!

-

I've been doing various tests of image quality (Prores vs h264/h265) and also YouTube quality, and I'm thinking my next test will be whether YT at 4K is good enough quality to tell the difference between a 4K acquisition / 4K upload and a 2K acquisition / 4K upscaled upload. The reason I mention this is that you're wondering if 4K 420 8-bit would be "good enough" for YT, whereas I think there's a chance that even 2K 422 might be "better" than 4K YT. My experience is that the difference between a great 4K master and a barely passable 4K master is basically invisible once YT compression has done its thing and brutally crunched the image.

-

You might be looking for a "flash bracket" - something that you can mount to the camera using the tripod screw underneath, and will provide other mount points for various things. They come in all shapes and sizes. Here's the BH page for some ideas and photos, but they're all over ebay and other stores. https://www.bhphotovideo.com/c/buy/Flash-Brackets/ci/653/N/4168864826 I've got a few different designs, some are aluminium, some steel, some good some cheaper, etc. You can combine various ones together, to make whatever you like really.

-

Not a stupid question at all, and I use a micro SD card in my GH5 via an adapter and it records just fine. I'm recording 4K at 150Mbps or FHD at 200Mbps with it and an adapter. The cards I have aren't UHS-II so I can't do the 400Mbps modes continuously, but they're just fine. I tested a high speed micro SD card in various SD adapters once via a speedtest and all the adapters worked just fine and all got the very high speeds that the card was rated at. What camera are you using?
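As a rough sanity check on card speeds, here is a sketch that converts the recording bitrates into the sustained write speed a card needs. The 30 MB/s card figure is a hypothetical example, not a measurement of my cards, and real cameras may want extra headroom on top.

```python
def required_mb_per_s(bitrate_mbps):
    """Sustained write speed (MB/s) needed for a given recording bitrate (Mbps)."""
    return bitrate_mbps / 8

def card_can_sustain(bitrate_mbps, sustained_write_mb_per_s):
    """True if the card's sustained write speed covers the recording bitrate."""
    return required_mb_per_s(bitrate_mbps) <= sustained_write_mb_per_s

card_speed = 30.0  # hypothetical card: 30 MB/s sustained write

results = {mbps: card_can_sustain(mbps, card_speed) for mbps in (150, 200, 400)}
for mbps, ok in results.items():
    needed = required_mb_per_s(mbps)
    print(f"{mbps}Mbps needs {needed:.2f} MB/s: {'fine' if ok else 'too slow'}")
```

The key thing is that camera bitrates are in megabits while card speeds are quoted in megabytes, so divide by 8 before comparing.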

-

There's a saying in business - "100% of nothing is still nothing". It's typically used to comment when people who own a business stifle the growth of the business because they want to retain full ownership and control and aren't willing to accept investment from other people. I bring it up because I fully agree with @IronFilm about how people perceive battery choices on new products. It is something that sways people's opinions, and you get more profit from having lots more customers with only a small hit to profit margins. Think of how many times you've seen a review of a monitor or a battery powered LED light and how the reviewer is happy to tell you that something takes "Sony NPF" or "Canon" batteries - they light up because it's something that could be a major pain but isn't. The comments are normally along the lines of "and we've all got a bunch of them already so we're sorted". Think about how the P4K took Canon LP-E6 batteries. They lasted 30 minutes a pop, and (here in Australia anyway) were about $100 each for the genuine ones. Want to have a small rig but be able to shoot all day? Let's imagine you'd need 10 of them. That's AU$1000. Plus chargers. That's a pretty significant percentage of the cost of the camera. Batteries are not a small cost, and worse still, it's got that hassle factor. People resent having to spend hundreds of dollars on batteries. It matters.
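The battery maths is simple enough to sketch. The 5 hours of running time is my assumption for what "all day" means here, chosen because it matches the 10 battery example; the runtime and price are the rough figures above.

```python
import math

def batteries_needed(shoot_hours, minutes_per_battery):
    """Whole batteries needed to cover the running time."""
    return math.ceil(shoot_hours * 60 / minutes_per_battery)

minutes_per_battery = 30   # rough P4K runtime per LP-E6, as above
price_each_aud = 100       # approximate genuine battery price, as above
shoot_hours = 5            # assumed running time for "all day"

count = batteries_needed(shoot_hours, minutes_per_battery)
total_cost = count * price_each_aud

print(f"{count} batteries, AU${total_cost} before chargers")
```

Change the runtime per battery to see how quickly the cost drops with a more efficient camera or a bigger battery.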

-

With all my codec comparing and YT quality measuring, I'm interested to know how people are actually delivering their content? I've made it multiple choice so you can select more than one option, so if it varies a little then put perhaps the two or three most common methods you use.