hoodlum


A nice upgrade to the LX100, albeit at the new premium prices. It is great to see that Panasonic was able to get their latest processor, battery and an EVF in a smaller body. I am interested to see if there are any recording limits due to heat. All of that new technology has added some girth.

-

Apparently, the A7V AF is struggling with 3rd party lenses. When Sony was asked about the issue, their response was “We do not guarantee compatibility”. Maybe Sony has dumbed down the AF for 3rd party lenses. Something to watch.

-

I guess the US doesn't want those in other countries to invest in their businesses. I don't think they realize the consequences if this goes through.

https://www.theglobeandmail.com/gift/88b464f77c2863c66a9326ee3eb93664ae337885ca5483f02881b645ad033c36/SWHM3X32RBFQFM2OX4MG5IDEWY/

Canadian investors holding U.S.-listed securities could see a sudden spike in the amount of tax they owe under a recent U.S. bill that has been tabled as a retaliatory measure against what it calls “discriminatory taxes” of foreign countries, including Canada’s digital services tax.

If passed, the proposed legislation would add 5 percentage points to the withholding tax rate each year for four years on certain types of U.S. income for anyone living in a country that imposes a tax that the U.S. considers discriminatory or extraterritorial for U.S. citizens or corporations. The additional 20-per-cent withholding tax would remain in place as long as the other country’s disputed tax is in effect.

The proposed bill appears to be targeting jurisdictions that have implemented a digital services tax on large U.S. technology companies or have an undertaxed payments rule (UTPR). Canada’s DST was enacted in 2024.

The proposal means that Canadians who own U.S. securities that pay dividends or interest, or have realized gains, could see a large tax increase, said Kris Rossignoli, a cross-border tax and financial planner with Cardinal Point Wealth in New York.
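To make the quoted arithmetic concrete, here is a rough sketch of how the extra withholding would ramp up: 5 percentage points per year, capped after four years at 20. The function names and the 15% starting rate are illustrative assumptions of mine, not taken from the article or the bill, and how the surcharge would actually interact with treaty rates is not something the article spells out.

```python
# Sketch of the ramp described in the article: +5 percentage points per
# year for four years, then held at +20 while the disputed tax remains.
# Function names and the base rate below are hypothetical.

def added_withholding_points(year, step=5, ramp_years=4):
    """Extra withholding, in percentage points, in a given year.

    year 1 is the first year the measure applies; year 0 or earlier
    means the measure is not yet in effect.
    """
    if year < 1:
        return 0
    return step * min(year, ramp_years)


def total_withholding_rate(base_rate, year):
    """Base rate plus the surcharge, both in percent (simple addition assumed)."""
    return base_rate + added_withholding_points(year)


if __name__ == "__main__":
    assumed_base_rate = 15  # assumption for illustration only
    for year in range(1, 7):
        extra = added_withholding_points(year)
        total = total_withholding_rate(assumed_base_rate, year)
        print(f"year {year}: +{extra} points, total {total}%")
```

The surcharge stops growing after year four, which matches the article's "additional 20-per-cent withholding tax".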

-

sanveer reacted to a post in a topic:

Panasonic Interview

-

While the translation is difficult to read and there are not a lot of details, we can expect to see an S1H follow-up and more compact cameras from Panasonic. Below are a couple of the comments from the interview.

https://qicai.fengniao.com/537/5378995.html

In terms of market demand insight, Panasonic is keenly aware of the trend of recovering demand in the fixed lens camera market. With the increasingly powerful cell phone photography function, the competition in the cell phone market has become more and more intense. Fixed lens cameras have become an important direction for cell phones to realize differentiated competition due to their unique functional characteristics, such as the long focal length function, which opens up new possibilities for cell phone photography. Based on this market change, Panasonic is looking forward to the fixed lens camera market and is actively planning the introduction of new products. Although it is not yet possible to determine the specific time of the release of new products, it can be seen that Panasonic in this field has launched an in-depth thinking and layout.

In addition, Panasonic in the LUMIX S1H follow-up product planning is also actively exploring the LUMIX S1H as a positioning of the creation of film cameras, in the market has a unique position. With the continuous development and changes in the field of movie micro camera, Panasonic has received a large number of opinions and suggestions from various parties. In terms of product form, there are different expectations from users and the market. Some want the product to be similar to the FX3, cutting out the warship section and focusing on movie and video functions; some prefer to keep the micro-single form while strengthening the video function; and others expect to make it a square camera similar to the FX6. In terms of functional requirements, 8K 60p or even 8K 120p video shooting and built-in ND filters have become the focus of users' attention.

In order to better meet the market demand, Panasonic merged with its professional-grade system imaging division in 2024, integrating the technical resources of both parties and engaging in deeper and closer discussions with technicians. Although the launch date of the next generation of the LUMIX S1H has not yet been determined, it can be seen that Panasonic is fully committed to the development of this product, and is striving to bring users an even better tool for movie creation.

-

An APS-C sensor would have made more sense. New prime lenses could be more compact and the faster sensor readout would have helped reduce rolling shutter. And maybe they could have implemented IBIS as well. With an m43 sensor, IBIS could certainly have fit in this body.

-

Sigma says BF stands for Beautiful Foolishness. https://petapixel.com/2025/02/23/the-sigma-bf-stands-for-beautiful-foolishness/

-

Juank reacted to a post in a topic:

Panasonic Lumix S1R Mark II coming soon

-

John Matthews reacted to a post in a topic:

Panasonic Lumix S1R Mark II coming soon

-

Does Sony have an app providing similar capabilities?

New Lumix Flow app features:

Designed for videographers
Use an iPhone as an external monitor
Storyboarding
Editing
LUT creation and direct import into the camera
A filming assistant in a smartphone

-

sanveer reacted to a post in a topic:

Canon V1 1.4” sensor 16-50mm

-

ntblowz reacted to a post in a topic:

Canon V1 1.4” sensor 16-50mm

-

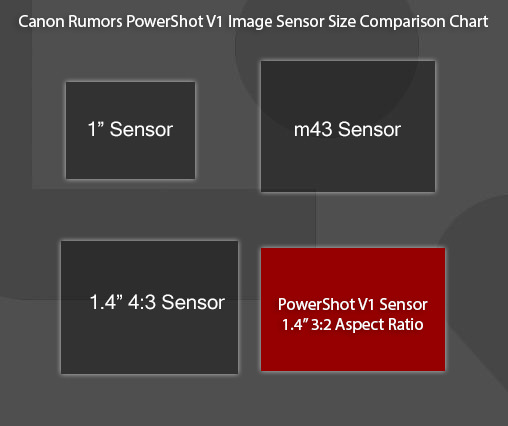

The m43 sensor is approx 1.35”, so I guess Canon could say it is larger than an m43 sensor, although the sensor area is likely about the same due to the different aspect ratio. But the comparison to m43 may give us an idea of the lens aperture. Based on the LX100, the V1 lens could be f2.0 to f2.8.
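For what it's worth, the size comparison above can be roughed out numerically. This is only a sketch: it assumes the usual rule of thumb that one "inch" of sensor type works out to about 16 mm of diagonal, the standard 17.3 x 13.0 mm m43 dimensions, and that the leaked 1.4" 3:2 figure follows the same convention. The function and constant names are made up for illustration.

```python
import math

# Assumption: rule-of-thumb conversion for "inch-type" sensor sizes.
MM_PER_TYPE_INCH = 16.0

# Standard Micro Four Thirds sensor dimensions in mm (4:3 aspect).
M43_WIDTH_MM, M43_HEIGHT_MM = 17.3, 13.0


def dims_from_type(type_inches, aspect_w, aspect_h):
    """Estimate width and height in mm from an inch-type size and aspect ratio."""
    diagonal_mm = type_inches * MM_PER_TYPE_INCH
    unit = diagonal_mm / math.hypot(aspect_w, aspect_h)
    return aspect_w * unit, aspect_h * unit


def type_from_dims(width_mm, height_mm):
    """Estimate the inch-type size from physical dimensions in mm."""
    return math.hypot(width_mm, height_mm) / MM_PER_TYPE_INCH


m43_type = type_from_dims(M43_WIDTH_MM, M43_HEIGHT_MM)
m43_area = M43_WIDTH_MM * M43_HEIGHT_MM

# Leaked V1 figure: 1.4" with a 3:2 aspect ratio (dimensions are estimates).
v1_width, v1_height = dims_from_type(1.4, 3, 2)
v1_area = v1_width * v1_height

if __name__ == "__main__":
    print(f"m43 as inch-type: {m43_type:.2f}")
    print(f"V1 estimate: {v1_width:.1f} x {v1_height:.1f} mm")
    print(f"area ratio V1/m43: {v1_area / m43_area:.2f}")
```

Under those assumptions the two areas come out within a few percent of each other, since the wider 3:2 frame trades height for width.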

-

Since we are likely less than 10 days away from the announcement, I thought I would start a separate thread. Below are the leaked details so far.

24MP CMOS (Canon Made)
1.4″ 3:2 Sensor
DIGIC X Series
DPAF
Multi-Function Shoe
Approximately 16-50mm (35mm Equivalent)
3″ LCD (1 Million Pixels)
Articulating Screen
1.4x Photo Crop (17mp 70mm equiv)
4K Video (Slight crop for aspect ratio)
4K 60P / 1080 120P (Unconfirmed)
Vertical Video Capable
New Streaming Features (No detailed information)
RAW, C-RAW, Dual Pixel RAW
H.265 / HEVC
C-Log 3 / HDR PQ
A full line of accessories
February/March 2025
Price: $899 USD

-

IronFilm reacted to a post in a topic:

Increasing interest in compacts, something is strange

-

Increasing interest in compacts, something is strange

hoodlum replied to Andrew - EOSHD's topic in Cameras

Canon is expected to announce the V1 compact with a 16-50mm (FF equiv) lens and 1.4" sensor before CP+. We will soon see Canon's take on this.

-

Marcio Kabke Pinheiro reacted to a post in a topic:

Increasing interest in compacts, something is strange

-

IronFilm reacted to a post in a topic:

Increasing interest in compacts, something is strange

-

Increasing interest in compacts, something is strange

hoodlum replied to Andrew - EOSHD's topic in Cameras

It looks like we may finally get a compact camera with a wide angle to normal zoom, 8 years after the Nikon DL cameras were cancelled. Except it is Canon doing it with the V1. This is exciting news for me and it could be an automatic buy depending on the implementation.

https://www.canonrumors.com/the-powershot-v-series-will-become-canons-new-line-of-compact-cameras/

24MP CMOS Sensor (approx)
Approximately 16-50mm (35mm Equivalent)
3″ Screen (Approx 1 million pixels)
Screen has a 170° viewing angle
4K Video (Very slight crop, but likely for aspect ratio)
1.4X Crop Mode for Stills
RAW, C-RAW, Dual Pixel RAW
H.265 / HEVC
C-Log3 / HDR PQ
Launch: Late Q1/Early Q2 2025

-

Increasing interest in compacts, something is strange

hoodlum replied to Andrew - EOSHD's topic in Cameras

My daughter in university requested a compact camera last Christmas. She specifically mentioned the Canon Powershot Elph 180 due to its looks and also since her friend uses the same camera, so they could share settings. She liked the photos her friend took with that camera over those from her iPhone. I believe smartphone cameras have gone too far with HDR, along with sometimes getting the colour wrong. Camera companies just get the colours right for the most part. She also specifically mentioned the flash. I think the flash is the number 1 feature that is currently too limited on smartphones, and that is a big part of what is driving the compact camera trend. While some may be going for the retro look, that is not the main factor. I suspect camera companies still don't understand this and are not filling this large niche. I am not seeing many new compact cameras with a built-in flash.

-

Something is nagging at me to go back to smaller sensor

hoodlum replied to Andrew - EOSHD's topic in Cameras

It is a great body but it is still using a non-stacked sensor, while Fuji and Olympus already had stacked sensors a couple of years ago.

-

Something is nagging at me to go back to smaller sensor

hoodlum replied to Andrew - EOSHD's topic in Cameras

I am looking forward to seeing what Canon has planned in 2025 for APS-C. This will also force Nikon and Sony to start taking APS-C more seriously, providing more competition for the GH7 and Fuji.

https://www.canonrumors.com/there-are-a-couple-of-higher-end-rf-s-zoom-lenses-coming-with-the-eos-r7-mark-ii/

We have been told that Canon will be shaking up its APS-C line “significantly” later in 2025. It sort of sounds like there are going to be some departures instead of simple Mark II versions of the APS-C line. The EOS R7 Mark II will be the first APS-C Canon camera to get a stacked sensor. There was no word on the resolution of the new sensor, but it would be cool to see it go slightly above the current 32.5mp to give it 8K capabilities. As we’ve mentioned before, and we’re being told again, the EOS R7 Mark II will be going “up market”, so it may be a true EOS 7D Mark II successor.