
Everything posted by iaremrsir

-

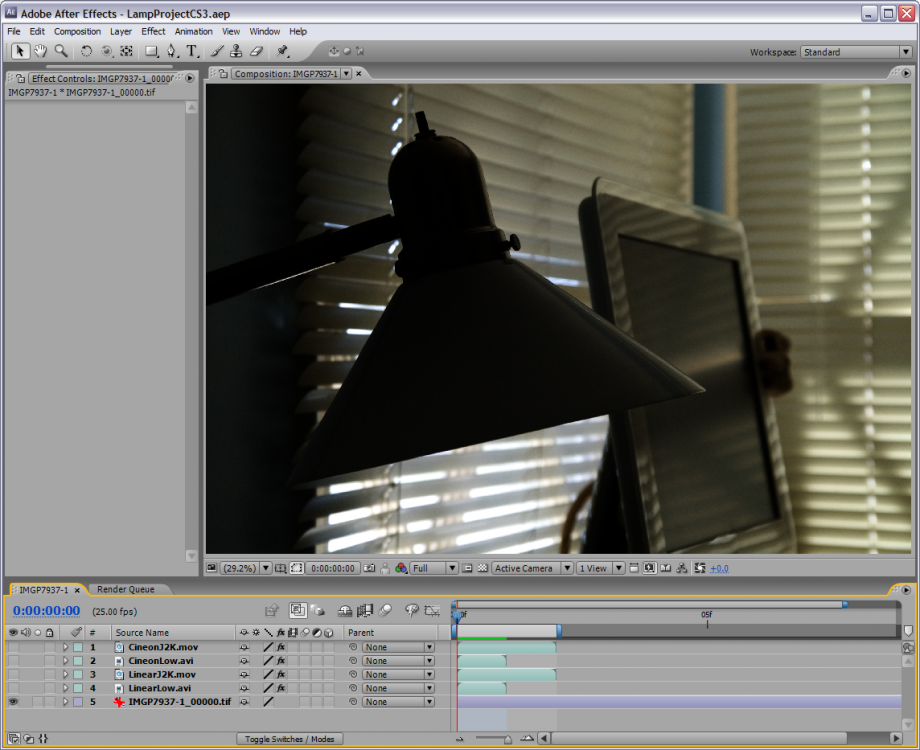

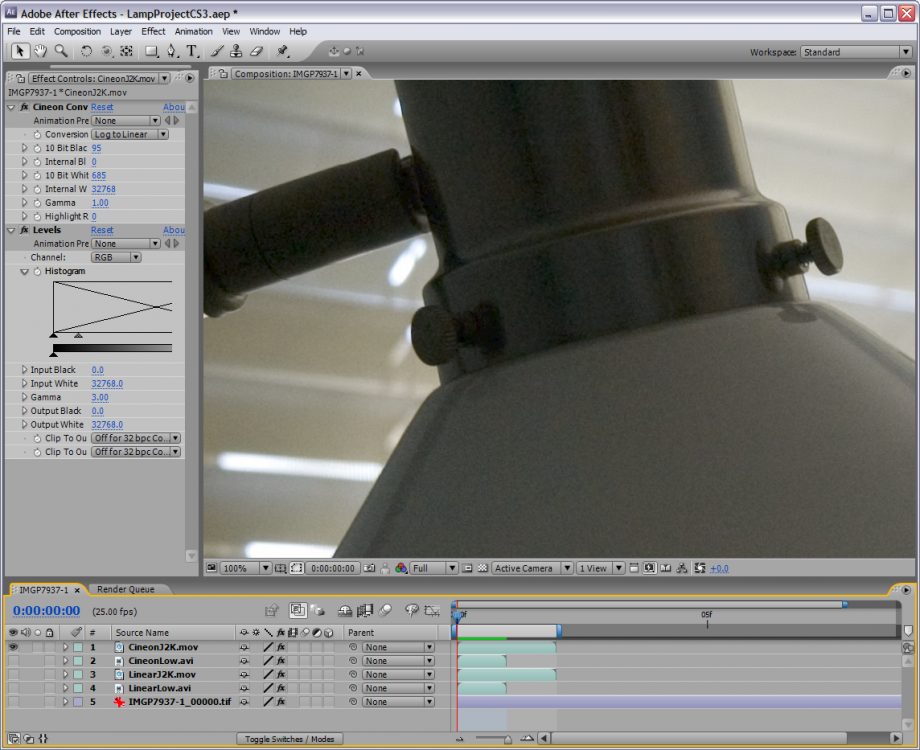

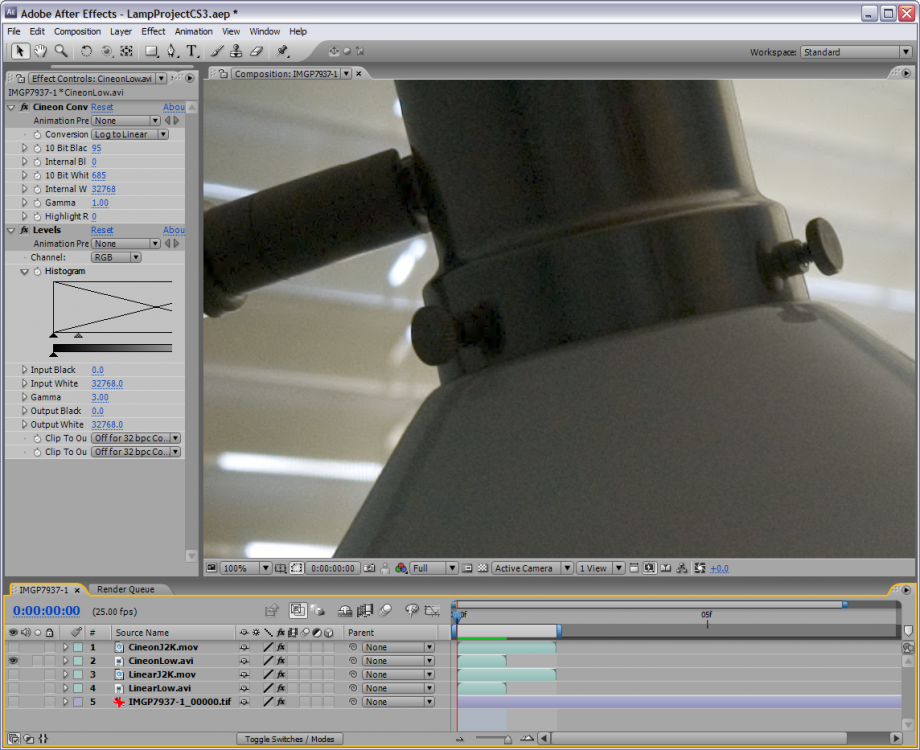

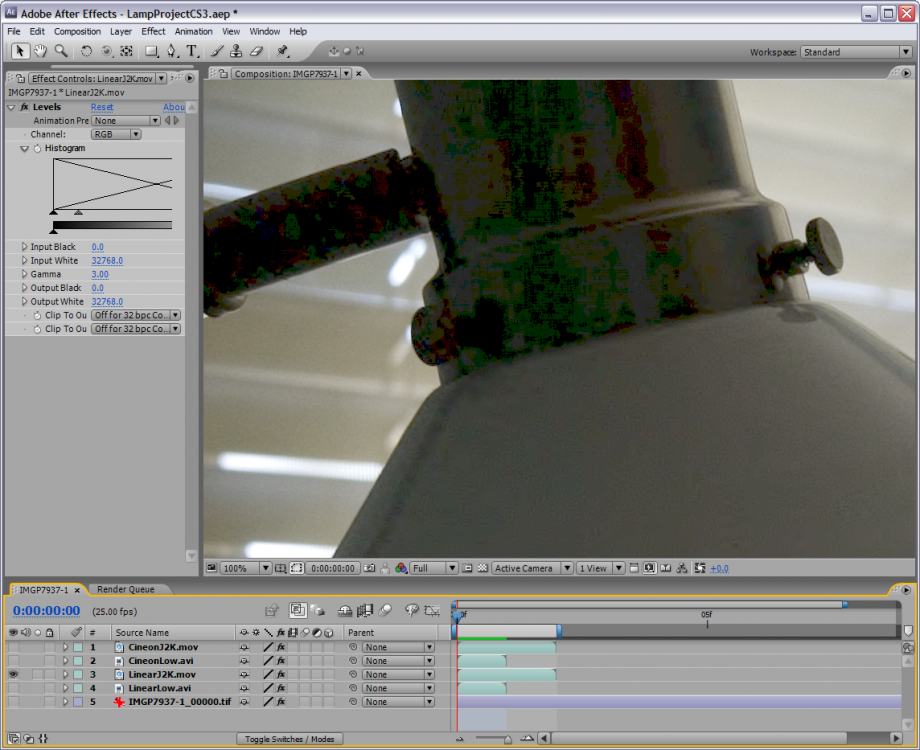

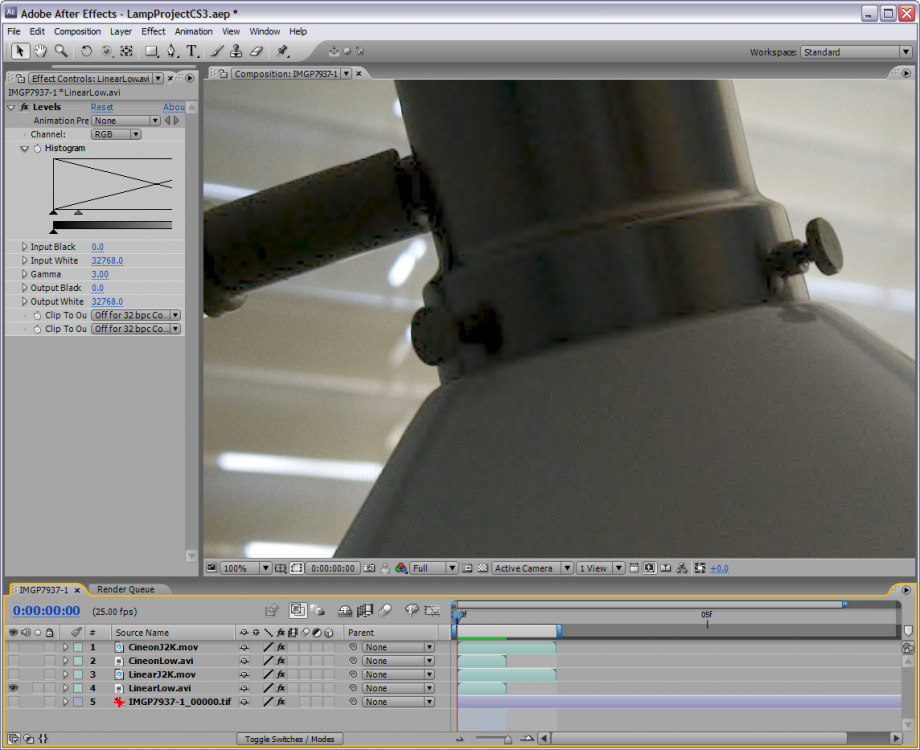

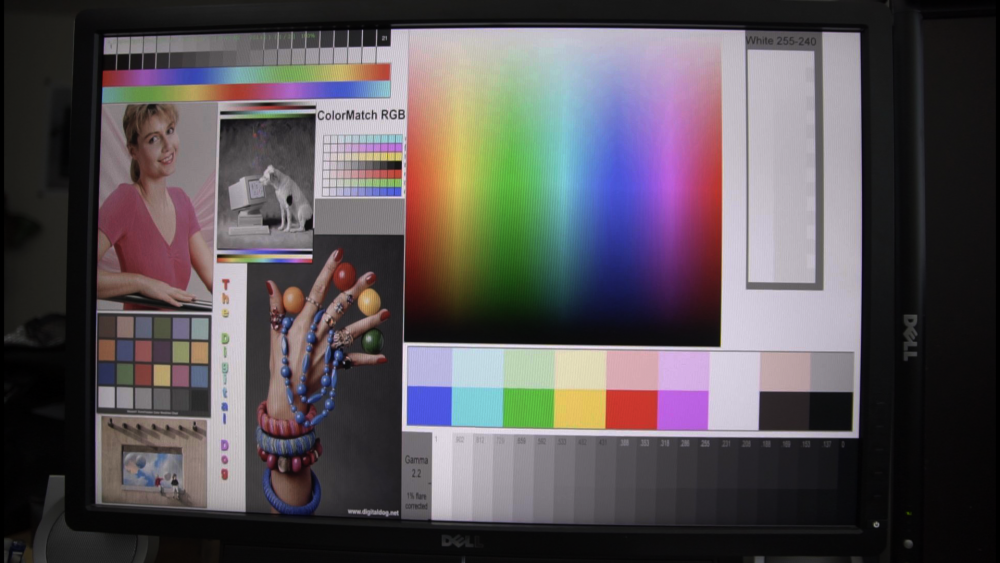

I directly addressed the 8-bit H.264 log-encoded footage. I already said that detail in the lower end is more likely to be destroyed by heavy compression, and that includes noise. They are getting a wider range of color intensities, but less color saturation, when shooting a wide gamut color space.

No, the effect of compression is not the same in both cases, because that's not how compression works. If the image isn't identical, it isn't going to compress the same way. I said that compression smooths noise and details in the shadows. A log color space image is less likely to show compression artifacts and that strange blockiness, and it will look more natural and retain the structure of the noise. The noise is the same before compression, regardless of color space. Only after a destructive process like compression does something like noise change.

There's no good guy or bad guy in this. It's just debate, the nature of the beast so to speak. I enjoy the mental exercise.

Here are some color-managed samples from Dave Newman of CineForm from a decade ago. These show the effects of compression on data in the shadows. And before you say standard profiles aren't linear, I know they aren't. But they are fairly similar once you get 3 to 4 stops below middle gray, where both are down to single-digit code values per stop and sitting below 16/255. This is an extreme example, roughly equivalent to 10-bit 4:2:2 50 Mbps H.264, except that these are wavelet compressed, and wavelet codecs generally handle noise a bit better than DCT-based codecs.

Uncompressed Linear:

Compressed Linear CF Low and Linear J2K:

Cineon CF Low and Cineon J2K:

And some reading on the benefits of log curves compared to power curves:

https://cineform.blogspot.com/2012/10/protune.html
https://cineform.blogspot.com/2007/09/10-bit-log-vs-12-bit-linear.html
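For anyone who wants to poke at the mechanism, here is a minimal Python sketch of why heavy compression erases faint shadow texture first. It uses a JPEG-style orthonormal 8x8 DCT with a single uniform quantizer step, which is an assumption for illustration, not H.264's integer transform or its rate control. The step size of 16, the patch levels, and the "3x more codes" for the log patch are all hypothetical numbers chosen only to show the threshold effect: a texture coefficient smaller than half a quantizer step rounds to zero and the block comes back flat.

```python
import numpy as np

def dct_matrix(n: int = 8) -> np.ndarray:
    """Orthonormal DCT-II basis matrix; row k is the k-th cosine basis vector."""
    k = np.arange(n)[:, None]
    x = np.arange(n)[None, :]
    c = np.cos(np.pi * (2 * x + 1) * k / (2 * n))
    c[0, :] *= np.sqrt(1.0 / n)
    c[1:, :] *= np.sqrt(2.0 / n)
    return c

def quantize_block(block: np.ndarray, step: float) -> np.ndarray:
    """Forward 2-D DCT, uniform quantization of every coefficient, inverse 2-D DCT."""
    c = dct_matrix(block.shape[0])
    coeffs = c @ block @ c.T                 # forward transform
    coeffs = np.round(coeffs / step) * step  # the lossy step: small coefficients become 0
    return c.T @ coeffs @ c                  # inverse transform

C = dct_matrix()
texture = np.outer(C[3], C[5])  # one unit-energy 8x8 texture pattern (a single DCT basis image)
STEP = 16.0                     # hypothetical quantizer step size

# Hypothetical shadow patches: same texture, different number of code values.
dark_standard = 12.0 + 6.0 * texture   # faint texture low in the code range
lifted_log = 40.0 + 18.0 * texture     # same texture lifted and spread over ~3x the codes

for name, block in [("standard", dark_standard), ("log", lifted_log)]:
    out = quantize_block(block, STEP)
    print(f"{name:8s} texture std before {block.std():.3f}, after {out.std():.3f}")
```

The "standard" patch comes back as a perfectly flat block (the plastic look), while the lifted patch keeps its texture, only coarsened. Real encoders use per-frequency step sizes and adaptive quantization, so treat this as an illustration of the principle rather than a model of any camera.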

-

Is a sub $900 or 1.200 laptop enough for raw and/or 4k in Davinci?

iaremrsir replied to tomastancredi's topic in Cameras

My Dell 5000 series gaming laptop can play back a UHD timeline with GH5 and 4K XAVC footage from an F5. I also played with 4K Sony F5 raw in a 2K timeline with no hiccups there. In all honesty, though, I think if you went with an Alienware 13 or 15 you'd be better set up for more complex projects. With a Dell 5000/7000 series gaming laptop, you'd be good for editing, grading, and low-intensity work at high resolution. This is all in Resolve 14, by the way; Premiere has been too sketchy with raw performance for me. But I do agree that a small desktop setup for around the same price will get you much more bang for your buck.

-

Hi, I'm Eddie. I designed the Bolex Log color specification and the image processing pipeline for Digital Bolex towards the end of its production run. I also wrote the plugin that lets people process CineForm Raw color in DaVinci Resolve as if it were DNG. I'm not saying this to get into a pissing contest. I'm saying it as someone on the manufacturer side of things who has to know the ins and outs of the product and how it's used with other tools.

Shoot 8-bit H.264 on a Canon C100, or any of their DSLRs for that matter, then compare it to ProRes recorded over HDMI to an external device. You'll often see that the ProRes has more noise and more fine detail/texture. This is because the in-camera H.264 encoding smooths out the noise, while HDMI outputs uncompressed data. You're speaking as if the main reason detail is lost in 8-bit is the log curve, when in reality the main loss of detail has been heavy compression. I already agreed that using a log curve in 8-bit will redistribute code values so that more space is given to the mids and shadows, meaning fewer code values per stop when storing HDR data.

We're not debating sensor data; otherwise we'd be talking about analog stages and 16-bit or 14-bit uncompressed data. So in this case, compression has everything to do with the image data. If you applied a logarithmic gain at the analog stage (ignoring the electron-multiplying case), noise would be much higher than if you applied the log curve after digitization. It's not pretty, and it's not philosophical in any measure. And these log curves can't pull out data that isn't there. It's well known that using a log curve will boost the appearance of recorded noise at any bit depth, in any color space, etc. I'm not arguing that log fixes noisy data. I'm arguing that recording log lets you record data in a way that keeps more detail across the range, and lets you expose (ETTR) in a way that better minimizes the appearance of noise in post.
Trust me, I let out a heavy sigh any time I see someone compensating for a low-light scene or poor scene lighting by recording log.

I didn't say you said they don't. But you said professionals are lighting within 6-stop ranges. They have millions to pour into set design, lighting, and wardrobe. I was just pointing out that, in spite of lighting like that, they still shoot log or raw (which is later interpreted as log in the grading tool). Also, they're going to be shooting 10-bit, 12-bit, or 16-bit more often than not, so they aren't worried about losing code words per stop, which takes away the main argument for using a standard profile.

I didn't say you did. But it isn't nonsensical. Canon raw has C-Log applied to it before being sent over SDI to external recorders to be saved as RMF. ARRI, BMD, and CineForm all write their raw formats with log as part of the specification. While it's not the Cineon-type log they use, it's log nonetheless.

But you can grade in a log color space, hence the DaVinci Resolve Color Managed timeline and ACEScc/ACEScct. Whenever you grade on top of log image data, your working ("timeline") color space is log.

This is where we get out of the realm of objectivity. Because there are technical trade-offs on both sides, it's up to whoever is shooting to decide which is preferable. There is no clearly defined technical or mathematical winner in this case (which is one of the reasons I'm happy I don't have to deal with H.264-compressed, 8-bit recorded data anymore). And I'm not saying there is one that checks all the boxes in this case.

When I say "retain detail through compression," I'm talking about codec compression. Like I stated earlier, a standard profile combined with high levels of compression will reduce texture in the low mids and lows. That reduces flexibility and the overall naturalness of the image.

That is an issue of grading, not the source material. When I grade log material, I have no issue getting thick colors from it.
Again, log doesn't add noise; it just increases its appearance. Once the image is graded, the noise will look similar to that of the standard profile, but the texture of the shadows will look more natural, especially in motion.

But you didn't grade to match, which means the higher contrast and extra sharpness of the standard profile will increase the perceived detail of the aliasing and text. And in spite of that, we can see that the aliasing you point out in the standard profile is also present in the log image! When graded down to match, you'll get similar, if not identical, sharpness from the log image. The difference between the two is flexibility. I took the log image you posted and graded it down to more closely match your standard profile, without the saturation boost, and you can see that the apparent sharpness is similar and the noise isn't as bad as you were making it out to be.

-

But you said "standard profiles," which are narrow-gamut SDR color spaces similar to sRGB/BT.709. So yes, you did indirectly say you get more color with a smaller gamut.

You're applying a line of thought that suggests these log specifications are applied at the analog stage before digitization, which is the only place a color transform could add noise. Any noise in the raw data before the color transform will be present in the image regardless of the color transform. The only difference is that in the other color space the noise is pushed further down and then wiped out, along with any other fine detail in the shadows, by lossy compression. Again, compression is what gets rid of detail, more so than quantization/bit reduction. Yes, in 8-bit you lose color resolution, but not to the extent that you are suggesting.

If you've ever looked at the characteristic curves of a log curve and something like linear or BT.709, you'll see that once you get to around 3 to 4 stops below middle gray, a "standard profile" drops detail low enough that a codec like H.264 can smooth it out (which also smooths out noise and gives that plastic look or dancing blocks). With a log curve, shadow detail is held above that threshold and is less likely to be smoothed out entirely (which lets you avoid the plastic look and shadow blocking).

So yes, you might get more code words per stop with a "standard profile," but highly saturated colors are more likely to clip, you lose fine detail in the shadows, and you have a lot less room in the highlights. When you get into the mathematics of it, yes, you lose some code words per stop with log, but you can record more saturated colors, more highlight detail than just 2.3 stops, and detail and texture in the shadows. Even if an expert cinematographer lit a scene, there will more often than not still be values in the deep shadows and possibly specular highlights. Of course it's not ideal in 8-bit, but nothing is.
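The characteristic-curve comparison above can be checked numerically. This is a small Python sketch that prints full-range 8-bit code values at each stop below 18% gray for the BT.709 OETF and for ARRI Log C, which stands in here for the log family in general. Assumptions: the Log C constants are the v3 EI 800 parameters as I recall them from ARRI's published white paper (verify before relying on them), and full-range 0 to 255 scaling is a simplification; video-range 16 to 235 encoding would shift every number.

```python
import math

def bt709_oetf(l: float) -> float:
    """ITU-R BT.709 OETF: scene-linear value in [0, 1] -> signal in [0, 1]."""
    if l < 0.018:
        return 4.5 * l
    return 1.099 * l ** 0.45 - 0.099

def logc3_ei800(x: float) -> float:
    """ARRI Log C v3 encoding at EI 800 (constants assumed from ARRI's white paper)."""
    cut, a, b, c, d, e, f = 0.010591, 5.555556, 0.052272, 0.247190, 0.385537, 5.367655, 0.092809
    if x > cut:
        return c * math.log10(a * x + b) + d  # logarithmic segment
    return e * x + f                          # linear toe near black

def code8(v: float) -> float:
    """Full-range 8-bit code value (0..255), left unrounded for comparison."""
    return 255.0 * min(max(v, 0.0), 1.0)

GRAY = 0.18
for stops_below in range(0, 7):
    lin = GRAY / 2 ** stops_below
    a, b = code8(bt709_oetf(lin)), code8(logc3_ei800(lin))
    print(f"{stops_below} stops below gray: BT.709 {a:6.1f}   LogC {b:6.1f}")
```

Reading the table both ways makes the trade-off explicit: BT.709 spends many more code values on the stop just above middle gray, while the log curve keeps deep shadows several times higher in the code range, which is where the compression threshold argument applies.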
This doesn't even touch on post-production color management, where knowing the specifics of the color space is vital.

You just said wide gamuts are able to store more intense values. That means they can store the more intense values that the raw data contains, meaning they can store more colors regardless of the resolution (bit depth) those colors are quantized into.

Yet cinema and TV have been shot in log, and more recently raw, then graded in log working color spaces for decades.

-

Like I said with the sandbox example, you don't lose color information when using a log color space with a wide gamut. It's literally the opposite: a wide gamut stores the most possible color information. These curves just change where the data is mapped. The log curve redistributes linear data so that the shadows and midtones are more likely to keep detail through quantization and compression. The only things that will cause you to lose information are the aforementioned quantization and lossy compression.

-

No. A small gamut does not equal more data. Okay, this isn't exactly the best representation, but this is the line of thought I came up with when it clicked for me. Disclaimer: it is literally a childish example, so I apologize in advance haha.

Color space is a sandbox and data is your sand. Say we have a sandbox of arbitrary size as our current point of reference. We start to fill it with sand until a single grain spills out past the boundary of the sandbox. This represents an image with colorfulness at its highest level and a linear lightness that gets mapped to 1.0, or 255, or 1023, etc.

Now let's fill the sandbox with more sand, so that it spills overwhelmingly out to the side. We can't play with that sand, because it's outside our sandbox. So we build a new sandbox encompassing the spill and the old sandbox, far enough out that there is a good amount of space between its boundary and our sand. Our point of reference is still the smaller sandbox, so even though it's the same amount of sand in and around the small sandbox, occupying the same space, it looks like a lot less sand because of the size of the new sandbox. (It's sort of the same idea as using smaller plates when eating: the same amount of food looks like 'more' on a small plate than on a large one.)

This is the same as having a wide gamut or HDR color space. Since most of us have a small point of reference, a relatively small amount of data looks normal, or well saturated and contrasty. And what we would consider a relatively or exceedingly large amount of data in our small color space looks desaturated and low contrast in a wide gamut or HDR color space, because the data is 'small' relative to the size of the larger space.

Hope this makes sense. Cheers
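The sandbox picture maps directly onto a gamut conversion. In this Python sketch, a fully saturated BT.709 red is re-expressed in BT.2020 primaries using the linear-light BT.709 to BT.2020 matrix from ITU-R BT.2087 (rounded to four places). The same color ends up with much less separation between its channels, so if those numbers were shown without conversion on a BT.709 display, they would look desaturated. The max-minus-min `spread` is a crude, hypothetical stand-in for colorfulness, not a real color-appearance metric.

```python
import numpy as np

# Linear-light BT.709 RGB -> BT.2020 RGB (ITU-R BT.2087), rounded to 4 places.
M_709_TO_2020 = np.array([
    [0.6274, 0.3293, 0.0433],
    [0.0691, 0.9195, 0.0114],
    [0.0164, 0.0880, 0.8956],
])

def to_bt2020(rgb709) -> np.ndarray:
    """Re-express a linear BT.709 color in BT.2020 primaries (same color, bigger box)."""
    return M_709_TO_2020 @ np.asarray(rgb709, dtype=float)

def spread(rgb) -> float:
    """Crude colorfulness proxy: largest channel minus smallest channel."""
    return float(np.max(rgb) - np.min(rgb))

red709 = np.array([1.0, 0.0, 0.0])   # the edge of the small sandbox
red2020 = to_bt2020(red709)          # same sand, measured against the big sandbox
print(red2020, spread(red709), spread(red2020))
```

White stays white (each matrix row sums to 1), so only the relative size of the color changes, which is the "small plate vs. large plate" effect.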

-

Okay, I've skimmed through some of the comments here, and hopefully I can add something helpful and clear some things up for everyone.

S-Log, ALEXA Log C, Canon C-Log, REDlogFilm, Bolex Log, BMD Film, V-Log: all the Cineon-type log specifications on modern cinema cameras were designed for exactly that purpose. The main goals of these color spaces (consisting mainly of a color gamut and an optoelectronic transfer function, and sometimes a little more) were the following:

1. Retain the most detail possible through digitization, bit reduction, and/or compression.
2. Integrate/mix footage with motion picture film (Cineon) and other cameras.
3. Consistency and mathematical accuracy, which translate to less guesswork.

I think there's some misconception that these log specifications were designed to be looks. Yes, they have a look designed into them/inherent to them, but that is not their main function. By retaining the most detail possible, you're able to reproduce scene-referred data more accurately, which means you can color with everything the camera was capable of seeing/capturing. So while there is a bit of 'lookery' happening with these color specifications, their main purpose is to retain data.

No. All else being equal, any noise in an image recorded in a log color space will be present in that image when viewed in another color space. These color transforms can't add or subtract noise from an image; they only change its appearance, just like every other bit of signal.

Not sure what you mean by dated, but as long as you're viewing wide gamut or HDR data on a display with a smaller gamut or a more restrictive transfer function, it will look desaturated and low contrast.

Log specifications are not inherently destructive. Bit reduction and compression, however, are destructive (in most cases). The data is already present in the image. A LUT is literally just a quantized representation of a color transform (a continuous function, like our log specifications).
So all it does is change the appearance of the data that's already there. If further explanation is needed, I'd be happy to oblige.
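To make the LUT point concrete: a 1D LUT is the continuous curve sampled at a fixed number of input points, with interpolation in between. This Python sketch uses a made-up log-style curve (`toy_log`, hypothetical and not any vendor's specification) and NumPy's `np.interp` for linear interpolation. It only shows that a LUT is an approximation of the same transform that gets closer as the LUT gets more entries; it reshapes values that are already there.

```python
import numpy as np

def toy_log(x):
    """Hypothetical log-style curve mapping [0, 1] -> [0, 1] (illustration only)."""
    return np.log2(1.0 + 255.0 * x) / 8.0

def make_1d_lut(fn, size: int) -> np.ndarray:
    """Sample a continuous transfer function at `size` evenly spaced inputs in [0, 1]."""
    return fn(np.linspace(0.0, 1.0, size))

def apply_1d_lut(lut: np.ndarray, x) -> np.ndarray:
    """Look up x in the LUT, linearly interpolating between neighboring entries."""
    grid = np.linspace(0.0, 1.0, lut.size)
    return np.interp(x, grid, lut)

x = np.linspace(0.0, 1.0, 10_001)  # dense test inputs
for size in (17, 33, 1024):
    err = np.max(np.abs(apply_1d_lut(make_1d_lut(toy_log, size), x) - toy_log(x)))
    print(f"{size:5d}-entry LUT: max error {err:.5f}")
```

The largest errors sit near black, where the curve bends hardest, which is why LUT size and interpolation method matter most for log curves.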

-

I think this is a good example of what @mat33 was describing. More from Olan! Shot at ISO 400, though I'm not sure whether Olan used any noise reduction here.

-

That was actually one of the things Olan mentioned in his post on the user group. He decided to go with ISO 400 instead of 200 because he didn't want the clean look for this. While it is possible to get the clean look, I think a lot of people like the mojo that ISO 400-800 brings. Now, on a D16 mkII using the KAE-02150 sensor, a clean image would be extremely easy to get, and you wouldn't be sacrificing much highlight range at all.

-

Thought I would show some new footage that was recently posted in our user group by Olan Collardy. Processed in Resolve with Bolex Log. User demand (lots) and time. And of course, no guarantees of anything until it comes from the head honchos.

-

Not bad cameras to be mistaken for! And of course, great work by Arsen. He's been putting out great stuff with the camera since he first got his hands on it.

-

Ahh, so these are in-camera settings rather than characteristics of the actual curve. The black level probably does what it sounds like: it changes where black is recorded. In the case of S-Log, it'd allow you to change the recorded black from 90/1023 to 0, or whatever you like really. The knee is likely the same knee used in many Rec. 709 profiles to keep more data in the highlights. It would act at the very top of the range and essentially "bend" the curve down to keep more range, or to clip at a lower point, depending on whether the knee is applied before or after the signal is clipped by the gamma transform. The higher the value, the sharper the bend. I'm not sure what the black gamma would be. It'd be best to look up the user manual for the camera these settings belong to, to see whether they give you exact units or an arbitrary scale. And I must say, it would be a lot easier to leave the values at default. It would also make color transforms in post much easier when needed. But I do share your desire to understand everything you possibly can about a camera haha
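As a rough illustration of what a knee does, here is a Python sketch of a simple piecewise knee: unity gain up to a knee point, reduced gain (slope) above it, then a hard clip. The parameter names `knee_point` and `slope` and their default values are hypothetical; real cameras expose their own units and curve shapes, which is exactly why the manual matters. The helper converts the extra input range gained before clipping into stops.

```python
import numpy as np

def apply_knee(signal, knee_point: float = 0.85, slope: float = 0.25, clip: float = 1.0):
    """Hypothetical knee: unity gain up to knee_point, gain `slope` above it, then hard clip."""
    s = np.asarray(signal, dtype=float)
    bent = np.where(s <= knee_point, s, knee_point + (s - knee_point) * slope)
    return np.minimum(bent, clip)

def knee_headroom_stops(knee_point: float = 0.85, slope: float = 0.25, clip: float = 1.0) -> float:
    """Extra highlight range, in stops, gained before the bent curve reaches `clip`."""
    max_input = knee_point + (clip - knee_point) / slope
    return float(np.log2(max_input / clip))

print(apply_knee([0.5, 0.85, 1.2, 1.45, 2.0]))
print(f"{knee_headroom_stops():.2f} extra stops of highlight range")
```

A lower slope bends the curve harder and buys more highlight range, at the cost of flattening highlight contrast.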

-

Everything you need to know about S-Log 2 can be found in the technical summary. https://pro.sony.com/bbsccms/assets/files/micro/dmpc/training/S-Log2_Technical_PaperV1_0.pdf As for tutorials on the topic, I'd be willing to help here as much as possible. I highly recommend taking a look at Alistair Chapman's articles on S-Log at xdcam-user.

-

The maximum gain we can have on the EMCCD register is 40x at 0°C (the threshold of diminishing returns), which is about a 5.3-stop boost. The problem with going that far is that we'd likely end up with more noise in the midtones, since we'd have to use more of the low-gain signal. We would technically extend our dynamic range by a stop and get better SNR in the deep shadows, but that gives way to noise in the middle. So by default it'd be a 4.3-stop boost on the EMCCD at normal operating temperature, and we'd be able to get around 14.8 stops (based on well capacity and RMS read noise) without any highlight reconstruction. You'll be able to see pretty far into the dark with this sensor (it is designed with surveillance in mind, after all).

There's also the EMCCD-only mode, where we could use high gain and get around the same dynamic range as the current D16, but cleaner. My main thing with this is that we haven't had any FPN. So that's a sensor with 14+ stops (without HR) and no FPN.

I also forgot to mention that the sensor can switch to "Normal mode" (11+ EV DR) instead of the intra-scene switchable gain to achieve 60fps at 1080p.
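The stop figures above are base-2 logarithms of ratios, which this short Python sketch makes explicit: 40x gain is about 5.32 stops, and a 4.3-stop boost is roughly 20x. The engineering dynamic range is log2(full-well capacity / RMS read noise). The full-well and read-noise numbers in the last line are hypothetical placeholders, not KAE-02150 datasheet values.

```python
import math

def gain_to_stops(gain: float) -> float:
    """Convert a linear gain factor to stops (doublings)."""
    return math.log2(gain)

def stops_to_gain(stops: float) -> float:
    """Convert stops back to a linear gain factor."""
    return 2.0 ** stops

def dynamic_range_stops(full_well_e: float, read_noise_e: float) -> float:
    """Engineering DR: log2(full-well capacity / RMS read noise), both in electrons."""
    return math.log2(full_well_e / read_noise_e)

print(f"40x gain  -> {gain_to_stops(40):.2f} stops")   # about 5.32
print(f"4.3 stops -> {stops_to_gain(4.3):.1f}x gain")  # about 19.7
# Hypothetical sensor figures, for illustration only:
print(f"{dynamic_range_stops(20_000, 2.0):.2f} stops of engineering DR")
```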

-

Since it's based on the 5.5 micron design, without the EMCCD we'd see a base ISO of 200. But since the sensor has what they call "intra-scene switchable gain" (this happens in the analog realm, not digitally like on the ALEXA and BMD cameras), we would likely see a base ISO of ~1600 when using the intra-scene mode. To determine this, I'd basically have to find the operating point of the sensor that gives the most even distribution of dynamic range vs. noise vs. sensitivity. I agree QuantumFilm looks really good spec-wise, but based on what they have built into the sensor, I think I'd want to change a few things, because I'm a control freak with these kinds of things haha. It's a shame they aren't doing S16- or S35-sized sensors. I think we'd actually try CMOS if they were QuantumFilm sensors.

-

That is, in fact, the sensor I'm talking about: the KAE-02150. So, so nice. If you read up on EMCCDs, you'll see that the higher the gain, the lower the effective read noise. It works by varying the voltage on a special gain register; electrons passing through it gain enough energy to knock additional electrons loose from the silicon lattice, a phenomenon called impact ionization. More electrons are created! And electrons are our signal! So more gain = more electrons = more signal = less noise relative to that signal.
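The "more gain = less noise" idea can be written as the standard EM-gain SNR model, sketched here in Python: read noise is divided by the EM gain when referred back to the input, while shot noise is inflated by an excess noise factor (F² is about 2 at high EM gain, 1 without EM gain). Dark current and clock-induced charge are ignored, and the signal and read-noise numbers are hypothetical. The model shows the big win in the deep shadows and also the cost at higher signal levels, which lines up with the midtone trade-off mentioned for the EMCCD.

```python
import math

def emccd_snr(signal_e: float, read_noise_e: float, em_gain: float, excess_noise_sq: float) -> float:
    """Per-pixel SNR for an EM-gain sensor, ignoring dark current and clock-induced charge."""
    shot_var = excess_noise_sq * signal_e      # shot noise variance, inflated by the EM register
    read_var = (read_noise_e / em_gain) ** 2   # read noise referred back to the input
    return signal_e / math.sqrt(shot_var + read_var)

READ = 10.0  # hypothetical RMS read noise in electrons
for signal in (5.0, 10_000.0):  # hypothetical deep-shadow and bright-midtone signals
    plain = emccd_snr(signal, READ, em_gain=1.0, excess_noise_sq=1.0)
    boosted = emccd_snr(signal, READ, em_gain=20.0, excess_noise_sq=2.0)
    print(f"{signal:8.0f} e-: SNR {plain:7.2f} without EM gain, {boosted:7.2f} with 20x")
```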

-

I developed the color science and did all my tests with the camera on Windows. There's the free HFSExplorer tool, or you can buy HFS drivers for Windows for 20 USD.

If we were to make other cameras, I think a D35 and a D16 mk II based on EMCCDs would be my preference. The D16 mk II would hopefully be based on the KAE-02150: 30fps and 14+ EV of DR without any highlight reconstruction. That's without any noise reduction in the sensor circuitry or in software, so it'd keep the same mojo as the D16. The D35 is more of a dream camera for me; nothing like it currently exists. I actually made a list a while ago and posted it on our user group, and Joe was on board with it, had the funding been there to pursue it. It'd basically be an EMCCD version of our sensor, with double the dimensions and resolution horizontally and the vertical dimension increased enough to achieve 16:9.

-

I've found that browser color management has a large effect. So far the only browsers that have given a "correct" image, like what I see when viewing the file from my desktop, are Microsoft Edge, Safari, and, just recently, Chrome. Also, make sure you're not uploading something like H.265/H.264 to YouTube. The generational loss combined with 4:2:0 chroma subsampling causes color shifts. I noticed far less color shift uploading a ProRes or DNx file versus H.264/265, which left my reds looking more magenta.

-

Thanks for posting this @Mattias Burling. It's nice to see that users are happy with the changes we've made.

-

I get around 500-550 MB/s with my 840 EVO and 850 EVO drives. As long as those are connected to a SATA III port, or you have them in RAID, you'll be fine. The big bottlenecks will be CPU, GPU, and RAM. For 4K I recommend a minimum of 32GB of high-speed RAM (1600 MHz or faster), ideally 64GB. For your GPU, the GTX 1070 is a good starting point: it's inexpensive and has 8GB of VRAM, which is a great help for 4K. The CPU will need to be a top-end i7 or a Xeon to edit smoothly. Of course, this all depends on your delivery format. I can get 18fps in a 1080p timeline using 4.6K raw footage on a 3-year-old overclocked CPU, but that drops drastically if I use a UHD timeline. Are you looking to build your own PC or buy a complete system from a company like Dell or HP?
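A quick way to sanity-check whether a drive is fast enough is to estimate the data rate of the footage. This Python sketch computes the uncompressed single-channel (Bayer) raw rate; the 4608x2592, 12-bit, 24 fps figures are assumed values for a 4.6K raster, not taken from any particular camera's spec sheet. Most raw formats are compressed, so treat the result as an upper bound.

```python
def uncompressed_rate_mb_s(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Uncompressed single-channel raw data rate in MB/s (1 MB = 10**6 bytes)."""
    bytes_per_frame = width * height * bits_per_pixel / 8
    return bytes_per_frame * fps / 1e6

rate = uncompressed_rate_mb_s(4608, 2592, 12, 24)  # assumed 4.6K, 12-bit, 24 fps
print(f"{rate:.0f} MB/s vs ~500 MB/s from one SATA III SSD")
```

Even uncompressed, a single stream like this fits under one SATA III SSD's sequential throughput, which is why the CPU, GPU, and RAM end up being the real bottlenecks.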

-

I'm gonna guess a lot. I would think it'd be similar to calibrating a new camera. There are a couple of methods, but the simplest would be to first record grayscale charts to linearize the film stock. Then, using something like the ColorChecker SG or similar, they can create a 3x3 matrix, which gets them most of the way through the color conversion from a white-balanced digital source to the film stock. Then convert to something like LCh space to adjust each channel individually using multiple reference charts, then convert back to linear RGB and apply the film transfer function. There's also the route of measuring the spectral sensitivities of both to get a more accurate result than color charts give, but those tools can cost in the 10K USD range. I don't know if that's what they actually do, but it would technically work.
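The 3x3 matrix step can be sketched as an ordinary least-squares fit between chart patches measured on both sides, assuming both are already linearized and white balanced. In this Python sketch the "true" matrix and the patch values are synthetic (random stand-ins for a chart's patches plus a little noise), so it only shows the fitting mechanics with `np.linalg.lstsq`, not any studio's actual pipeline.

```python
import numpy as np

def fit_3x3_matrix(source_rgb: np.ndarray, target_rgb: np.ndarray) -> np.ndarray:
    """Least-squares 3x3 M such that target ~= source @ M.T (rows are patches, linear RGB)."""
    m_t, *_ = np.linalg.lstsq(source_rgb, target_rgb, rcond=None)
    return m_t.T

rng = np.random.default_rng(0)
true_m = np.array([[1.20, -0.15, -0.05],
                   [-0.10, 1.15, -0.05],
                   [0.02, -0.20, 1.18]])             # hypothetical digital-to-film matrix
patches = rng.uniform(0.02, 0.9, size=(140, 3))       # synthetic stand-in for chart patches
measured = patches @ true_m.T + rng.normal(0.0, 0.002, size=patches.shape)  # small noise

m = fit_3x3_matrix(patches, measured)
print(np.round(m, 3))
```

A plain 3x3 fit only captures linear crosstalk between channels; the per-hue adjustments in something like LCh are what handle the nonlinear remainder.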

-

Yeah, it is. The sensor is from the same family as the D16's. I've never had the pleasure of shooting with one, but it makes some very nice images. This music video by one of the artists I follow was shot on the Ikonoskop as well.

-

Why does RAW DNG change depending on what software you use?

iaremrsir replied to Turboguard's topic in Cameras

You can try using the luma vs. sat curve and keep the saturation low in the deep shadows. That will reduce the appearance of color noise while retaining the texture.

-

The Blackmagic Video Assist, as well as the newer Atomos Flame and Inferno series. We're working on making the HDMI recording functionality more compatible with recorders. False color works on the raw data to let you know when your first channel clips. Because the D16 doesn't do any internal highlight recovery or noise reduction, we thought it'd be good to know this. It means you have anywhere from 1/2 to 2/3 of a stop more highlight range in the raw file than you'd get over HDMI. So in raw at ISO 800 you're gonna see close to six stops of overexposure range, but over HDMI you're gonna see around 5 1/3. I will ask about being able to separate the LCD from HDMI. Our goal is to make the current camera as good as it can be before we start trying to sell people a new one.

-

Why does RAW DNG change depending on what software you use?

iaremrsir replied to Turboguard's topic in Cameras

Premiere defaults to sRGB, which is brighter than BT.709. If I remember correctly, Adobe also applies a default curve before the conversion to sRGB. BMD Film also has a relatively low middle gray point and black point, at 392 and 36 respectively on a 10-bit scale, so BMD Film's middle gray is actually lower than sRGB's and BT.709's. Also, because each program uses different methods to do color conversions, each program can produce different results. Adobe uses the DNG SDK, while Resolve uses LibRaw, which is based on dcraw. DNGs are not like ARRIRAW, Sony RAW, or REDCODE. Each of those has a specific SDK, and using it is the only way you can process those raw files; to use a different method, you'd have to reverse engineer the format. But since DNG/TIFF is an open standard, it's not hard for programmers to put together their own processing pipeline. It just comes down to programmers using different methods to process the same data.
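The middle-gray comparison is easy to check. This Python sketch encodes 18% scene-linear gray with the sRGB encoding function (IEC 61966-2-1) and the BT.709 OETF, then compares both with BMD Film's quoted 10-bit middle gray of 392, normalized by 1023. It compares encoding functions only; what you actually see also depends on the display and on whatever extra curve the application applies, so it is a simplification rather than a model of Premiere or Resolve.

```python
def srgb_encode(l: float) -> float:
    """sRGB (IEC 61966-2-1) encoding of a linear value l in [0, 1]."""
    return 12.92 * l if l <= 0.0031308 else 1.055 * l ** (1 / 2.4) - 0.055

def bt709_oetf(l: float) -> float:
    """ITU-R BT.709 OETF."""
    return 4.5 * l if l < 0.018 else 1.099 * l ** 0.45 - 0.099

GRAY = 0.18
bmd_film_gray = 392 / 1023  # 10-bit code value quoted above, normalized to [0, 1]
print(f"BMD Film {bmd_film_gray:.3f}   BT.709 {bt709_oetf(GRAY):.3f}   sRGB {srgb_encode(GRAY):.3f}")
```

With the same scene value landing at three different signal levels, the same DNG will look different unless every program agrees on which transform it applies.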