paulinventome

Members

Posts: 162

Reputation: 87

Achievements: Active member (3/5)

Sigma fp and EVF-11 for other cameras... Could be possible

paulinventome replied to paulinventome's topic in Cameras

Good thoughts! I spoke to Sigma, who said it wasn't possible. Whether that's true or not I don't know; I must assume there are handshakes going on, because there is a switch on the EVF for flipping between LCD and EVF and that has to pass back into the camera somehow. I could rig something for power (you are right, that has to be the power source). I have extension cables for USB and HDMI, so if I power it then it should work as is; then I can swap the HDMI signal and see if that works... Despite what Sigma says it is still tempting, as officially I doubt they could say it would work (it was worth an ask). There's a new Leica M11 EVF as well... Hmmmm. cheers Paul

Sigma fp and EVF-11 for other cameras... Could be possible

paulinventome replied to paulinventome's topic in Cameras

I did; it's still quite big. I have a Gratical Eye and the OEYE is quite a bit longer. At the moment I'm using Hasselblad OVF prisms on the Komodo screen itself, which is 1440px across anyway. Very easy, but limited angles. I may speak to Portkeys actually; they just released an SDI version of their mini screen, which in some ways is more compact. thanks Paul

PannySVHS reacted to a post in a topic:

Sigma fp and EVF-11 for other cameras... Could be possible


So I just got an EVF-11 for the Sigma fp and it's a really nice, small, lightweight EVF. It uses the HDMI out, USB-C, and two prongs that I believe connect to the flash output on the side of the fp. I want to see if I can use it for my Komodo, which is crying out for a small EVF (the Zacuto stuff is too large; I have an Eye already and would happily get rid of it). So the first experiment was to get some cables to plug the Komodo into the HDMI (via an SDI converter) and then plug the USB-C from the camera into the EVF, thinking that maybe that would be enough to start with. But no go. Then I saw the two flash prongs and wondered whether they were power and the USB-C connection was control. So has anyone got any details on the pins for the flash connector on the side, or has anyone experimented? I'm just trying a proof of concept to see if it's possible; the EVF might be relying on control from the camera over that USB-C, in which case all bets are off... cheers Paul


JJHLH reacted to a post in a topic:

Sigma Fp review and interview / Cinema DNG RAW


Fairkid reacted to a post in a topic:

Sigma Fp review and interview / Cinema DNG RAW


Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

I think that's pretty unfair without knowing what we do. Most of my work is P3-based. Ironically, the vast majority of Apple devices have P3 screens, and there's lots of work targeting them. Rec. 2020 is taking hold in TVs and becoming more relevant, and of course HDR is not sRGB. VFX work isn't done in sRGB, and no professional workflow I've worked in is sRGB. All we're doing is using quarantine time to be geeks about our cameras. Just like to know, right? My main camera is a Red, not a Sigma fp, but the fp is really nice for what it is. It's just some fun, and along the way we all learn some new things (I hope!) cheers Paul

Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

I'm with you on all this, but I don't know what you need Sigma to supply... The two matrices inside each DNG handle the conversion between sensor space and XYZ for two illuminants. The resulting XYZ colours are not really bound by a colourspace as such, but clearly only the colours the sensor sees will be recorded. So the edges will not be straight; there's no colour gamut as such, just a spectral response. The issue (if there is one) is Resolve taking the XYZ and *then* turning it into a bound colourspace, 709. I still can't quite work out whether Resolve will only turn DNGs into 709 or not. So, as you say, for film people P3 is way more important than 709, and I am not 100% convinced the Resolve workflow opens that up. But I might be wrong, as it's proving quite difficult to test (those CIE scopes do appear to be a bit all over the place, and I don't think they are reliable). As I mentioned before, I would normally Nuke it, but at the moment the Sigma DNGs for some reason won't open. I could be missing something here, so I am wondering: what should Sigma supply? cheers Paul
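As a rough sketch of how those two matrices get used, assuming the DNG specification's conventions: ColorMatrix1 and ColorMatrix2 actually map XYZ to camera-native RGB, so they are blended for the shot's white balance (linearly in inverse colour temperature) and then inverted to go from sensor to XYZ. This is a simplified illustration: AnalogBalance, CameraCalibration, ForwardMatrix and white-point normalisation are left out, and the 2856 K / 6504 K calibration temperatures are assumed defaults (Standard Illuminant A and D65), not values read from a Sigma fp file.

```python
from typing import List

Matrix = List[List[float]]


def blend_weight(cct: float, cct1: float = 2856.0, cct2: float = 6504.0) -> float:
    """Weight given to ColorMatrix1, interpolated linearly in inverse temperature.

    Temperatures outside [cct1, cct2] are clamped to the nearer matrix.
    """
    inv, inv1, inv2 = 1.0 / cct, 1.0 / cct1, 1.0 / cct2
    w = (inv - inv2) / (inv1 - inv2)
    return min(1.0, max(0.0, w))


def mix(m1: Matrix, m2: Matrix, w: float) -> Matrix:
    """Element-wise w * m1 + (1 - w) * m2."""
    return [[w * a + (1.0 - w) * b for a, b in zip(r1, r2)] for r1, r2 in zip(m1, m2)]


def invert3(m: Matrix) -> Matrix:
    """Inverse of a 3x3 matrix via its adjugate."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    if abs(det) < 1e-12:
        raise ValueError("matrix is singular")
    adj = [
        [e * i - f * h, c * h - b * i, b * f - c * e],
        [f * g - d * i, a * i - c * g, c * d - a * f],
        [d * h - e * g, b * g - a * h, a * e - b * d],
    ]
    return [[x / det for x in row] for row in adj]


def camera_to_xyz(cm1: Matrix, cm2: Matrix, cct: float) -> Matrix:
    """Simplified sensor RGB -> XYZ matrix for a shot white-balanced at `cct` kelvin."""
    xyz_to_camera = mix(cm1, cm2, blend_weight(cct))  # DNG ColorMatrix direction
    return invert3(xyz_to_camera)


def apply(m: Matrix, v: List[float]) -> List[float]:
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[r][k] * v[k] for k in range(3)) for r in range(3)]
```

Usage would be `apply(camera_to_xyz(cm1, cm2, 5500.0), [r, g, b])` with the two matrices read from the file. Nothing in this step clips: the XYZ result is bounded only by what the sensor responded to, and any clamping happens later, when XYZ is converted into a bound space such as 709.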

Devon reacted to a post in a topic:

Sigma Fp review and interview / Cinema DNG RAW


Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

Hi Devon, I missed this, sorry. Unbound RGB is a floating-point representation of the colours with no upper or lower limits (well, infinity or max(float) really). If a colour is described by a finite range of numbers, 0...255, then it is bound between those values, but you also need to be able to say which red 255 actually is. That's where a colourspace comes in: each colourspace defines a different 'redness', so P3 has a more saturated red than 709. Many mathematical operations need bounds, otherwise the maths fails (divide, for example), whereas addition works fine on unbound values. There's a more in-depth explanation here, with pictures too! https://ninedegreesbelow.com/photography/unbounded-srgb-divide-blend-mode.html Hope that helps? cheers Paul
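To make "which red is 255?" concrete, here is a small sketch assuming linear light, a D65 white and the published Rec. 709 and P3-D65 primaries. It builds each space's RGB-to-XYZ matrix from its primaries, then expresses P3's pure red in 709 terms. The result has a red channel above 1 and small negative green and blue values: perfectly valid as unbound floats, but a bound 0-1 (or 0-255) pipeline has to clamp it, which turns it into 709's less saturated red.

```python
from typing import List, Sequence, Tuple

Matrix = List[List[float]]
XY = Tuple[float, float]

D65: XY = (0.3127, 0.3290)
REC709: Sequence[XY] = ((0.640, 0.330), (0.300, 0.600), (0.150, 0.060))
P3_D65: Sequence[XY] = ((0.680, 0.320), (0.265, 0.690), (0.150, 0.060))


def xy_to_xyz(xy: XY) -> List[float]:
    """XYZ of a chromaticity at luminance Y = 1."""
    x, y = xy
    return [x / y, 1.0, (1.0 - x - y) / y]


def invert3(m: Matrix) -> Matrix:
    """Inverse of a 3x3 matrix via its adjugate."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    adj = [
        [e * i - f * h, c * h - b * i, b * f - c * e],
        [f * g - d * i, a * i - c * g, c * d - a * f],
        [d * h - e * g, b * g - a * h, a * e - b * d],
    ]
    return [[x / det for x in row] for row in adj]


def apply(m: Matrix, v: Sequence[float]) -> List[float]:
    return [sum(m[r][k] * v[k] for k in range(3)) for r in range(3)]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[r][k] * b[k][c] for k in range(3)) for c in range(3)] for r in range(3)]


def rgb_to_xyz(primaries: Sequence[XY], white: XY = D65) -> Matrix:
    """Linear RGB -> XYZ: columns are the primaries, scaled so RGB (1, 1, 1) lands on white."""
    cols = [xy_to_xyz(p) for p in primaries]
    p = [[cols[c][r] for c in range(3)] for r in range(3)]
    s = apply(invert3(p), xy_to_xyz(white))
    return [[p[r][c] * s[c] for c in range(3)] for r in range(3)]


P3_TO_709 = matmul(invert3(rgb_to_xyz(REC709)), rgb_to_xyz(P3_D65))

p3_red_in_709 = apply(P3_TO_709, [1.0, 0.0, 0.0])         # unbound: R > 1, G and B < 0
clamped = [min(1.0, max(0.0, v)) for v in p3_red_in_709]  # bound: collapses to 709's red
```

`p3_red_in_709` comes out at roughly (1.22, -0.04, -0.02). A divide blend fed that negative green channel would return a negative result, which is the kind of breakage the linked article illustrates.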

Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

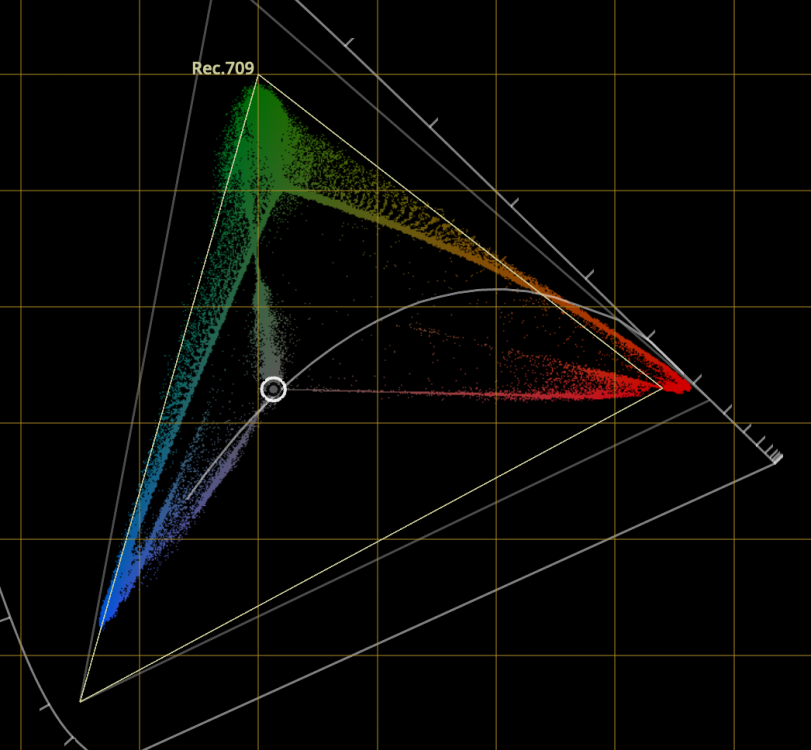

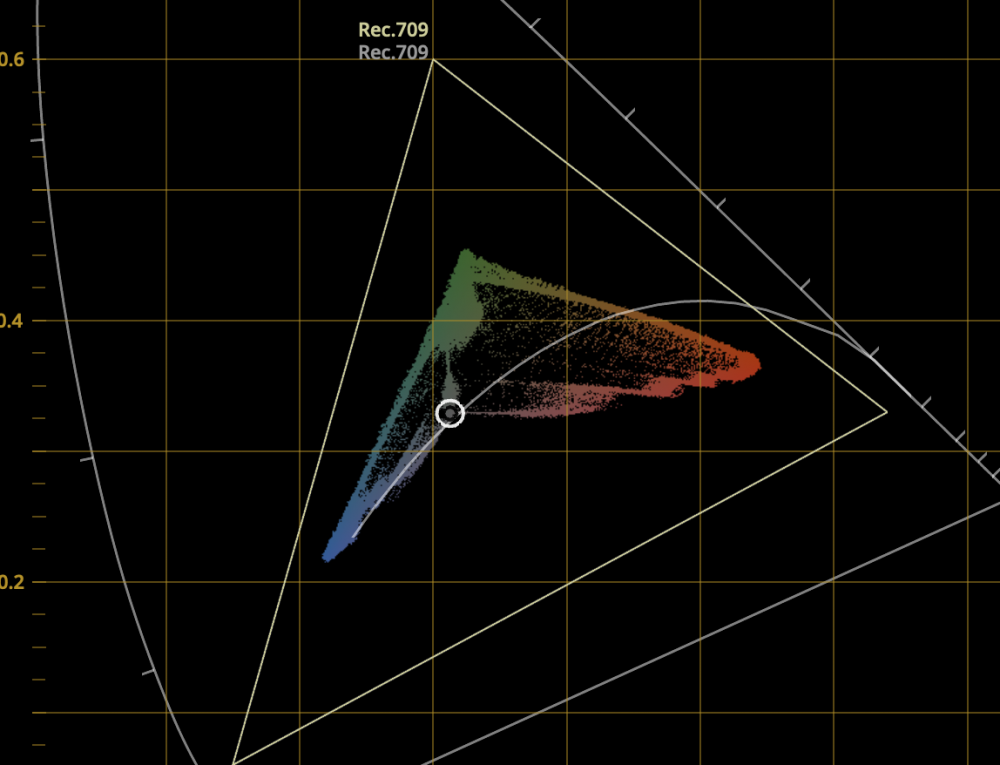

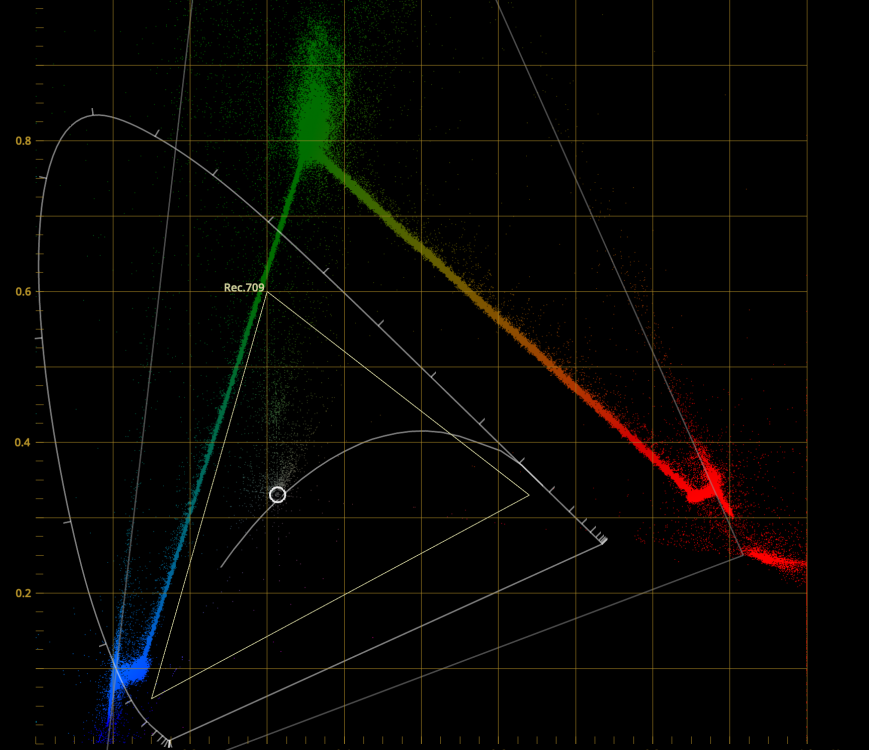

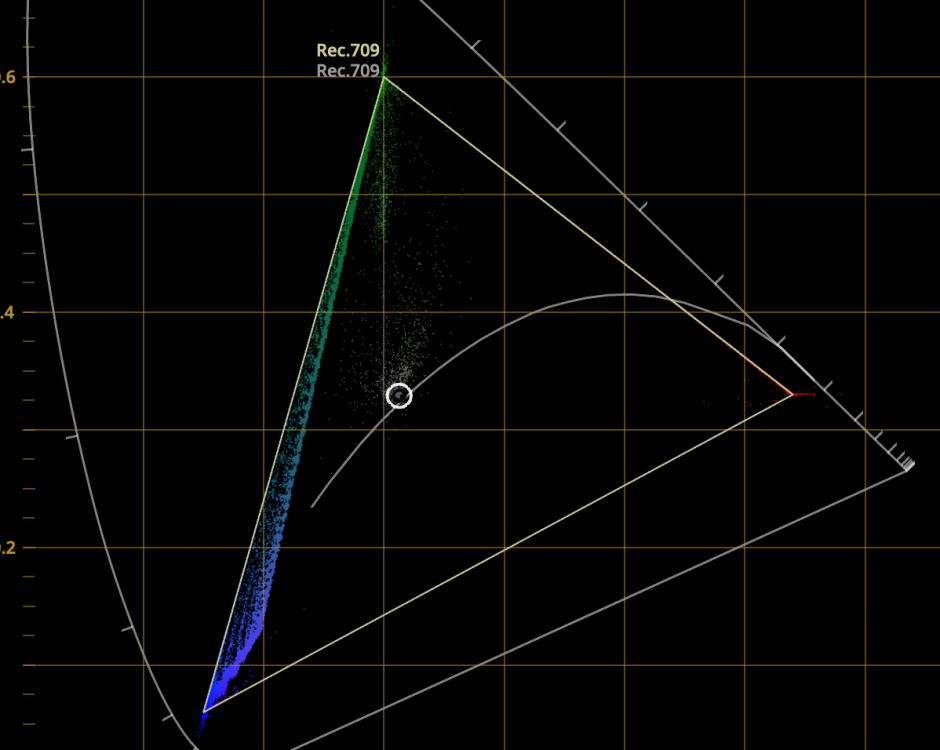

First, just to say how much I appreciate you taking the time to answer these esoteric questions! So I have a DNG which is a Macbeth chart shot with a reference P3 display behind it showing fully saturated R, G and B. So in this scene I hope I have pushed the sensor beyond 709 as a test. Resolve is set to DaVinci YRGB and not colour managed, so I am now able to change the Camera RAW settings. I set the colour space to Blackmagic Design and the gamma to Blackmagic Design Film. To start with, my timeline is set to P3 and I am using the CIE scopes; the first image I enclosed shows what I see. Firstly, I can see the response of the camera going beyond a primary triangle, so this is good. As you say, the spectral response is not a well-defined gamut and I think this display shows that. But my choice of timeline is P3, and it looks like that is working, with the colours hovering around 709, but this could be luck. Changing the timeline to 709 gives a reduced gamut, and likewise setting the timeline to BMD Film gives an exploded gamut view, so BMD Film is not clipping any colours. The 4th image is when I set everything to 709: those original colours beyond green and red appear to be clamped or gamut-mapped into 709. So I *think* I am seeing a native gamut beyond 709 in the DNG, but applying the normal DNG route seems to clamp the colours. But I could just be reading these diagrams wrong. Also, with BMD Film in Camera RAW, what should the timeline be set to, and should I be converting BMD Film manually into a space? I hope this makes sense; I've a feeling I might have lost the plot on the way... cheers Paul
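A CIE scope is essentially plotting chromaticity, so one way to sanity-check what it shows is to test sampled values numerically. The sketch below assumes you can export linear XYZ values for a patch (how is not shown); it converts them to xy and tests whether that point lies inside the Rec. 709 or P3-D65 triangle. It ignores brightness entirely, so it only answers the gamut question, not whether a value clips.

```python
from typing import Sequence, Tuple

XY = Tuple[float, float]

REC709: Sequence[XY] = ((0.640, 0.330), (0.300, 0.600), (0.150, 0.060))
P3_D65: Sequence[XY] = ((0.680, 0.320), (0.265, 0.690), (0.150, 0.060))


def xyz_to_xy(X: float, Y: float, Z: float) -> XY:
    """Project an XYZ value onto the CIE 1931 xy chromaticity plane."""
    total = X + Y + Z
    if total == 0:
        raise ValueError("black has no chromaticity")
    return (X / total, Y / total)


def _cross(o: XY, a: XY, p: XY) -> float:
    """z-component of (a - o) x (p - o); its sign says which side of edge o->a p is on."""
    return (a[0] - o[0]) * (p[1] - o[1]) - (a[1] - o[1]) * (p[0] - o[0])


def in_triangle(p: XY, tri: Sequence[XY]) -> bool:
    """True if p lies inside (or on an edge of) the gamut triangle `tri`."""
    d = [_cross(tri[k], tri[(k + 1) % 3], p) for k in range(3)]
    return not (min(d) < 0 < max(d))  # mixed signs mean p is outside
```

Usage is `in_triangle(xyz_to_xy(X, Y, Z), REC709)`. Patches that land inside P3 but outside 709 are exactly the colours a 709 pipeline has to clamp or gamut-map.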

paulinventome reacted to a post in a topic:

Sigma Fp review and interview / Cinema DNG RAW


Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

I don't know what the native space of the fp is, but we do get RAW from it, so hopefully there's no colourspace transform, and I want to know I am getting the best out of the camera. And I'm a geek and like to know. I *believe* that Resolve by default is debayering and delivering 709 space, and I *think* the sensor's native space is greater than that; it certainly was with Sony sensors I've had in the past. There was a whole thing with RAW on the FS700 where 99% of people weren't doing it right, and I worked with a colour scientist on a plug-in for SpeedGrade that managed to do it right and got stunning images from that combo (IMHO FS700+RAW is still one of the nicest 4K RAW cameras if you do the right thing). The problem with most workflows was that reds were out of gamut and being contaminated with negative green values in Resolve (this was v11/v12 maybe? I have screenshots of the scopes with negative values showing, and so many comparisons!). I understand that the matrices transform from sensor to XYZ, that there's no guarantee the sensor can see any particular colour, and that the sensor has a spectral response, not a defined gamut. I also know that if I was able to shine light across the full spectrum onto the sensor I could probably record the sensor response; I know someone in Australia who does this for multiple cameras. But within the Resolve ecosystem, how do I get it to give me all the colour in a suitably large space so I can see it? If I turn off colour management and manually use the gamut in the Camera RAW tab, Resolve appears to be *scaling* the colour to fit P3 or 709. What I want to see is the scene looking the same in 709 and P3, except where there is a super-saturated red (for example), where in P3 I want to see more tonality.
I'm battling Resolve a little because I don't know 100% what it is doing behind the scenes. If I could get the DNGs into Nuke, I am familiar enough with that side to work this out, but for some reason the Sigma DNGs don't work! thanks! Paul

Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

I got a reply from them last week; has it shut down recently? We need to double-check we're talking about the same thing. I see, halfway through, an exposure 'pulse': not a single-frame flash but more of a pulse. I believe it's an exposure change in linear space, but when viewed like this it affects the shadows much more. Can you post a direct link to the video and maybe enable download so we can go through it frame by frame? Mild pulsing is also very common in compression, so we want to make sure we're not looking at that... cheers Paul
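Once the clip can be downloaded, a crude frame-by-frame check is to follow the average level of a fixed set of shadow pixels. The sketch below assumes frames have already been decoded to linear luminance (decoding the DNGs or video is not shown). The shadow mask comes from the first frame so that a gain pulse doesn't change which pixels are measured; the 0.05 threshold, window and 3% tolerance are arbitrary, hypothetical values. Running it on the DNGs and on the compressed upload separately would help tell a sensor pulse from compression pumping.

```python
from statistics import median
from typing import List, Sequence

Frame = Sequence[Sequence[float]]  # one frame of linear luminance values, row by row


def shadow_means(frames: Sequence[Frame], shadow_max: float = 0.05) -> List[float]:
    """Mean level, per frame, of the pixels that are in shadow in the first frame."""
    first = frames[0]
    mask = [(r, c) for r, row in enumerate(first) for c, v in enumerate(row) if v <= shadow_max]
    if not mask:
        raise ValueError("no shadow pixels found in the first frame")
    return [sum(f[r][c] for r, c in mask) / len(mask) for f in frames]


def flag_pulses(means: Sequence[float], window: int = 5, tol: float = 0.03) -> List[int]:
    """Frames whose shadow mean differs from the median of their neighbours by more than `tol` (relative)."""
    half = window // 2
    flagged = []
    for i, m in enumerate(means):
        lo, hi = max(0, i - half), min(len(means), i + half + 1)
        neighbours = [means[j] for j in range(lo, hi) if j != i]
        if not neighbours:
            continue
        ref = median(neighbours)
        if ref > 0 and abs(m - ref) / ref > tol:
            flagged.append(i)
    return flagged
```

Using the median of neighbouring frames rather than the previous frame keeps a single bright frame from also flagging the frame after it.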

Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

Actually, you don't really want to work in a huge colourspace, because colour maths goes wrong and some things are more difficult to do. Extreme examples here: https://ninedegreesbelow.com/photography/unbounded-srgb-as-universal-working-space.html There were even issues with CGI rendering in P3 space. You want to work in a space large enough to contain your colour gamut. These days it's probably prudent to work in Rec. 2020 or one of the ACES spaces designed specifically as working spaces. What you say is essentially true. If you are mastering, then you'd work in a large space and then do a trim pass for each deliverable: film, digital cinema, TV, YouTube etc. You can transform from a large space into each deliverable, but if your source uses colours and values beyond your destination then a manual trim is the best approach. You see this more often now with HDR: a wide-colour-space HDR master is done and then additional passes are done for SDR etc. However, this is at the high end. Even in indie cinema the vast majority of grading and delivery is still done in 709, and we are still a little way off that changing. Bear in mind that the vast majority of cameras are really seeing in 709. P3 is plenty big enough; Rec. 2020 IMHO is a bit over the top. cheers Paul
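The "transform, then trim" point can be illustrated with a small sketch, assuming linear RGB in 0-1 and the Rec. 2020 to Rec. 709 linear matrix (both D65, the inverse of the commonly published 709-to-2020 conversion) rounded to four decimals. It converts pixels, reports what fraction land outside the destination, and returns the hard-clipped result a plain transform would give; that fraction is a rough, hypothetical measure of how much a manual trim pass would have to deal with.

```python
from typing import List, Sequence, Tuple

RGB = Tuple[float, float, float]

# Linear Rec. 2020 -> Rec. 709 (both D65), rounded to four decimals.
BT2020_TO_BT709 = (
    (1.6605, -0.5876, -0.0728),
    (-0.1246, 1.1329, -0.0083),
    (-0.0182, -0.1006, 1.1187),
)


def convert(rgb: Sequence[float], m=BT2020_TO_BT709) -> RGB:
    """Apply a 3x3 matrix to one linear RGB triplet."""
    r, g, b = (sum(row[k] * rgb[k] for k in range(3)) for row in m)
    return (r, g, b)


def gamut_report(pixels: Sequence[Sequence[float]], eps: float = 1e-3) -> Tuple[float, List[RGB]]:
    """Fraction of pixels that leave [0, 1] in the destination, plus a hard-clipped copy.

    `eps` absorbs the rounding in the matrix so that neutral greys are not flagged.
    """
    converted = [convert(p) for p in pixels]
    outside = sum(1 for c in converted if min(c) < -eps or max(c) > 1.0 + eps)
    clipped = [tuple(min(1.0, max(0.0, v)) for v in c) for c in converted]
    return outside / len(pixels), clipped
```

A pure Rec. 2020 green, for example, comes out with clearly negative red and blue in 709; hard clipping keeps it "green" but throws away the saturation distinction that a trim pass would handle deliberately.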

Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

If you're talking about the shadow flash halfway through, then yes, at ISO 400 only. Sigma are aware of it, and I'm awaiting some data as to whether we can guarantee it only happens at 400. It's the same for me, but only at 400, whereas others have seen it at other ISOs... cheers Paul

Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

That would be awesome! Yes, thank you. Sorry, I missed this as I was scanning the forum earlier. Much appreciated! Paul

Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

And is that sensor space defined by the matrices in the DNG, or is it built into Resolve specifically for BMD cameras? I did try to shoot a P3 image off my reference monitor, actually an RGB pattern in both P3 and 709 space. The idea is to try various ways of displaying it to see whether the sensor space is larger than 709. Results so far are inconclusive! Too many variables could mess up the results. If you turn off Resolve colour management then you can choose the space to debayer into. But if you choose P3 then the image is scaled into P3, correct? If you choose 709 it is scaled into that. So it seems that all of the options scale to fit the selected space. Can you suggest a workflow that might reveal the native gamut? For some reason I cannot get the Sigma DNGs into Nuke, otherwise I'd be able to confirm it there. In my experience with Sony sensors, the reds usually go way beyond 709. So end to end there's a bunch of things to check. One example, on the camera itself, is whether choosing a colourspace alters the matrices in the DNG, i.e. how much pre-processing happens to the colour in the camera. Cheers Paul
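One way to check whether the in-camera colourspace setting touches the matrices is to shoot the same scene twice with different settings and diff ColorMatrix1/ColorMatrix2 between the files. The sketch below is a deliberately minimal reader, assuming a classic (non-BigTIFF) DNG with the matrices stored in IFD0 as SRATIONAL values under tags 50721 and 50722, as the DNG specification describes; it does no other validation, and a full metadata tool such as ExifTool would be the sturdier option.

```python
import struct
from typing import Dict, List

COLOR_MATRIX_TAGS = {50721: "ColorMatrix1", 50722: "ColorMatrix2"}
SRATIONAL = 10  # TIFF field type: pair of signed 32-bit ints (numerator, denominator)


def read_color_matrices(data: bytes) -> Dict[str, List[float]]:
    """Return ColorMatrix1/2 (row-major floats) from IFD0 of a DNG/TIFF byte string."""
    if data[:2] == b"II":
        e = "<"  # little-endian
    elif data[:2] == b"MM":
        e = ">"  # big-endian
    else:
        raise ValueError("not a TIFF/DNG file")
    magic, ifd0 = struct.unpack_from(e + "HI", data, 2)
    if magic != 42:
        raise ValueError("unexpected TIFF magic number (BigTIFF is not handled)")
    (n_entries,) = struct.unpack_from(e + "H", data, ifd0)
    found: Dict[str, List[float]] = {}
    for k in range(n_entries):
        tag, typ, count, offset = struct.unpack_from(e + "HHII", data, ifd0 + 2 + 12 * k)
        if tag in COLOR_MATRIX_TAGS and typ == SRATIONAL:
            # 9 rationals = 72 bytes, so the entry holds an offset, not inline data
            raw = struct.unpack_from(e + "%di" % (2 * count), data, offset)
            found[COLOR_MATRIX_TAGS[tag]] = [raw[i] / raw[i + 1] for i in range(0, len(raw), 2)]
    return found


def max_difference(a: Dict[str, List[float]], b: Dict[str, List[float]]) -> Dict[str, float]:
    """Largest absolute element difference for each matrix present in both files."""
    return {name: max(abs(x - y) for x, y in zip(a[name], b[name])) for name in a if name in b}
```

To use it, pass each file's bytes (for example from `open(path, "rb").read()`, with hypothetical paths for the two test shots) to `read_color_matrices` and compare the results with `max_difference`. Identical matrices would suggest the colourspace setting is not being baked into the DNG colour matrices.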

Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

Some small compensation for the lockdown. I've been taking mine out on our daily exercise. It's pretty quiet around here, as expected. The dogs are getting more walks than they've ever had in their lives and must be wondering what's going on... I am probably heading towards the 11672, the latest Summicron version. I like its bokeh. As you say, getting a used copy of a lens this new is difficult. Have fun though: avoid checking for flicker in the shadows and enjoy the cam first!! cheers Paul

paulinventome reacted to a post in a topic:

Sigma Fp review and interview / Cinema DNG RAW


Sigma Fp review and interview / Cinema DNG RAW

paulinventome replied to Andrew - EOSHD's topic in Cameras

Thanks for doing that! It confirms that the fp is probably similar to the a7S. The f/4 is clearly smeared, as expected. I'd hoped that with no OLPF it would be closer to an M camera. So it seems that it's the latest versions I will be looking for... The 28 'cron seems spectacular in most ways. The 35 is too close to the 50, and I find the bokeh of the 35 a little harsher than the others (of course there are so many versions that it's difficult to tell sometimes!) Thanks again Paul